Kubernetes Blog

- Announcing Ingress2Gateway 1.0: Your Path to Gateway API

- Running Agents on Kubernetes with Agent Sandbox

- Securing Production Debugging in Kubernetes

- The Invisible Rewrite: Modernizing the Kubernetes Image Promoter

- Announcing the AI Gateway Working Group

- Before You Migrate: Five Surprising Ingress-NGINX Behaviors You Need to Know

- Spotlight on SIG Architecture: API Governance

- Introducing Node Readiness Controller

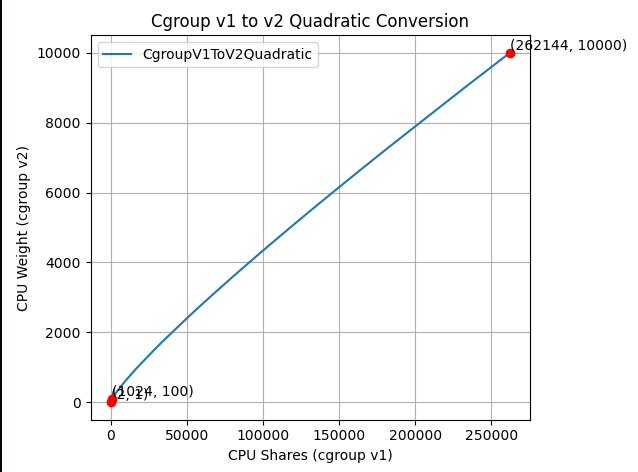

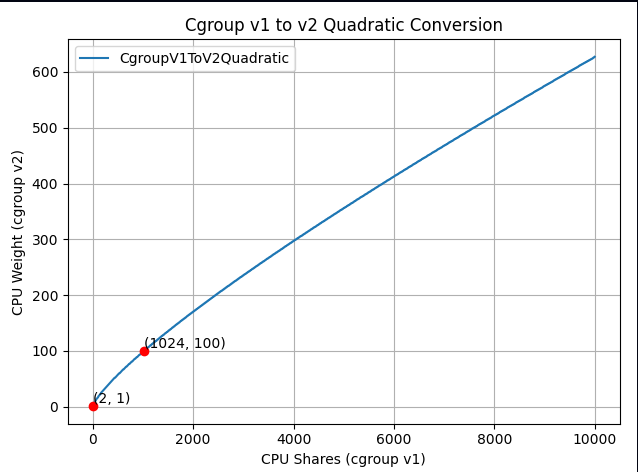

- New Conversion from cgroup v1 CPU Shares to v2 CPU Weight

- Ingress NGINX: Statement from the Kubernetes Steering and Security Response Committees

- Experimenting with Gateway API using kind

- Cluster API v1.12: Introducing In-place Updates and Chained Upgrades

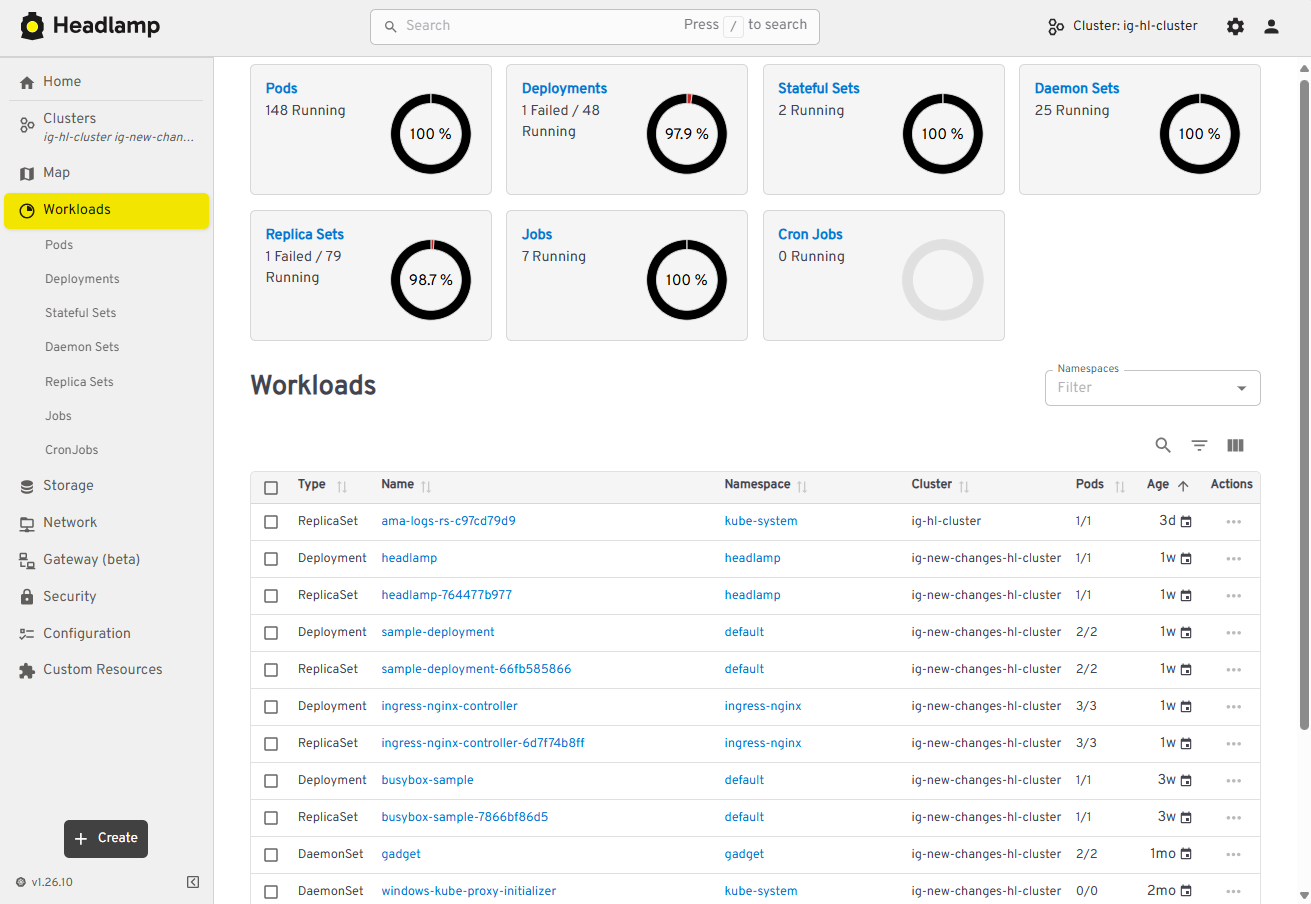

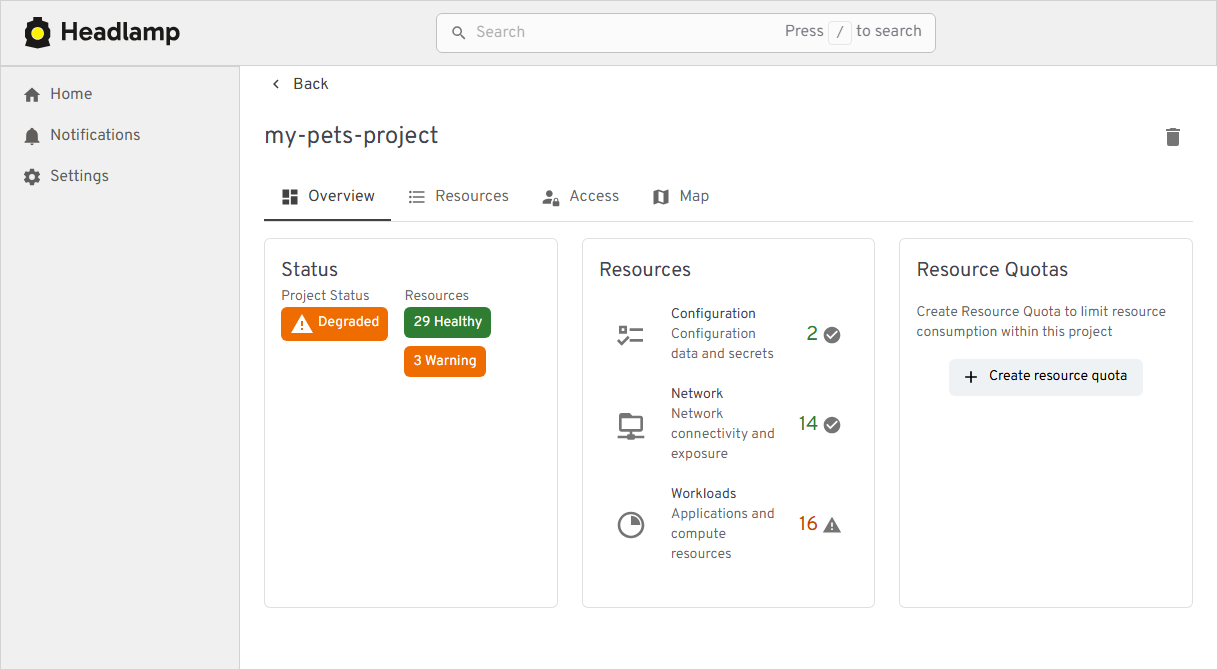

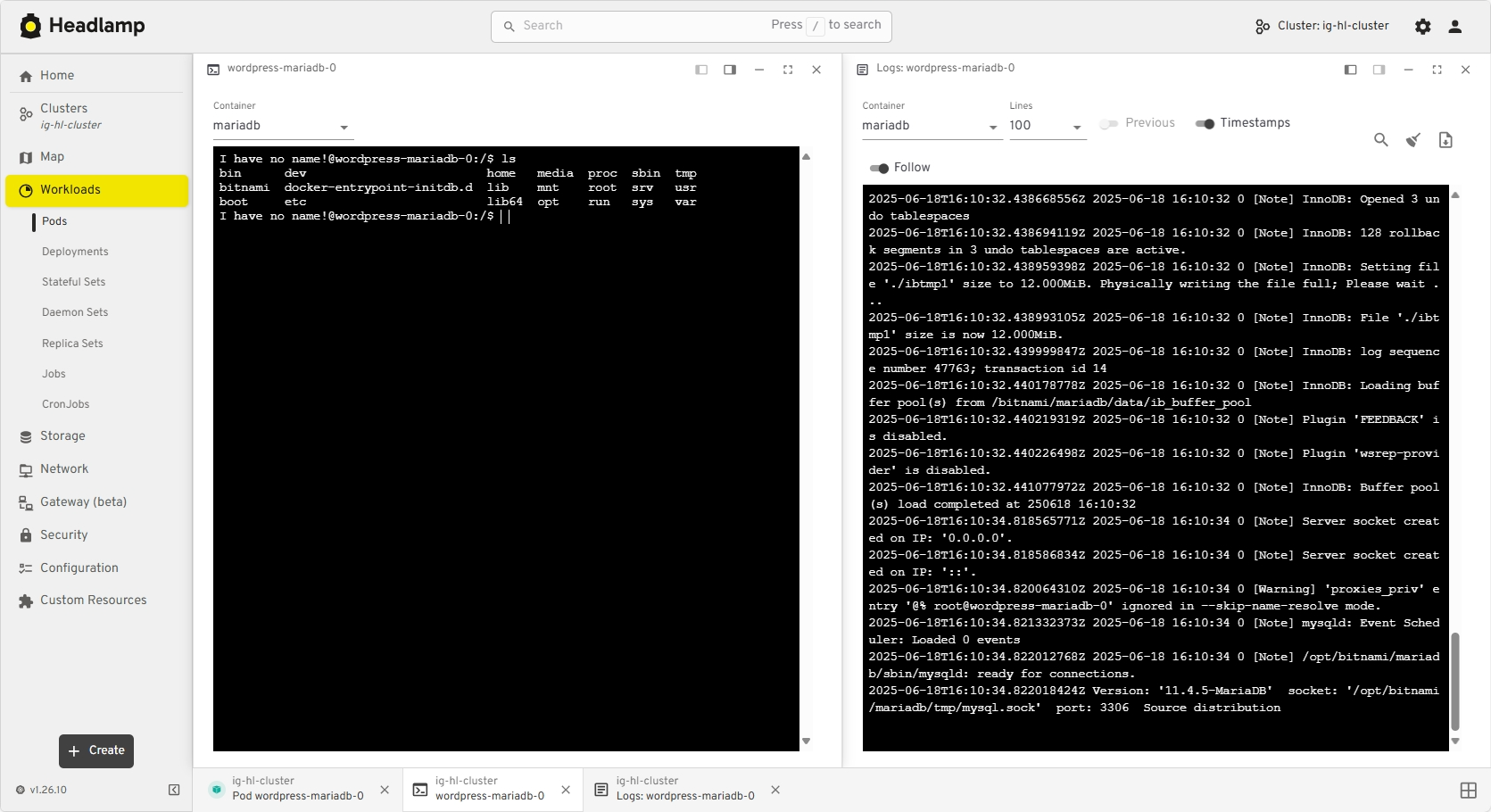

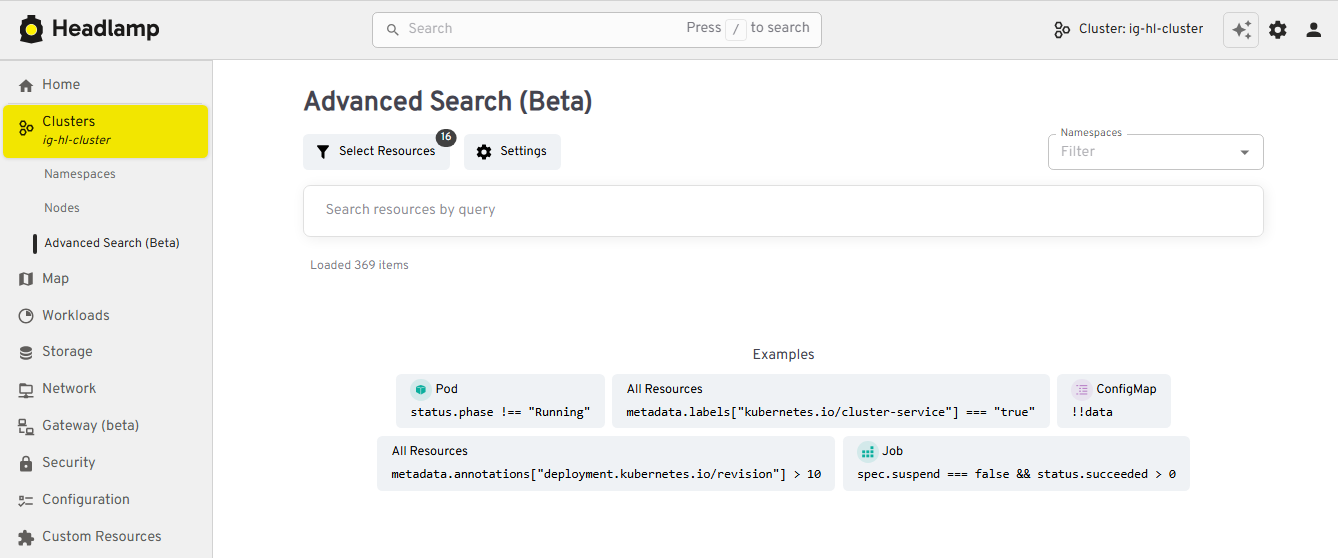

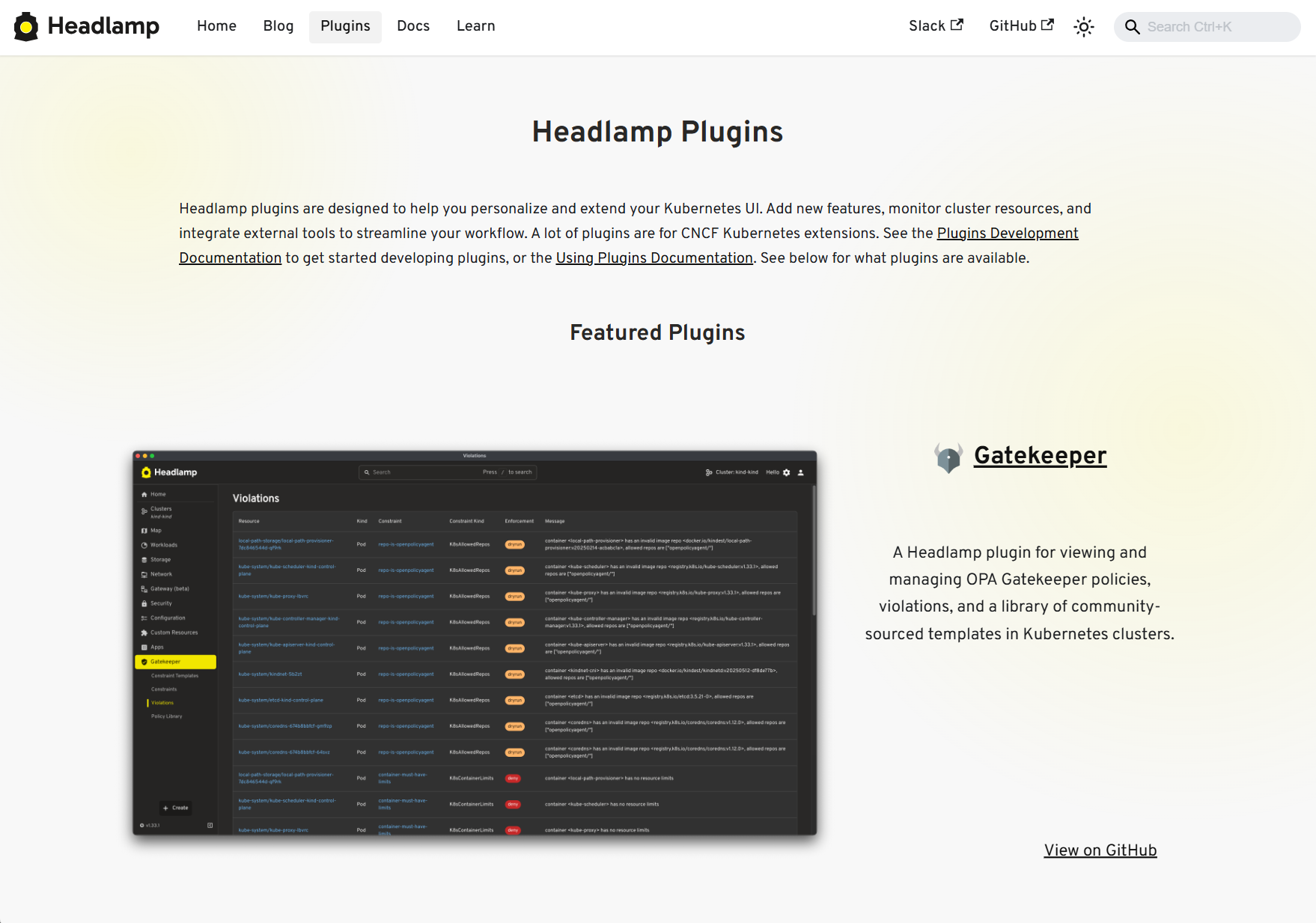

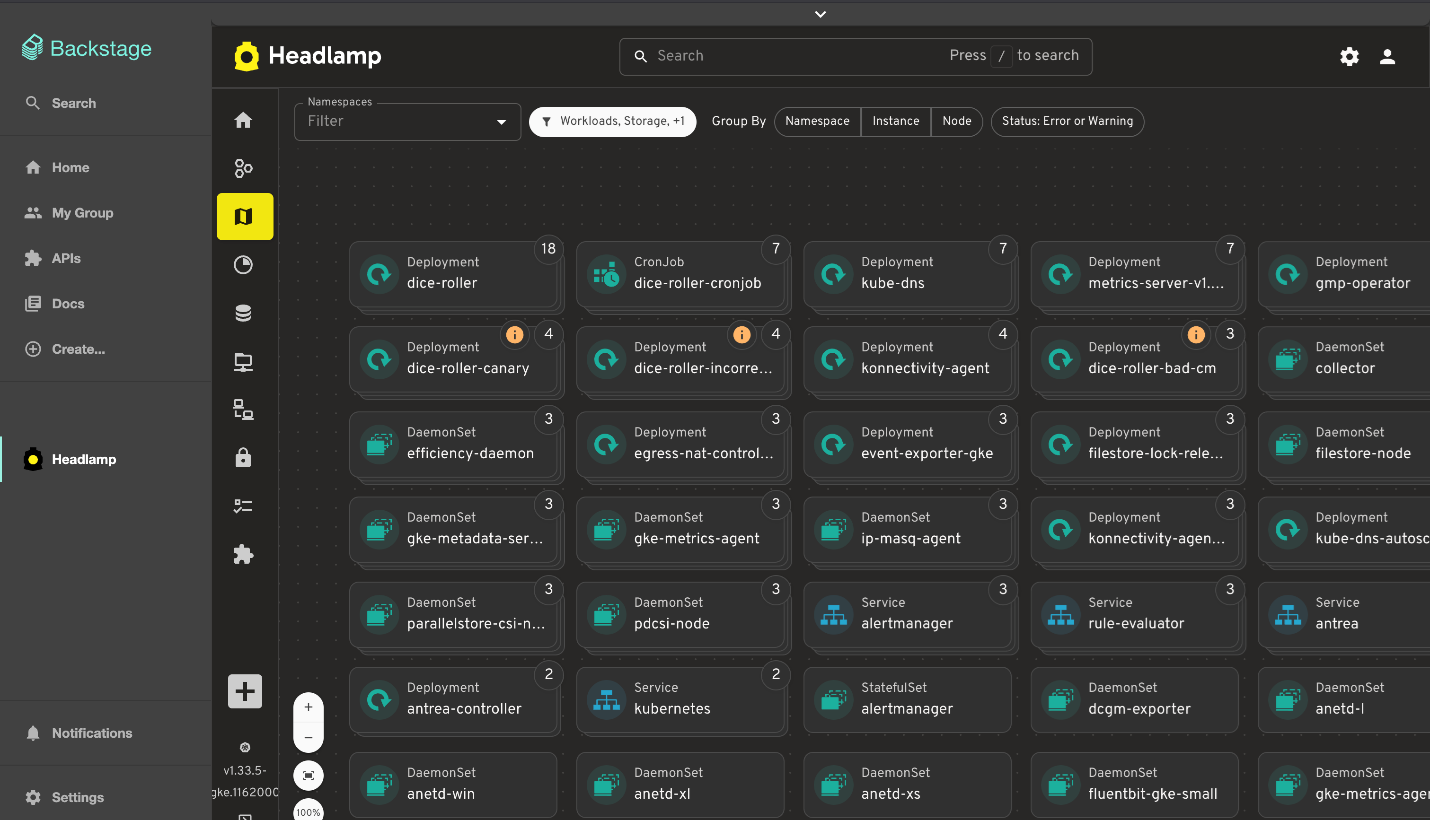

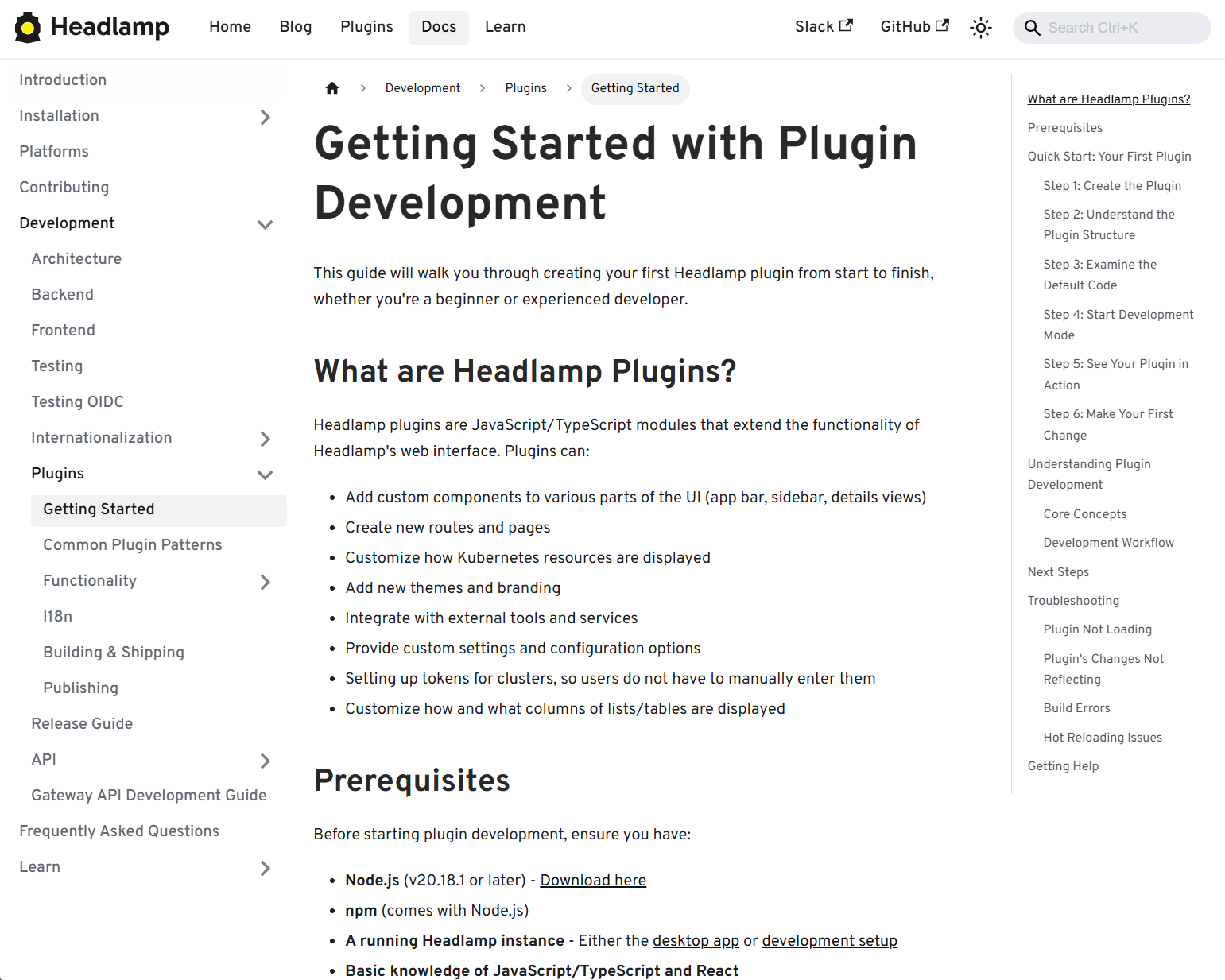

- Headlamp in 2025: Project Highlights

- Announcing the Checkpoint/Restore Working Group

- Uniform API server access using clientcmd

- Kubernetes v1.35: Restricting executables invoked by kubeconfigs via exec plugin allowList added to kuberc

- Kubernetes v1.35: Mutable PersistentVolume Node Affinity (alpha)

- Kubernetes v1.35: A Better Way to Pass Service Account Tokens to CSI Drivers

- Kubernetes v1.35: Extended Toleration Operators to Support Numeric Comparisons (Alpha)

- Kubernetes v1.35: New level of efficiency with in-place Pod restart

- Kubernetes 1.35: Enhanced Debugging with Versioned z-pages APIs

- Kubernetes v1.35: Watch Based Route Reconciliation in the Cloud Controller Manager

- Kubernetes v1.35: Introducing Workload Aware Scheduling

- Kubernetes v1.35: Fine-grained Supplemental Groups Control Graduates to GA

- Kubernetes v1.35: Kubelet Configuration Drop-in Directory Graduates to GA

- Avoiding Zombie Cluster Members When Upgrading to etcd v3.6

- Kubernetes 1.35: In-Place Pod Resize Graduates to Stable

- Kubernetes v1.35: Job Managed By Goes GA

- Kubernetes v1.35: Timbernetes (The World Tree Release)

- Kubernetes v1.35 Sneak Peek

- Kubernetes Configuration Good Practices

- Ingress NGINX Retirement: What You Need to Know

- Announcing the 2025 Steering Committee Election Results

- Gateway API 1.4: New Features

- 7 Common Kubernetes Pitfalls (and How I Learned to Avoid Them)

- Spotlight on Policy Working Group

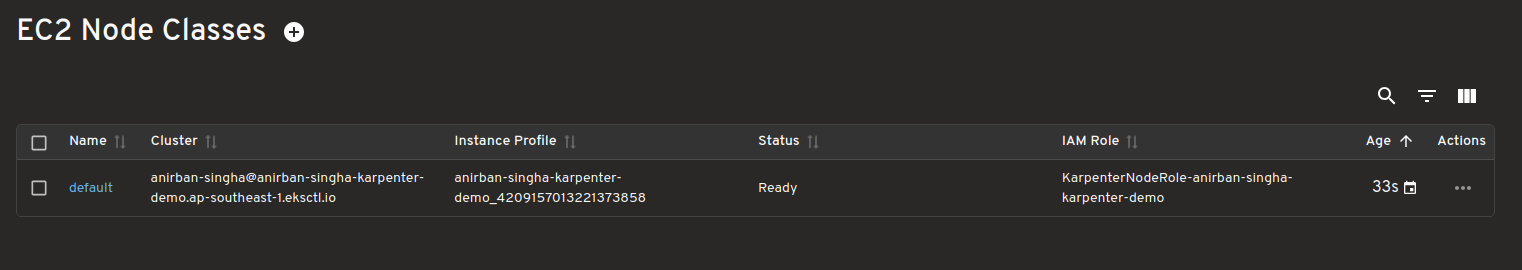

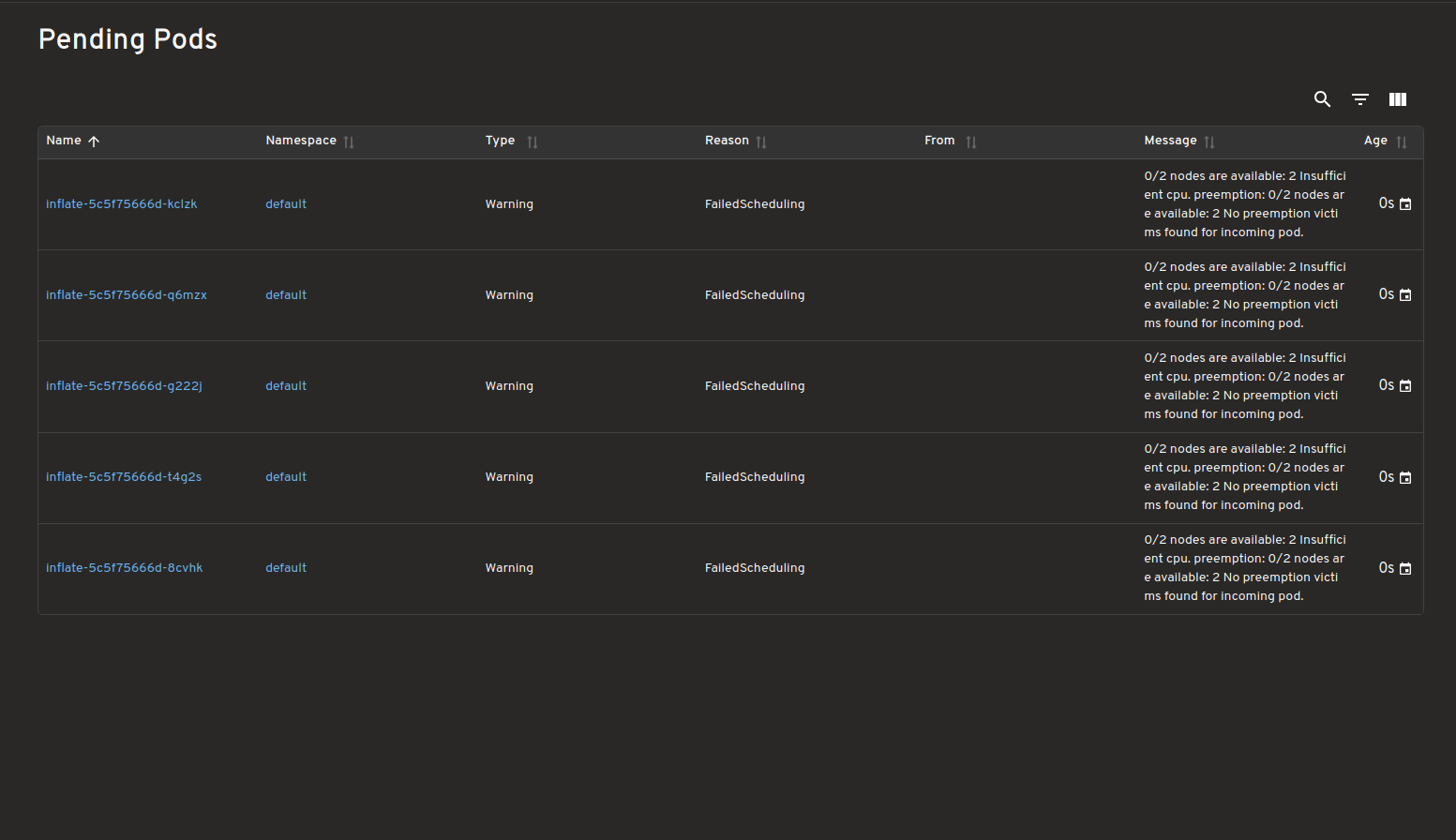

- Introducing Headlamp Plugin for Karpenter - Scaling and Visibility

- Announcing Changed Block Tracking API support (alpha)

- Kubernetes v1.34: Pod Level Resources Graduated to Beta

- Kubernetes v1.34: Recovery From Volume Expansion Failure (GA)

- Kubernetes v1.34: DRA Consumable Capacity

- Kubernetes v1.34: Pods Report DRA Resource Health

- Kubernetes v1.34: Moving Volume Group Snapshots to v1beta2

- Kubernetes v1.34: Decoupled Taint Manager Is Now Stable

- Kubernetes v1.34: Autoconfiguration for Node Cgroup Driver Goes GA

- Kubernetes v1.34: Mutable CSI Node Allocatable Graduates to Beta

- Kubernetes v1.34: Use An Init Container To Define App Environment Variables

- Kubernetes v1.34: Snapshottable API server cache

- Kubernetes v1.34: VolumeAttributesClass for Volume Modification GA

- Kubernetes v1.34: Pod Replacement Policy for Jobs Goes GA

- Kubernetes v1.34: PSI Metrics for Kubernetes Graduates to Beta

- Kubernetes v1.34: Service Account Token Integration for Image Pulls Graduates to Beta

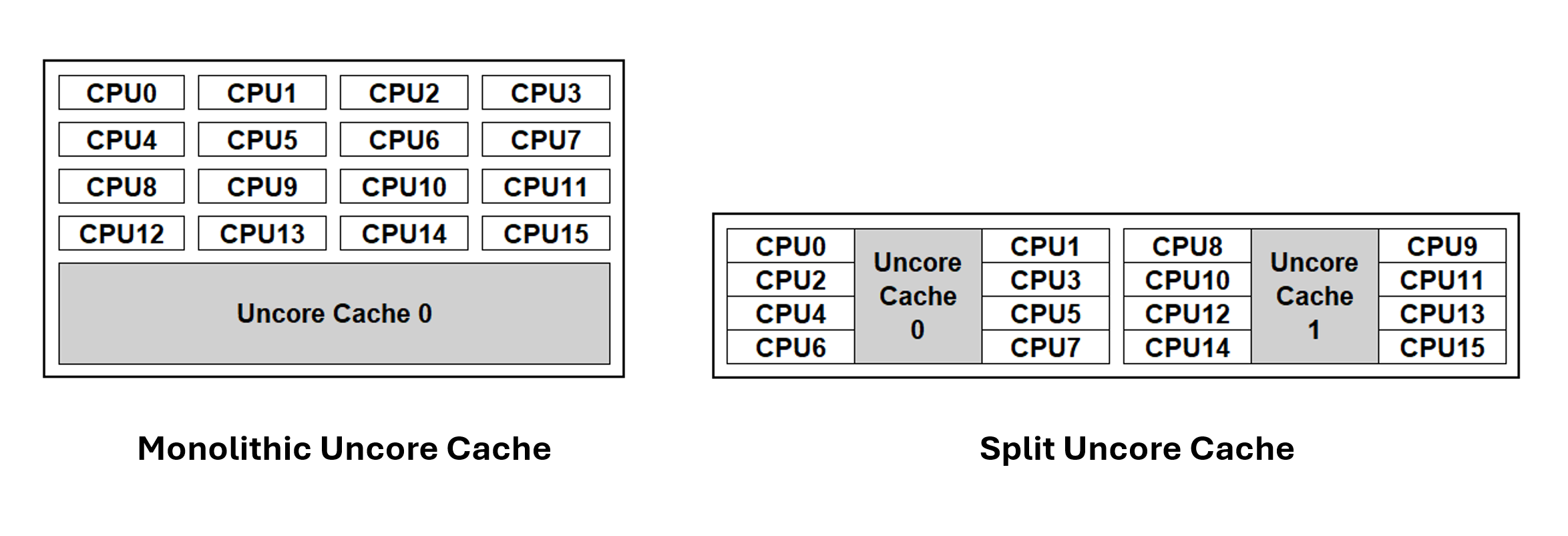

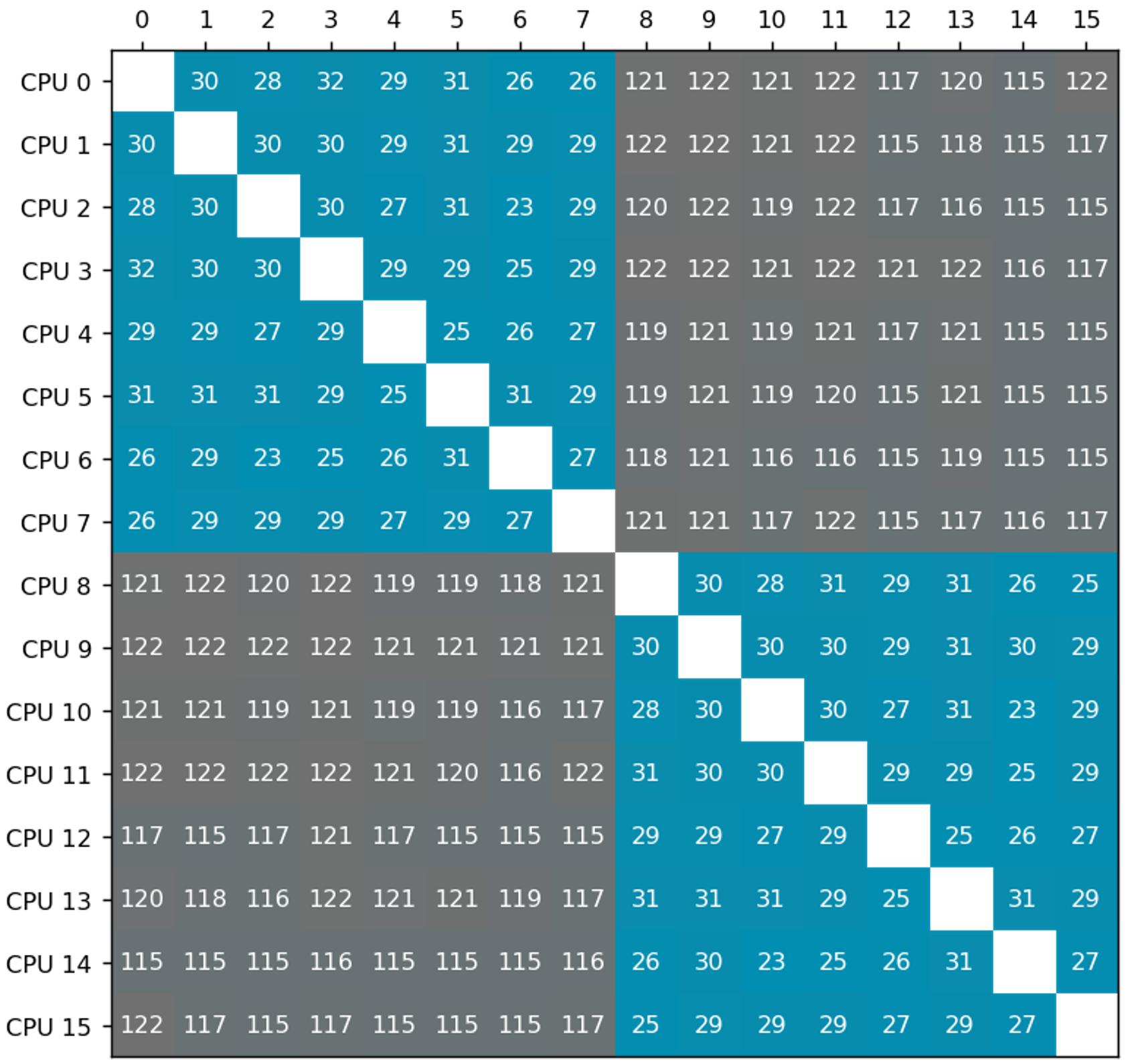

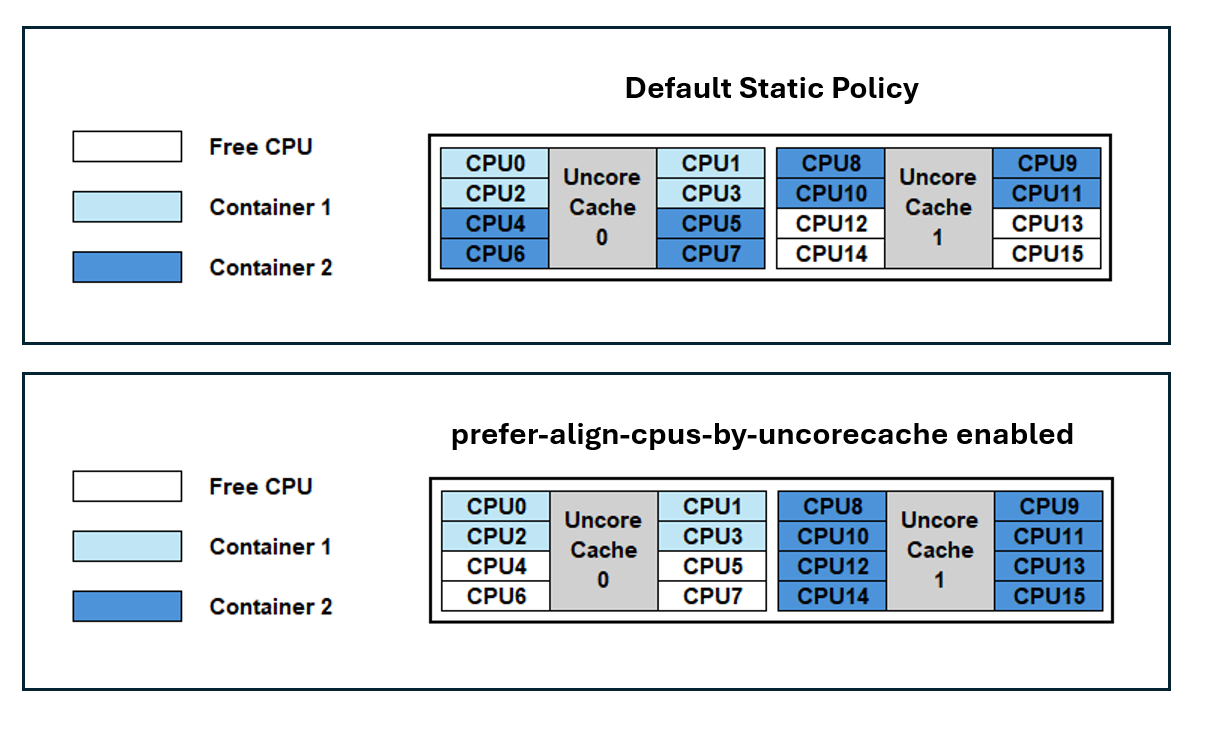

- Kubernetes v1.34: Introducing CPU Manager Static Policy Option for Uncore Cache Alignment

- Kubernetes v1.34: DRA has graduated to GA

- Kubernetes v1.34: Finer-Grained Control Over Container Restarts

- Kubernetes v1.34: User preferences (kuberc) are available for testing in kubectl 1.34

- Kubernetes v1.34: Of Wind & Will (O' WaW)

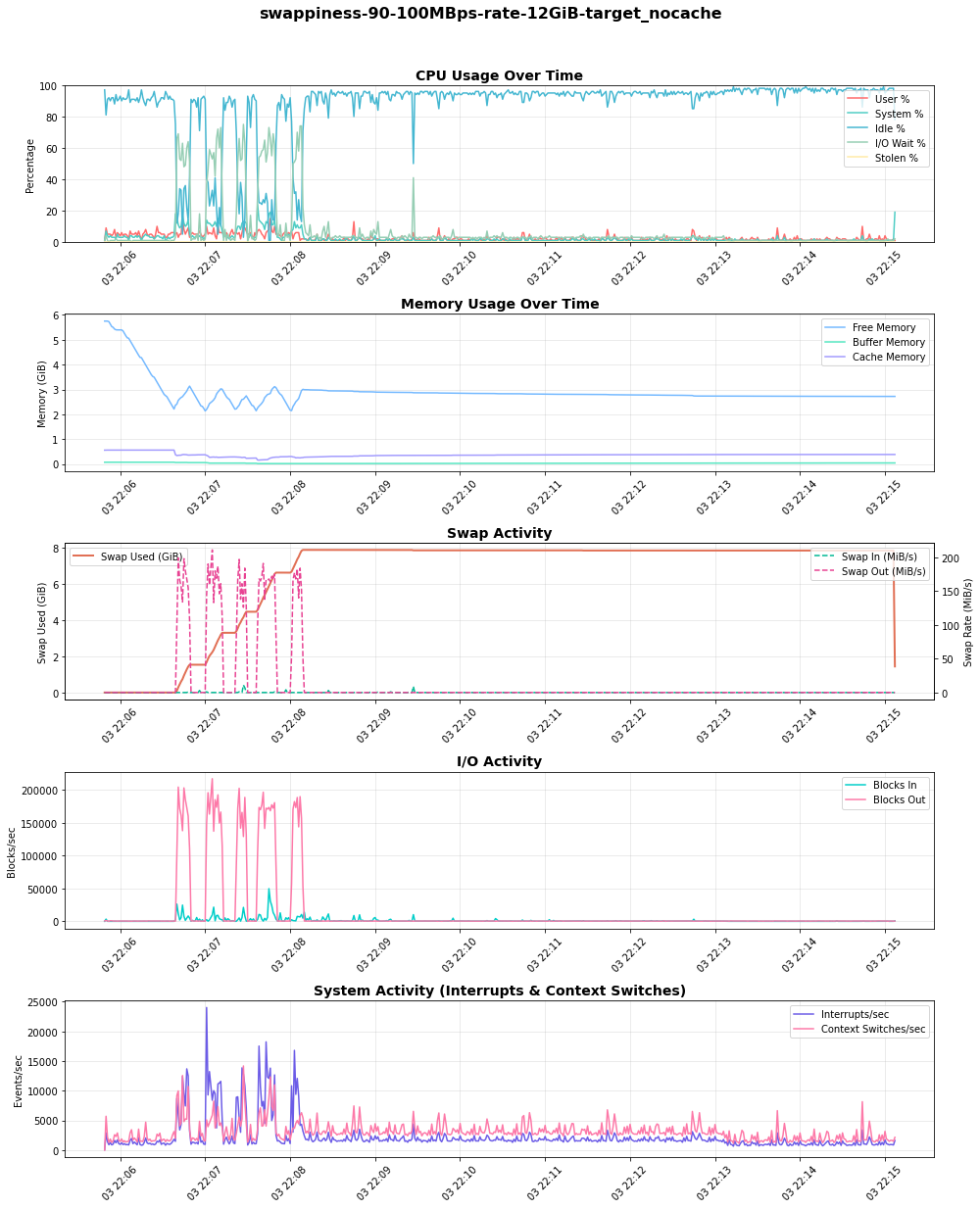

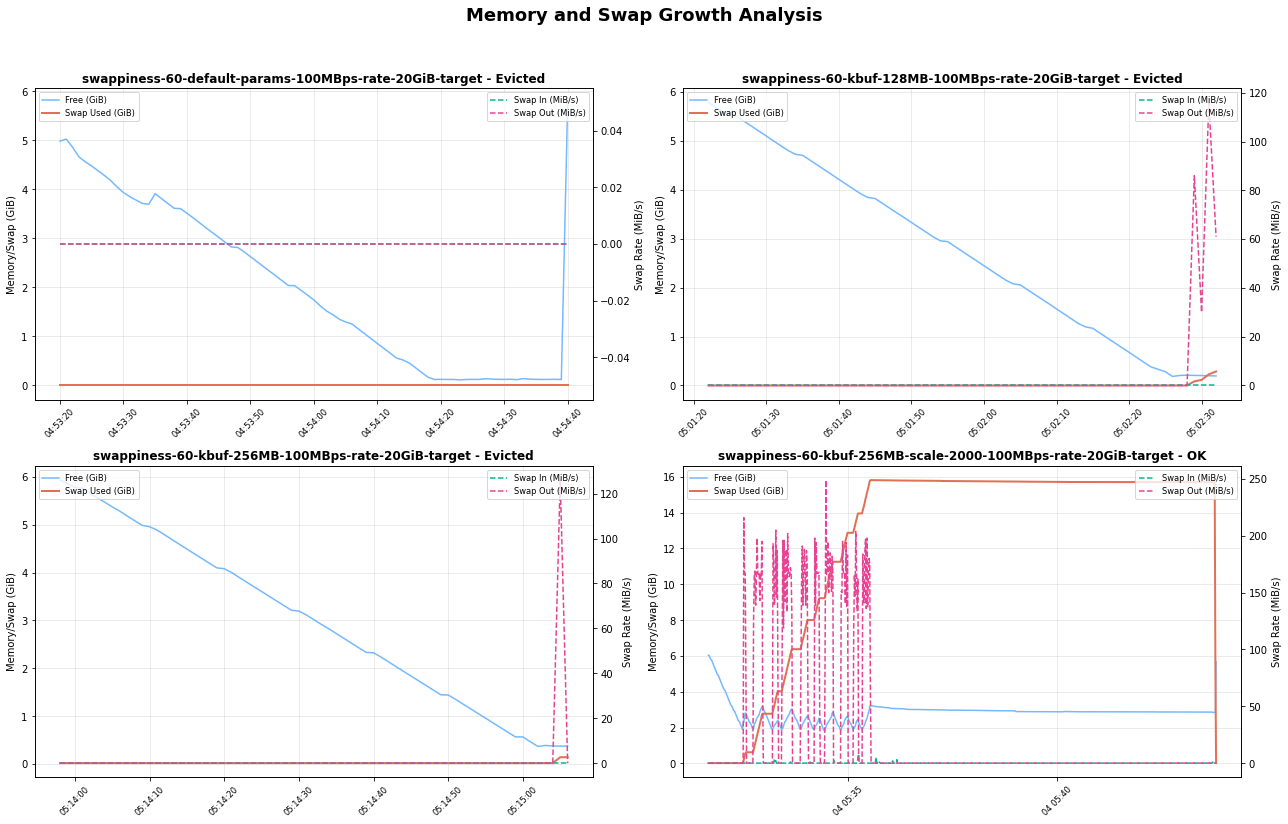

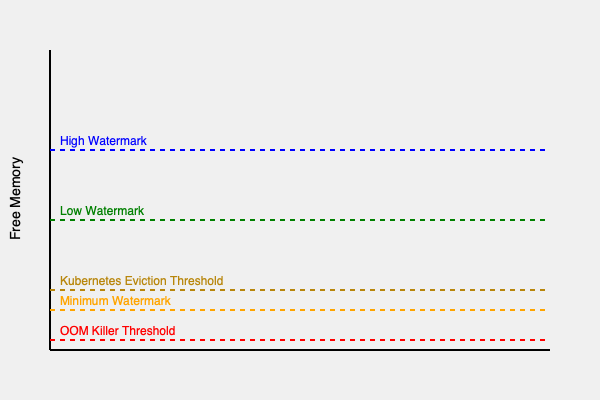

- Tuning Linux Swap for Kubernetes: A Deep Dive

- Introducing Headlamp AI Assistant

- Kubernetes v1.34 Sneak Peek

- Post-Quantum Cryptography in Kubernetes

- Navigating Failures in Pods With Devices

- Image Compatibility In Cloud Native Environments

- Changes to Kubernetes Slack

- Enhancing Kubernetes Event Management with Custom Aggregation

- Introducing Gateway API Inference Extension

- Start Sidecar First: How To Avoid Snags

- Gateway API v1.3.0: Advancements in Request Mirroring, CORS, Gateway Merging, and Retry Budgets

- Kubernetes v1.33: In-Place Pod Resize Graduated to Beta

- Announcing etcd v3.6.0

- Kubernetes 1.33: Job's SuccessPolicy Goes GA

- Kubernetes v1.33: Updates to Container Lifecycle

- Kubernetes v1.33: Job's Backoff Limit Per Index Goes GA

- Kubernetes v1.33: Image Pull Policy the way you always thought it worked!

- Kubernetes v1.33: Streaming List responses

- Kubernetes 1.33: Volume Populators Graduate to GA

- Kubernetes v1.33: From Secrets to Service Accounts: Kubernetes Image Pulls Evolved

- Kubernetes v1.33: Fine-grained SupplementalGroups Control Graduates to Beta

- Kubernetes v1.33: Prevent PersistentVolume Leaks When Deleting out of Order graduates to GA

- Kubernetes v1.33: Mutable CSI Node Allocatable Count

- Kubernetes v1.33: New features in DRA

- Kubernetes v1.33: Storage Capacity Scoring of Nodes for Dynamic Provisioning (alpha)

- Kubernetes v1.33: Image Volumes graduate to beta!

- Kubernetes v1.33: HorizontalPodAutoscaler Configurable Tolerance

- Kubernetes v1.33: User Namespaces enabled by default!

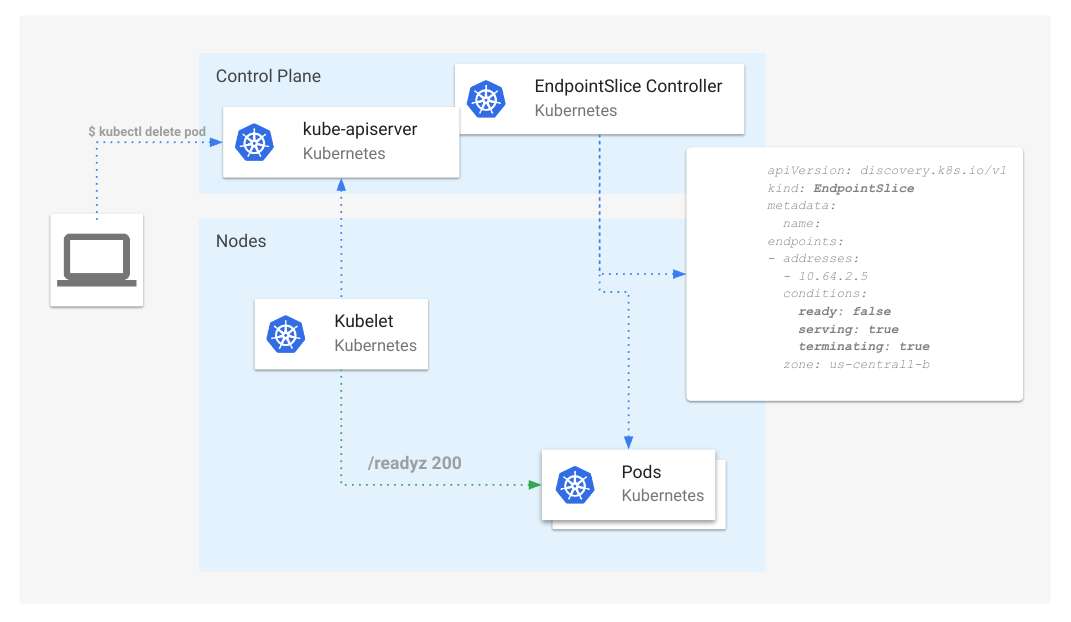

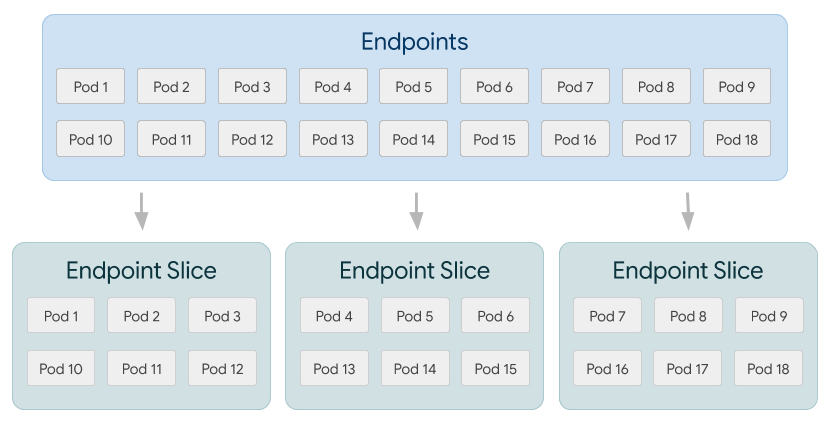

- Kubernetes v1.33: Continuing the transition from Endpoints to EndpointSlices

- Kubernetes v1.33: Octarine

- Kubernetes Multicontainer Pods: An Overview

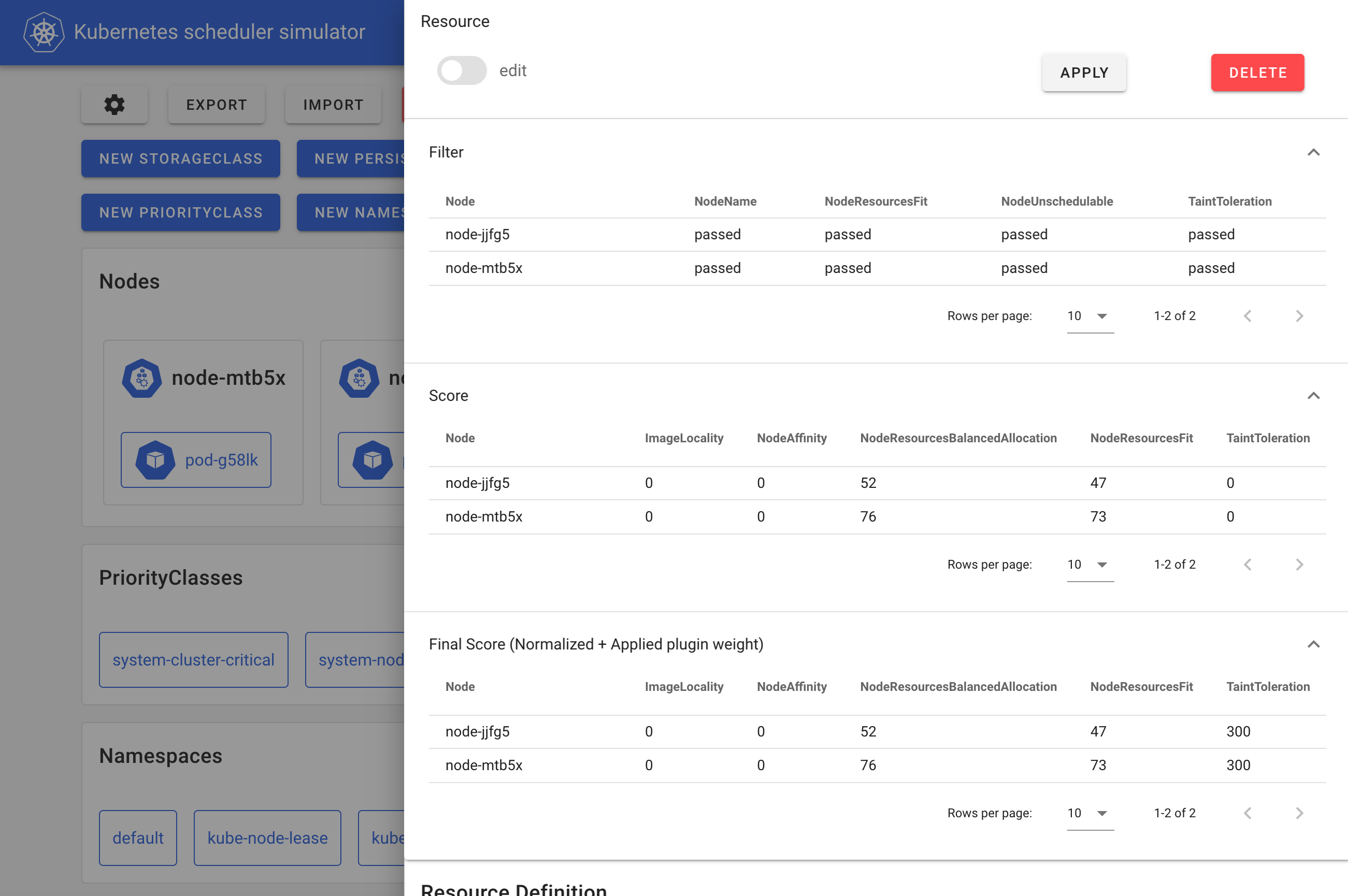

- Introducing kube-scheduler-simulator

- Kubernetes v1.33 sneak peek

- Fresh Swap Features for Linux Users in Kubernetes 1.32

- Ingress-nginx CVE-2025-1974: What You Need to Know

- Introducing JobSet

- Spotlight on SIG Apps

- Spotlight on SIG etcd

- NFTables mode for kube-proxy

- The Cloud Controller Manager Chicken and Egg Problem

- Spotlight on SIG Architecture: Enhancements

- Kubernetes 1.32: Moving Volume Group Snapshots to Beta

- Enhancing Kubernetes API Server Efficiency with API Streaming

- Kubernetes v1.32 Adds A New CPU Manager Static Policy Option For Strict CPU Reservation

- Kubernetes v1.32: Memory Manager Goes GA

- Kubernetes v1.32: QueueingHint Brings a New Possibility to Optimize Pod Scheduling

- Kubernetes v1.32: Penelope

- Gateway API v1.2: WebSockets, Timeouts, Retries, and More

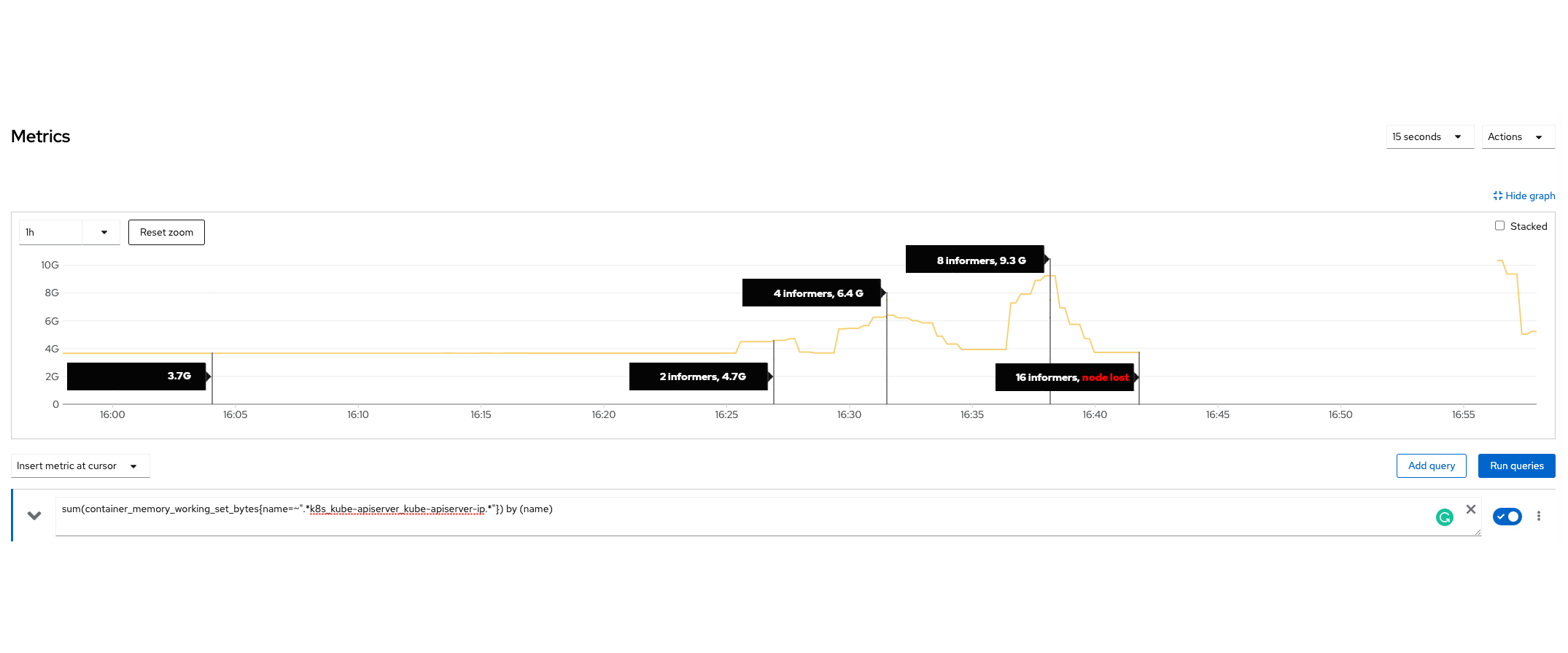

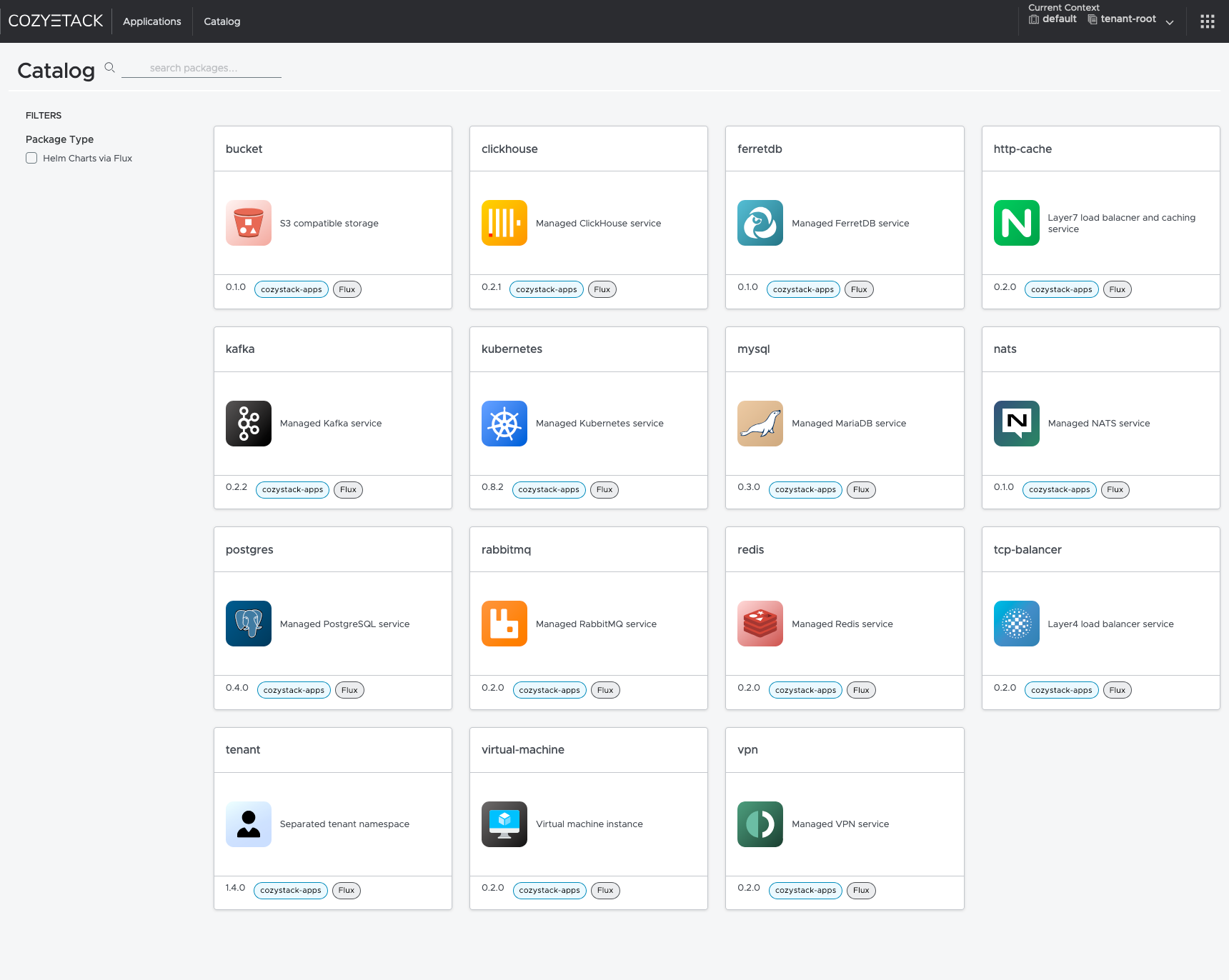

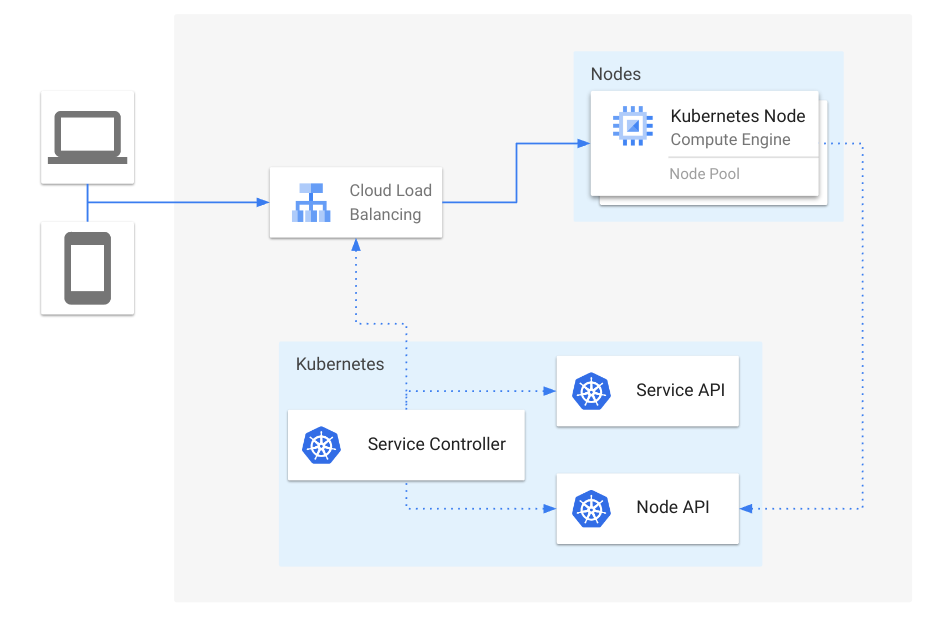

- How we built a dynamic Kubernetes API Server for the API Aggregation Layer in Cozystack

- Kubernetes v1.32 sneak peek

- Spotlight on Kubernetes Upstream Training in Japan

- Announcing the 2024 Steering Committee Election Results

- Spotlight on CNCF Deaf and Hard-of-hearing Working Group (DHHWG)

- Spotlight on SIG Scheduling

- Kubernetes v1.31: kubeadm v1beta4

- Kubernetes 1.31: Custom Profiling in Kubectl Debug Graduates to Beta

- Kubernetes 1.31: Fine-grained SupplementalGroups control

- Kubernetes v1.31: New Kubernetes CPUManager Static Policy: Distribute CPUs Across Cores

- Kubernetes 1.31: Autoconfiguration For Node Cgroup Driver (beta)

- Kubernetes 1.31: Streaming Transitions from SPDY to WebSockets

- Kubernetes 1.31: Pod Failure Policy for Jobs Goes GA

- Kubernetes 1.31: MatchLabelKeys in PodAffinity graduates to beta

- Kubernetes 1.31: Prevent PersistentVolume Leaks When Deleting out of Order

- Kubernetes 1.31: Read Only Volumes Based On OCI Artifacts (alpha)

- Kubernetes 1.31: VolumeAttributesClass for Volume Modification Beta

- Kubernetes v1.31: Accelerating Cluster Performance with Consistent Reads from Cache

- Kubernetes 1.31: Moving cgroup v1 Support into Maintenance Mode

- Kubernetes v1.31: PersistentVolume Last Phase Transition Time Moves to GA

- Kubernetes v1.31: Elli

- Introducing Feature Gates to Client-Go: Enhancing Flexibility and Control

- Spotlight on SIG API Machinery

- Kubernetes Removals and Major Changes In v1.31

- Spotlight on SIG Node

- 10 Years of Kubernetes

- Completing the largest migration in Kubernetes history

- Gateway API v1.1: Service mesh, GRPCRoute, and a whole lot more

- Container Runtime Interface streaming explained

- Kubernetes 1.30: Preventing unauthorized volume mode conversion moves to GA

- Kubernetes 1.30: Multi-Webhook and Modular Authorization Made Much Easier

- Kubernetes 1.30: Structured Authentication Configuration Moves to Beta

- Kubernetes 1.30: Validating Admission Policy Is Generally Available

- Kubernetes 1.30: Read-only volume mounts can be finally literally read-only

- Kubernetes 1.30: Beta Support For Pods With User Namespaces

- Kubernetes v1.30: Uwubernetes

- Spotlight on SIG Architecture: Code Organization

- DIY: Create Your Own Cloud with Kubernetes (Part 3)

- DIY: Create Your Own Cloud with Kubernetes (Part 2)

- DIY: Create Your Own Cloud with Kubernetes (Part 1)

- Introducing the Windows Operational Readiness Specification

- A Peek at Kubernetes v1.30

- CRI-O: Applying seccomp profiles from OCI registries

- Spotlight on SIG Cloud Provider

- A look into the Kubernetes Book Club

- Image Filesystem: Configuring Kubernetes to store containers on a separate filesystem

- Spotlight on SIG Release (Release Team Subproject)

- Contextual logging in Kubernetes 1.29: Better troubleshooting and enhanced logging

- Kubernetes 1.29: Decoupling taint manager from node lifecycle controller

- Kubernetes 1.29: PodReadyToStartContainers Condition Moves to Beta

- Kubernetes 1.29: New (alpha) Feature, Load Balancer IP Mode for Services

- Kubernetes 1.29: Single Pod Access Mode for PersistentVolumes Graduates to Stable

- Kubernetes 1.29: CSI Storage Resizing Authenticated and Generally Available in v1.29

- Kubernetes 1.29: VolumeAttributesClass for Volume Modification

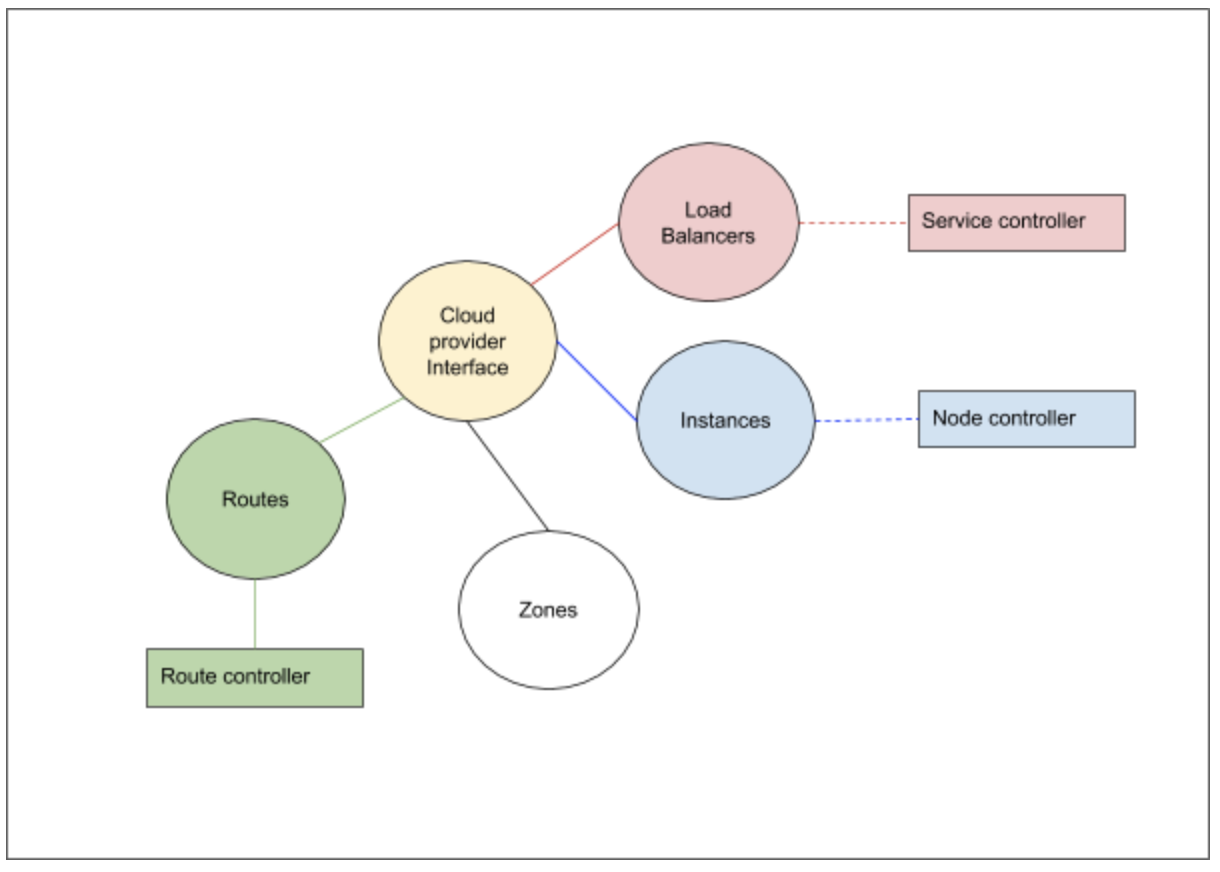

- Kubernetes 1.29: Cloud Provider Integrations Are Now Separate Components

- Kubernetes v1.29: Mandala

- New Experimental Features in Gateway API v1.0

- Spotlight on SIG Testing

- Kubernetes Removals, Deprecations, and Major Changes in Kubernetes 1.29

- The Case for Kubernetes Resource Limits: Predictability vs. Efficiency

- Introducing SIG etcd

- Kubernetes Contributor Summit: Behind-the-scenes

- Spotlight on SIG Architecture: Production Readiness

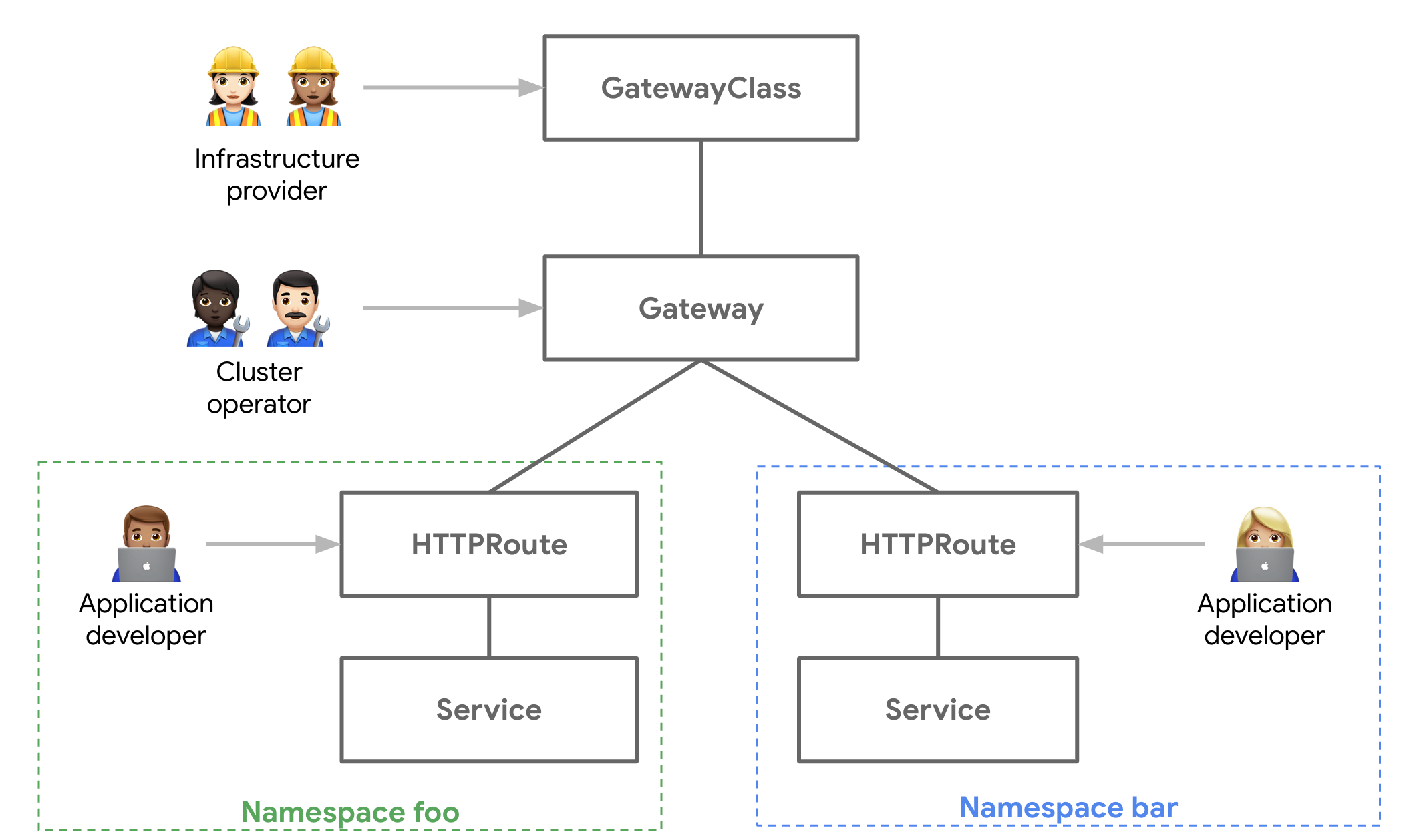

- Gateway API v1.0: GA Release

- Introducing ingress2gateway; Simplifying Upgrades to Gateway API

- Plants, process and parties: the Kubernetes 1.28 release interview

- PersistentVolume Last Phase Transition Time in Kubernetes

- A Quick Recap of 2023 China Kubernetes Contributor Summit

- Bootstrap an Air Gapped Cluster With Kubeadm

- CRI-O is moving towards pkgs.k8s.io

- Spotlight on SIG Architecture: Conformance

- Announcing the 2023 Steering Committee Election Results

- Happy 7th Birthday kubeadm!

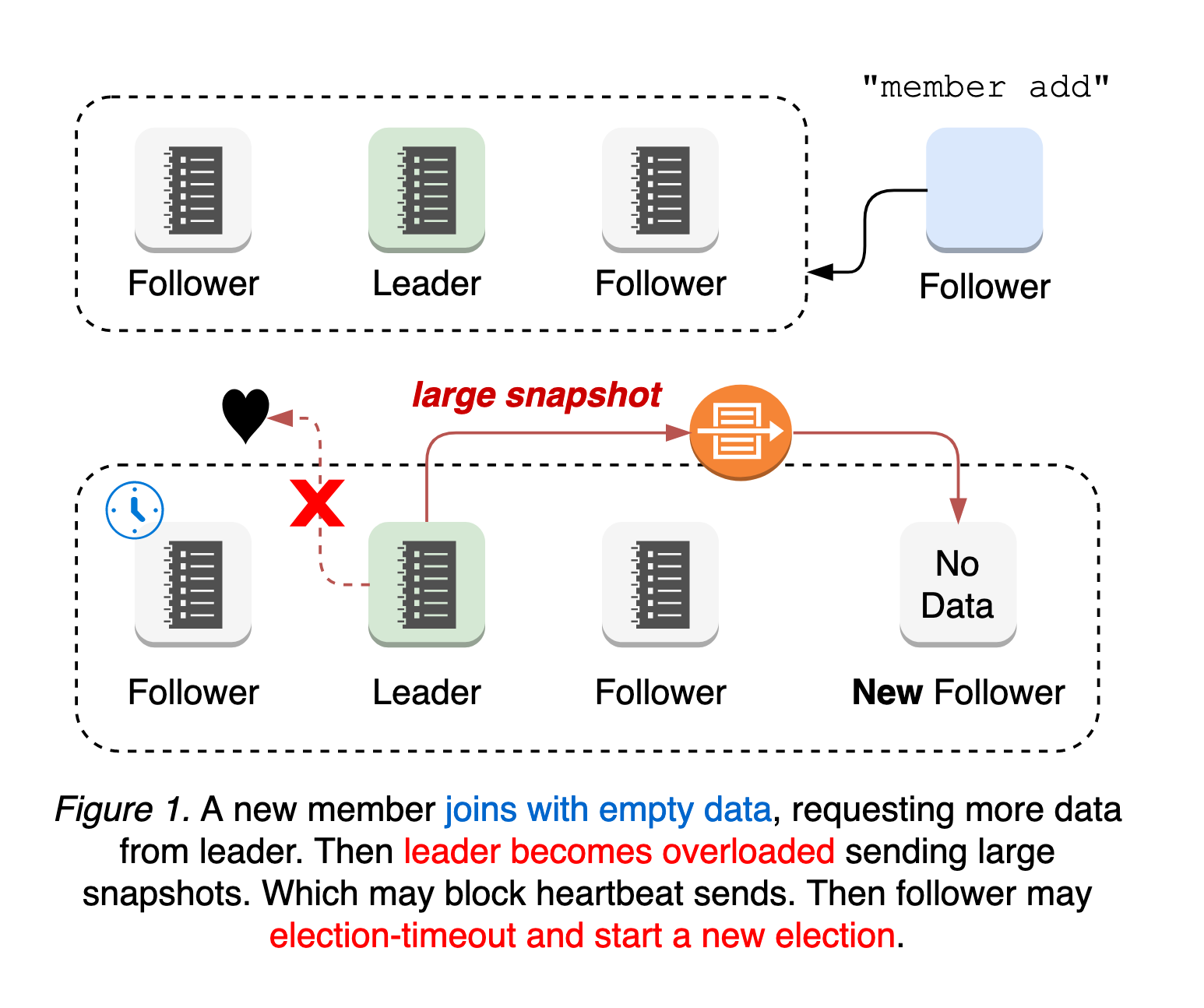

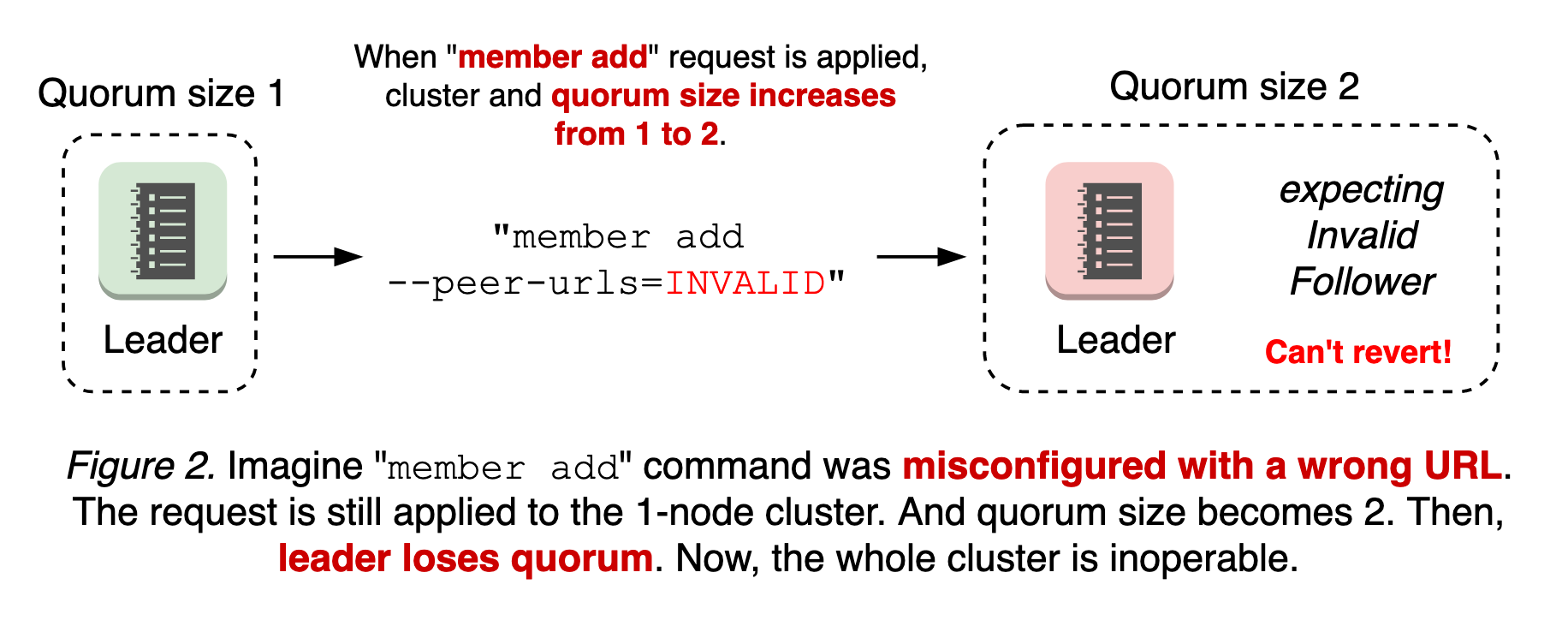

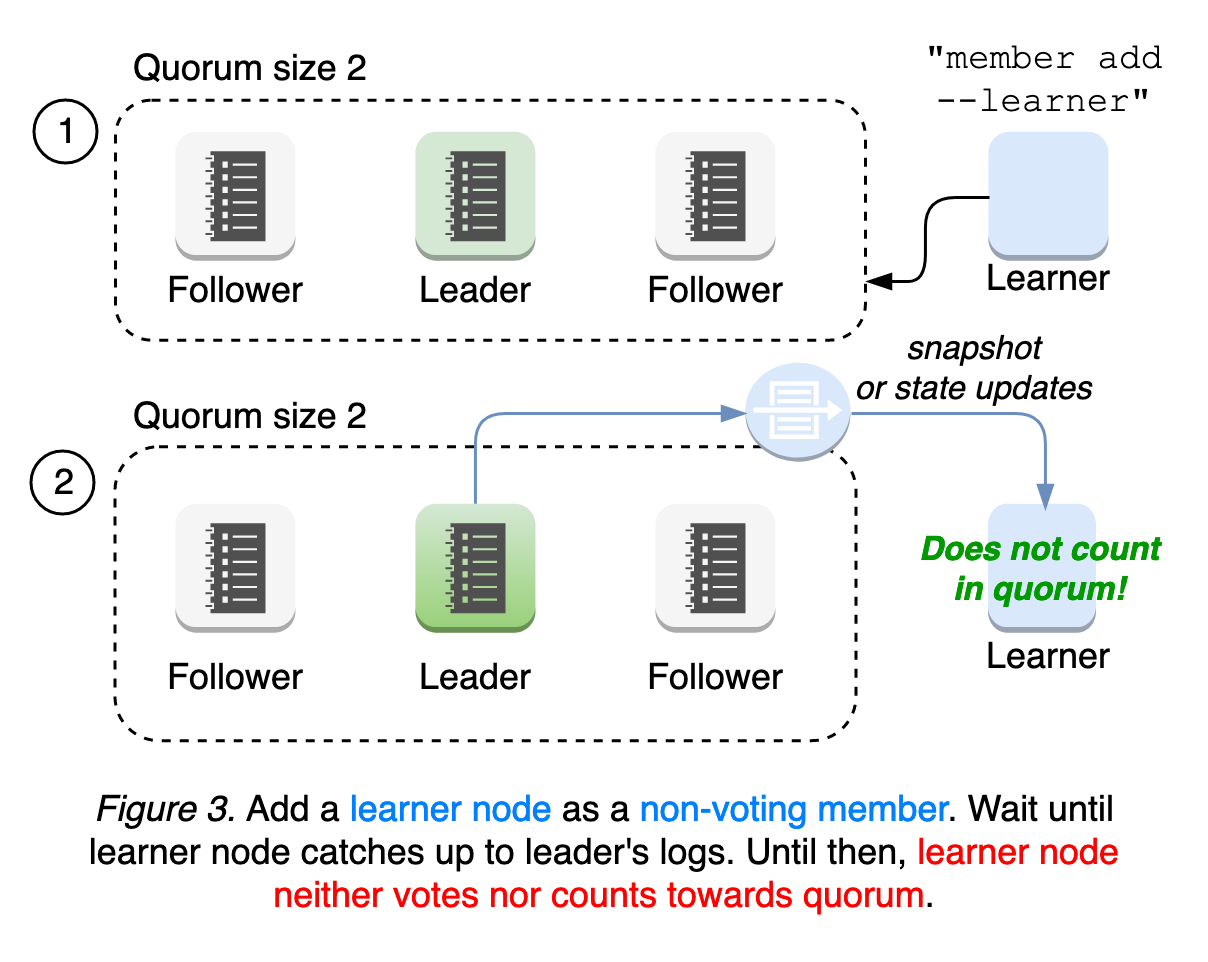

- kubeadm: Use etcd Learner to Join a Control Plane Node Safely

- User Namespaces: Now Supports Running Stateful Pods in Alpha!

- Comparing Local Kubernetes Development Tools: Telepresence, Gefyra, and mirrord

- Kubernetes Legacy Package Repositories Will Be Frozen On September 13, 2023

- Gateway API v0.8.0: Introducing Service Mesh Support

- Kubernetes 1.28: A New (alpha) Mechanism For Safer Cluster Upgrades

- Kubernetes v1.28: Introducing native sidecar containers

- Kubernetes 1.28: Beta support for using swap on Linux

- Kubernetes 1.28: Node podresources API Graduates to GA

- Kubernetes 1.28: Improved failure handling for Jobs

- Kubernetes v1.28: Retroactive Default StorageClass move to GA

- Kubernetes 1.28: Non-Graceful Node Shutdown Moves to GA

- pkgs.k8s.io: Introducing Kubernetes Community-Owned Package Repositories

- Kubernetes v1.28: Planternetes

- Spotlight on SIG ContribEx

- Spotlight on SIG CLI

- Confidential Kubernetes: Use Confidential Virtual Machines and Enclaves to improve your cluster security

- Verifying Container Image Signatures Within CRI Runtimes

- dl.k8s.io to adopt a Content Delivery Network

- Using OCI artifacts to distribute security profiles for seccomp, SELinux and AppArmor

- Having fun with seccomp profiles on the edge

- Kubernetes 1.27: KMS V2 Moves to Beta

- Kubernetes 1.27: updates on speeding up Pod startup

- Kubernetes 1.27: In-place Resource Resize for Kubernetes Pods (alpha)

- Kubernetes 1.27: Avoid Collisions Assigning Ports to NodePort Services

- Kubernetes 1.27: Safer, More Performant Pruning in kubectl apply

- Kubernetes 1.27: Introducing An API For Volume Group Snapshots

- Kubernetes 1.27: Quality-of-Service for Memory Resources (alpha)

- Kubernetes 1.27: StatefulSet PVC Auto-Deletion (beta)

- Kubernetes 1.27: HorizontalPodAutoscaler ContainerResource type metric moves to beta

- Kubernetes 1.27: StatefulSet Start Ordinal Simplifies Migration

- Updates to the Auto-refreshing Official CVE Feed

- Kubernetes 1.27: Server Side Field Validation and OpenAPI V3 move to GA

- Kubernetes 1.27: Query Node Logs Using The Kubelet API

- Kubernetes 1.27: Single Pod Access Mode for PersistentVolumes Graduates to Beta

- Kubernetes 1.27: Efficient SELinux volume relabeling (Beta)

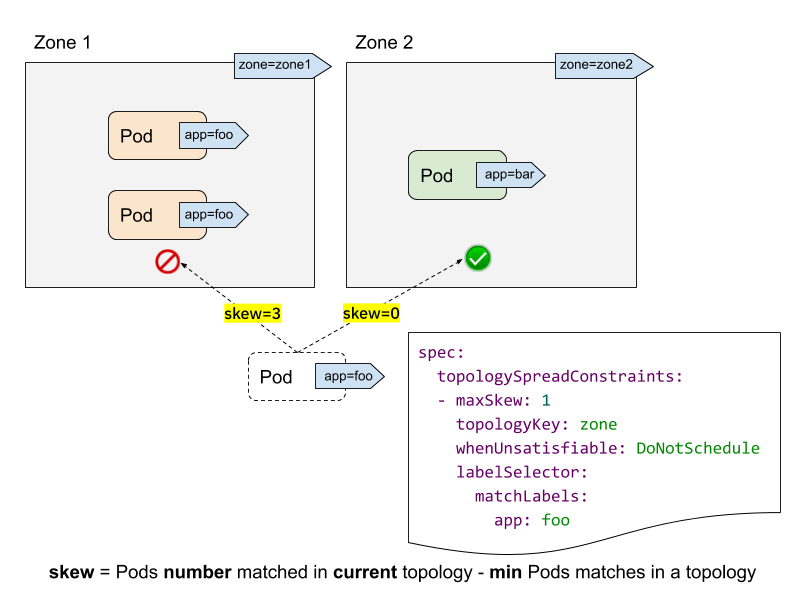

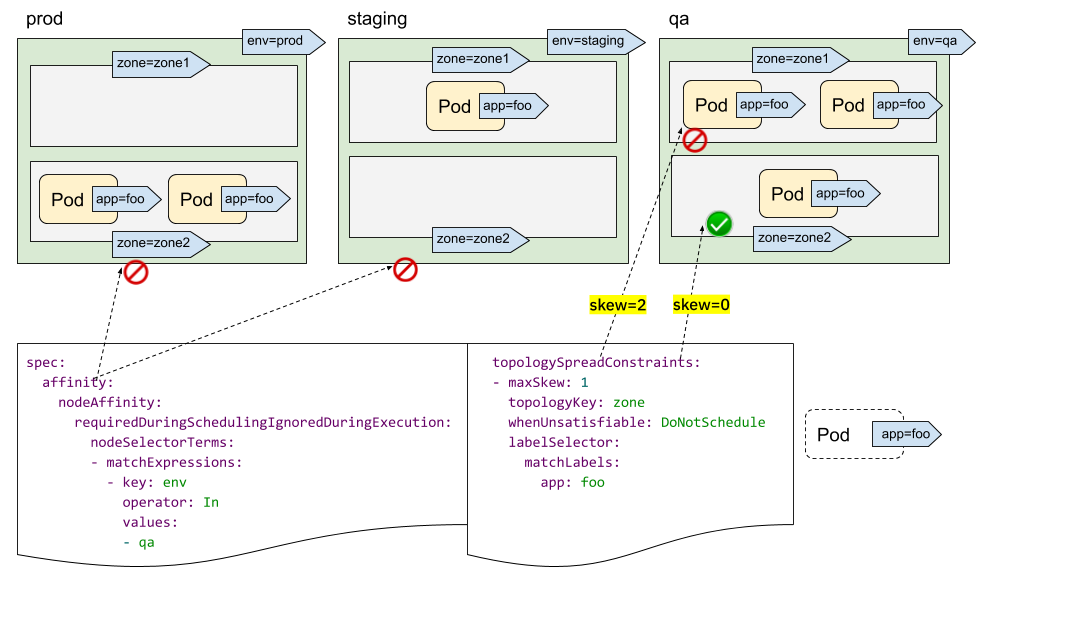

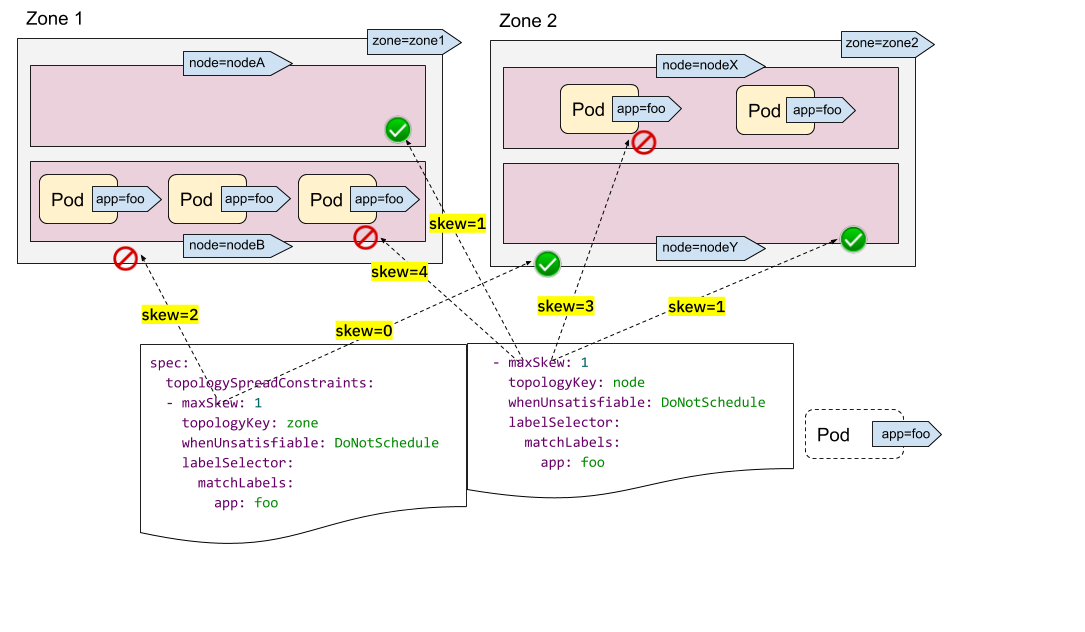

- Kubernetes 1.27: More fine-grained pod topology spread policies reached beta

- Kubernetes v1.27: Chill Vibes

- Keeping Kubernetes Secure with Updated Go Versions

- Kubernetes Validating Admission Policies: A Practical Example

- Kubernetes Removals and Major Changes In v1.27

- k8s.gcr.io Redirect to registry.k8s.io - What You Need to Know

- Forensic container analysis

- Introducing KWOK: Kubernetes WithOut Kubelet

- Free Katacoda Kubernetes Tutorials Are Shutting Down

- k8s.gcr.io Image Registry Will Be Frozen From the 3rd of April 2023

- Spotlight on SIG Instrumentation

- Consider All Microservices Vulnerable — And Monitor Their Behavior

- Protect Your Mission-Critical Pods From Eviction With PriorityClass

- Kubernetes 1.26: Eviction policy for unhealthy pods guarded by PodDisruptionBudgets

- Kubernetes v1.26: Retroactive Default StorageClass

- Kubernetes v1.26: Alpha support for cross-namespace storage data sources

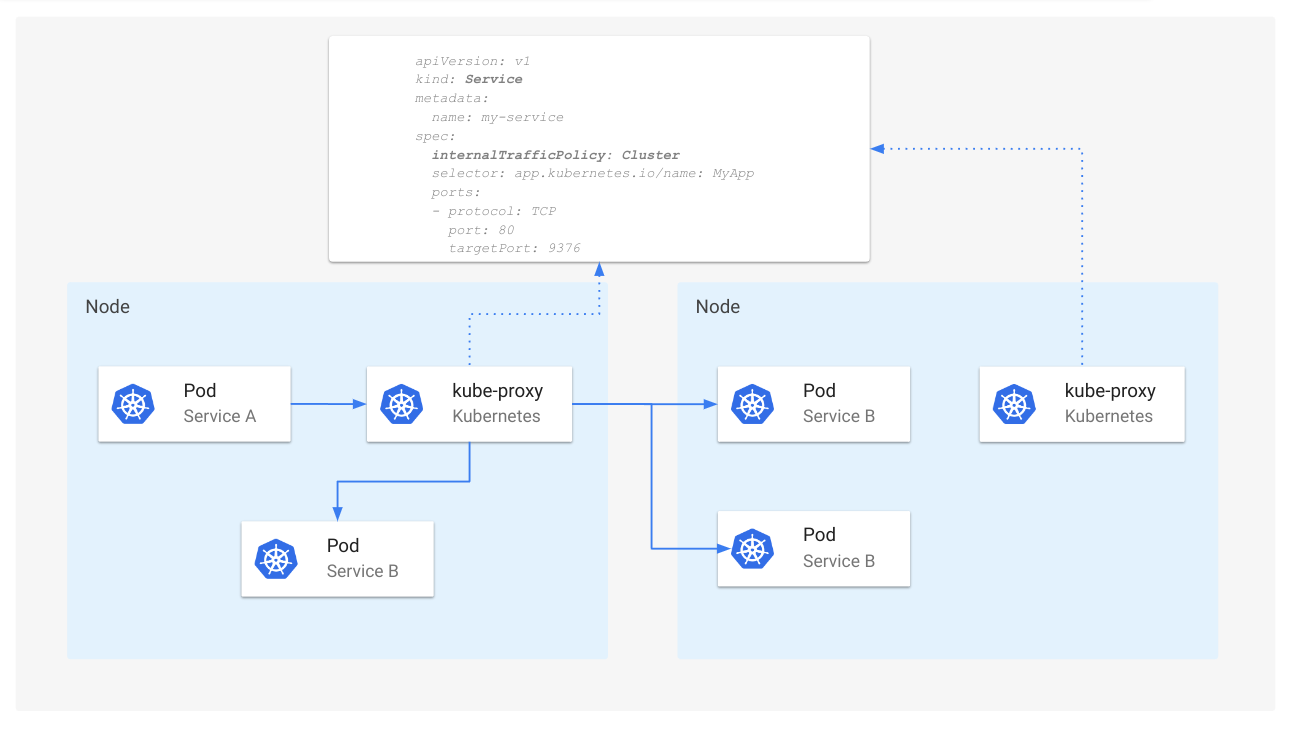

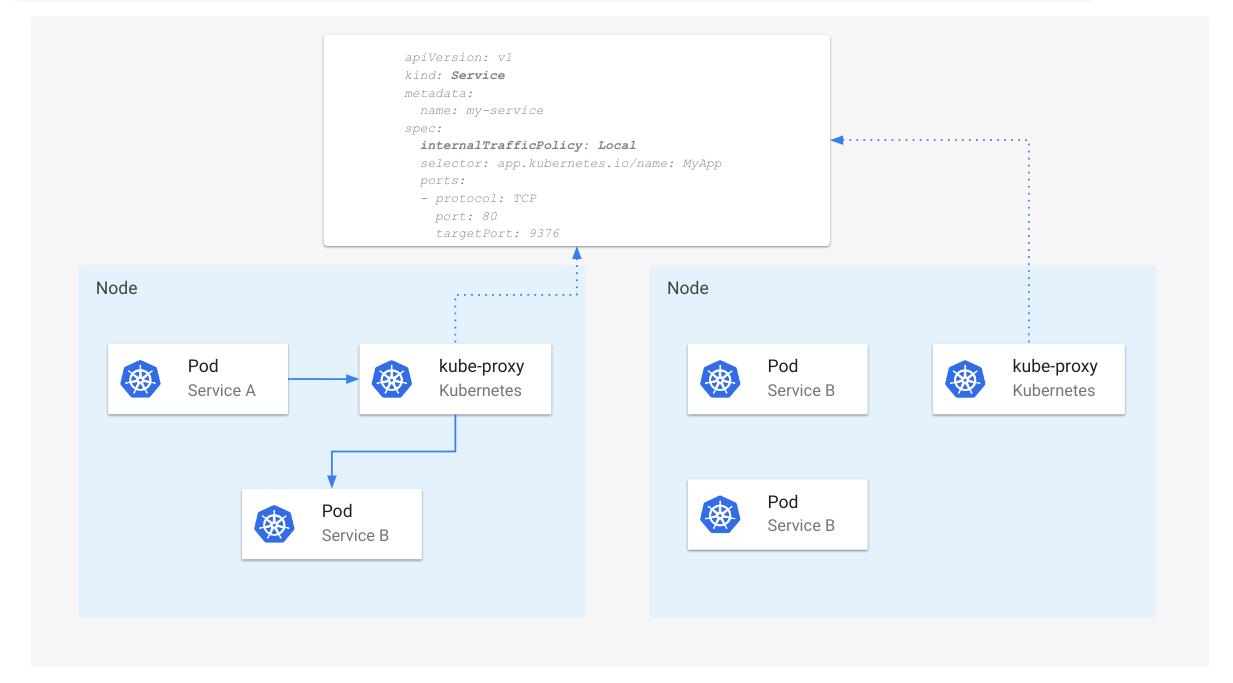

- Kubernetes v1.26: Advancements in Kubernetes Traffic Engineering

- Kubernetes 1.26: Job Tracking, to Support Massively Parallel Batch Workloads, Is Generally Available

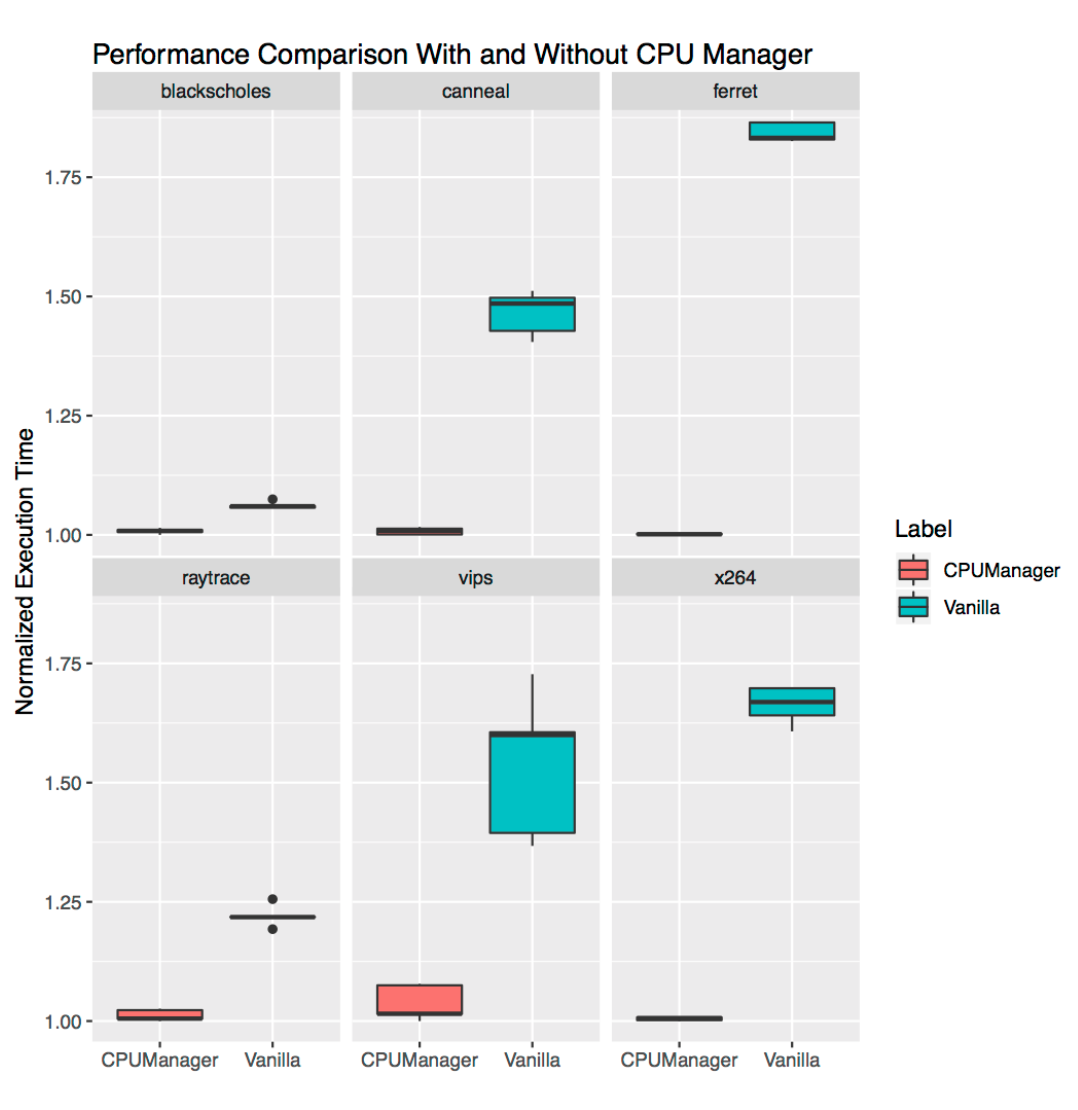

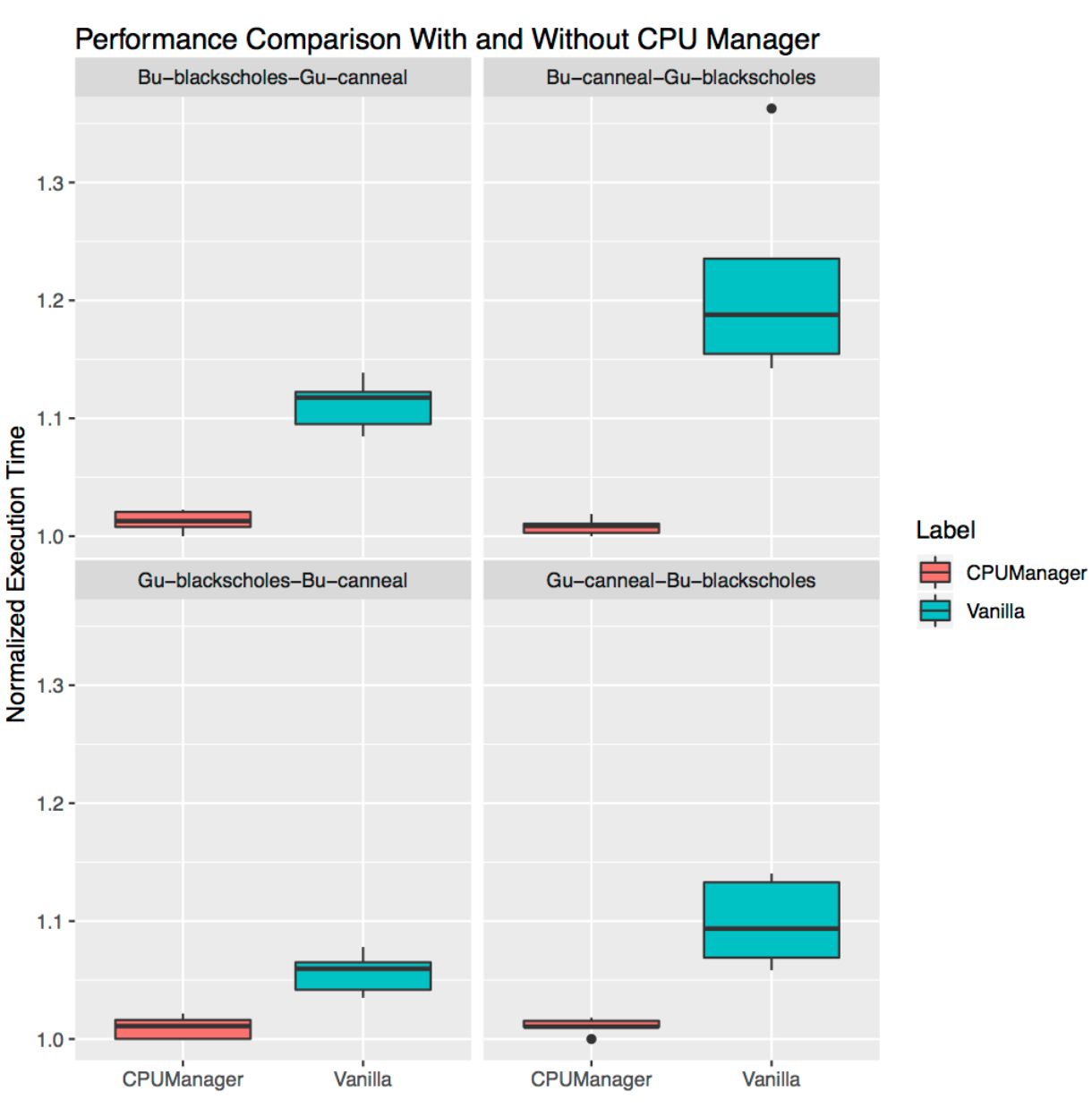

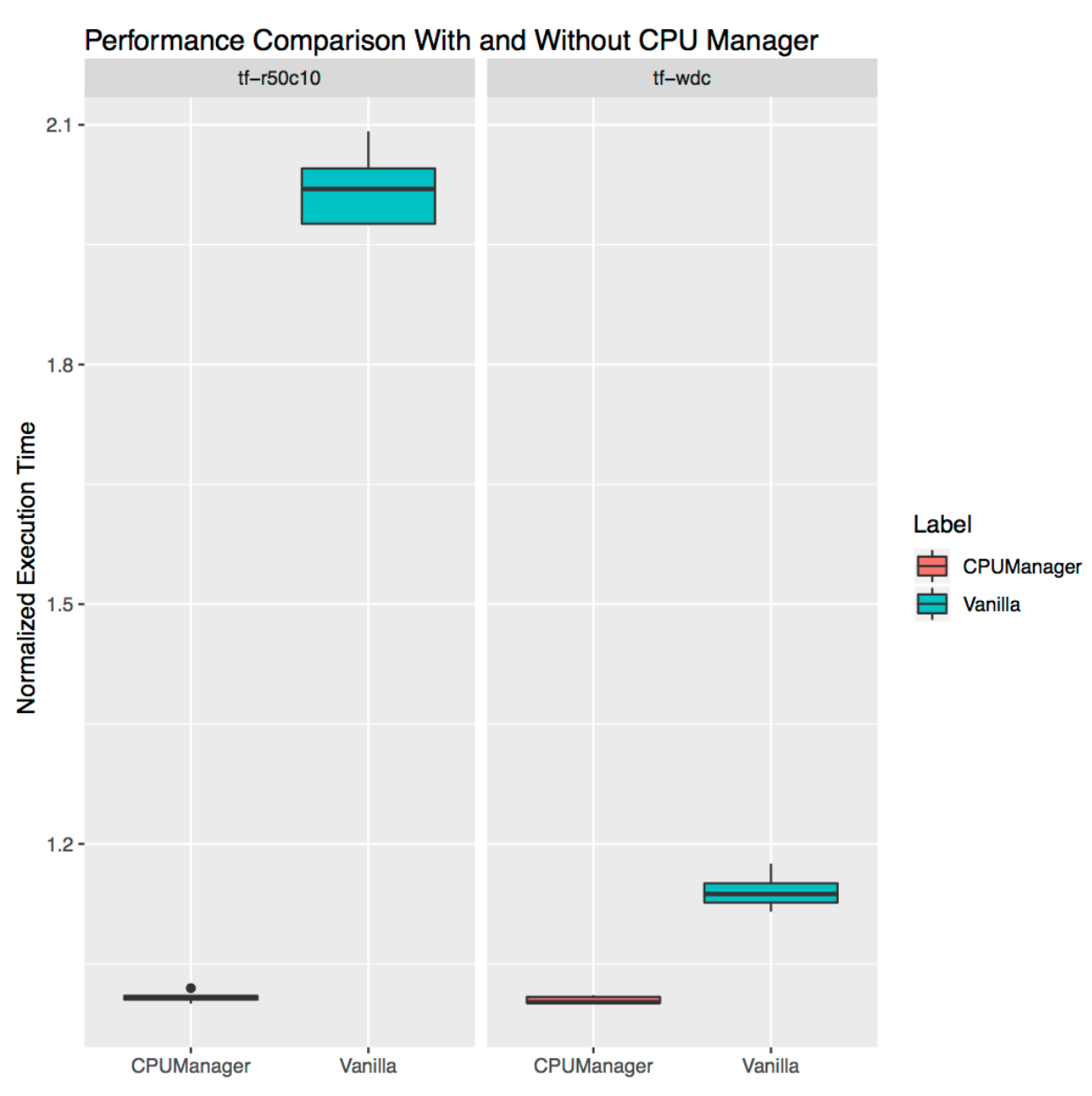

- Kubernetes v1.26: CPUManager goes GA

- Kubernetes 1.26: Pod Scheduling Readiness

- Kubernetes 1.26: Support for Passing Pod fsGroup to CSI Drivers At Mount Time

- Kubernetes v1.26: GA Support for Kubelet Credential Providers

- Kubernetes 1.26: Introducing Validating Admission Policies

- Kubernetes 1.26: Device Manager graduates to GA

- Kubernetes 1.26: Non-Graceful Node Shutdown Moves to Beta

- Kubernetes 1.26: Alpha API For Dynamic Resource Allocation

- Kubernetes 1.26: Windows HostProcess Containers Are Generally Available

- Kubernetes 1.26: We're now signing our binary release artifacts!

- Kubernetes v1.26: Electrifying

- Forensic container checkpointing in Kubernetes

- Finding suspicious syscalls with the seccomp notifier

- Boosting Kubernetes container runtime observability with OpenTelemetry

- registry.k8s.io: faster, cheaper and Generally Available (GA)

- Kubernetes Removals, Deprecations, and Major Changes in 1.26

- Live and let live with Kluctl and Server Side Apply

- Server Side Apply Is Great And You Should Be Using It

- Current State: 2019 Third Party Security Audit of Kubernetes

- Introducing Kueue

- Kubernetes 1.25: alpha support for running Pods with user namespaces

- Enforce CRD Immutability with CEL Transition Rules

- Kubernetes 1.25: Kubernetes In-Tree to CSI Volume Migration Status Update

- Kubernetes 1.25: CustomResourceDefinition Validation Rules Graduate to Beta

- Kubernetes 1.25: Use Secrets for Node-Driven Expansion of CSI Volumes

- Kubernetes 1.25: Local Storage Capacity Isolation Reaches GA

- Kubernetes 1.25: Two Features for Apps Rollouts Graduate to Stable

- Kubernetes 1.25: PodHasNetwork Condition for Pods

- Announcing the Auto-refreshing Official Kubernetes CVE Feed

- Kubernetes 1.25: KMS V2 Improvements

- Kubernetes’s IPTables Chains Are Not API

- Introducing COSI: Object Storage Management using Kubernetes APIs

- Kubernetes 1.25: cgroup v2 graduates to GA

- Kubernetes 1.25: CSI Inline Volumes have graduated to GA

- Kubernetes v1.25: Pod Security Admission Controller in Stable

- PodSecurityPolicy: The Historical Context

- Kubernetes v1.25: Combiner

- Spotlight on SIG Storage

- Meet Our Contributors - APAC (China region)

- Enhancing Kubernetes one KEP at a Time

- Kubernetes Removals and Major Changes In 1.25

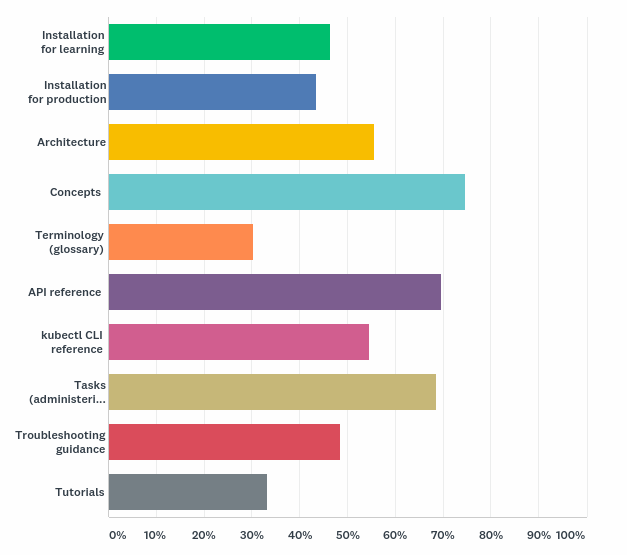

- Spotlight on SIG Docs

- Kubernetes Gateway API Graduates to Beta

- Annual Report Summary 2021

- Kubernetes 1.24: Maximum Unavailable Replicas for StatefulSet

- Contextual Logging in Kubernetes 1.24

- Kubernetes 1.24: Avoid Collisions Assigning IP Addresses to Services

- Kubernetes 1.24: Introducing Non-Graceful Node Shutdown Alpha

- Kubernetes 1.24: Prevent unauthorised volume mode conversion

- Kubernetes 1.24: Volume Populators Graduate to Beta

- Kubernetes 1.24: gRPC container probes in beta

- Kubernetes 1.24: Storage Capacity Tracking Now Generally Available

- Kubernetes 1.24: Volume Expansion Now A Stable Feature

- Dockershim: The Historical Context

- Kubernetes 1.24: Stargazer

- Increasing the security bar in Ingress-NGINX v1.2.0

- Kubernetes Removals and Deprecations In 1.24

- Is Your Cluster Ready for v1.24?

- Meet Our Contributors - APAC (Aus-NZ region)

- Updated: Dockershim Removal FAQ

- SIG Node CI Subproject Celebrates Two Years of Test Improvements

- Spotlight on SIG Multicluster

- Securing Admission Controllers

- Meet Our Contributors - APAC (India region)

- Kubernetes is Moving on From Dockershim: Commitments and Next Steps

- Kubernetes-in-Kubernetes and the WEDOS PXE bootable server farm

- Using Admission Controllers to Detect Container Drift at Runtime

- What's new in Security Profiles Operator v0.4.0

- Kubernetes 1.23: StatefulSet PVC Auto-Deletion (alpha)

- Kubernetes 1.23: Prevent PersistentVolume leaks when deleting out of order

- Kubernetes 1.23: Kubernetes In-Tree to CSI Volume Migration Status Update

- Kubernetes 1.23: Pod Security Graduates to Beta

- Kubernetes 1.23: Dual-stack IPv4/IPv6 Networking Reaches GA

- Kubernetes 1.23: The Next Frontier

- Contribution, containers and cricket: the Kubernetes 1.22 release interview

- Quality-of-Service for Memory Resources

- Dockershim removal is coming. Are you ready?

- Non-root Containers And Devices

- Announcing the 2021 Steering Committee Election Results

- Use KPNG to Write Specialized kube-proxiers

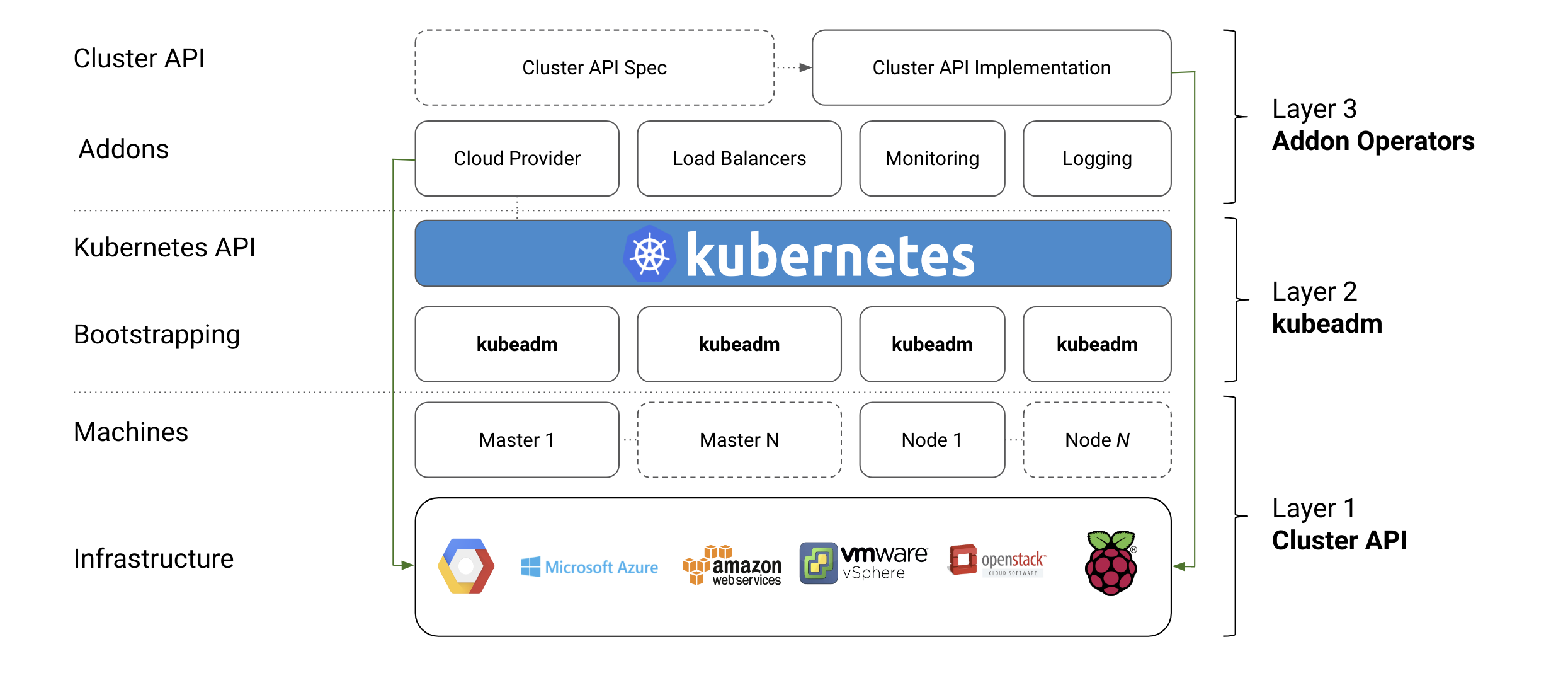

- Introducing ClusterClass and Managed Topologies in Cluster API

- A Closer Look at NSA/CISA Kubernetes Hardening Guidance

- How to Handle Data Duplication in Data-Heavy Kubernetes Environments

- Spotlight on SIG Node

- Introducing Single Pod Access Mode for PersistentVolumes

- Alpha in Kubernetes v1.22: API Server Tracing

- Kubernetes 1.22: A New Design for Volume Populators

- Minimum Ready Seconds for StatefulSets

- Enable seccomp for all workloads with a new v1.22 alpha feature

- Alpha in v1.22: Windows HostProcess Containers

- Kubernetes Memory Manager moves to beta

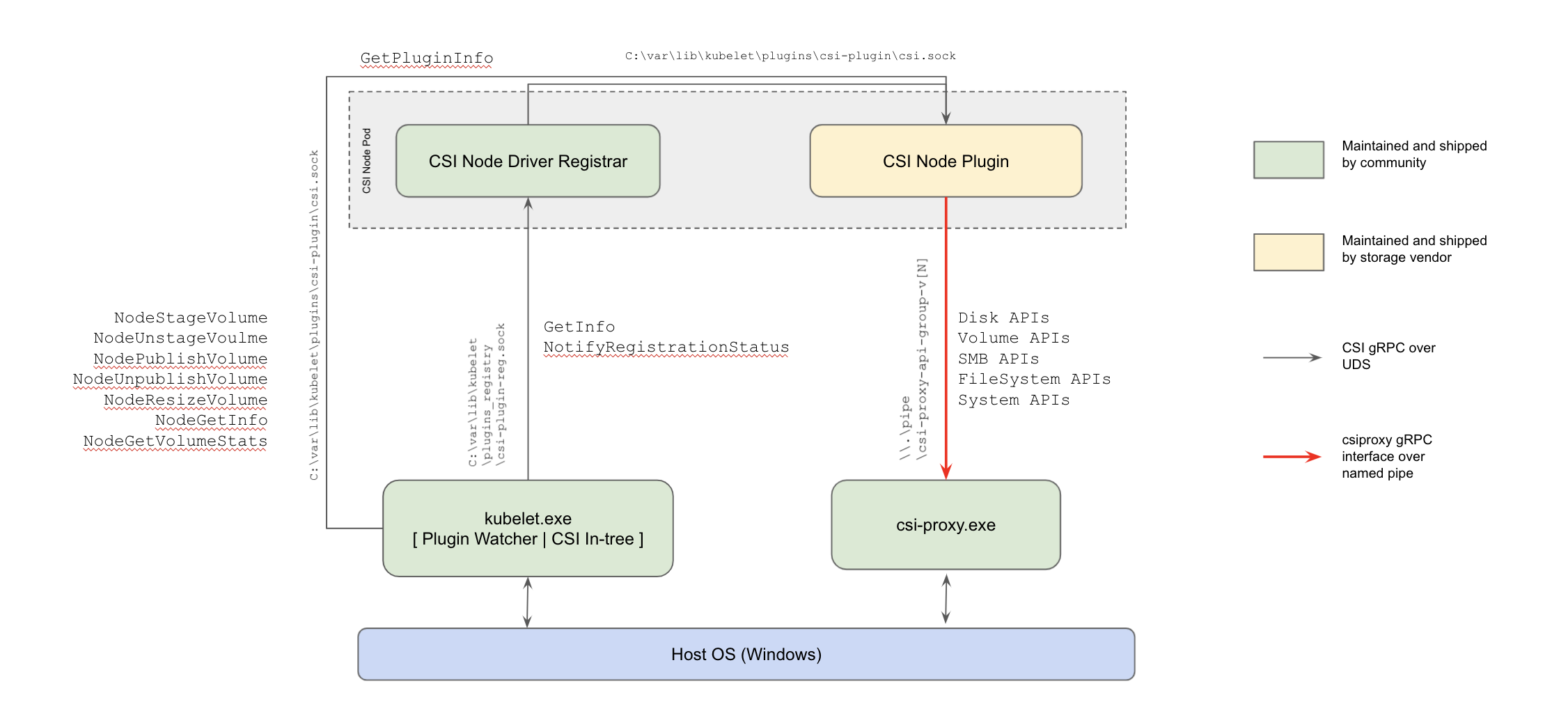

- Kubernetes 1.22: CSI Windows Support (with CSI Proxy) reaches GA

- New in Kubernetes v1.22: alpha support for using swap memory

- Kubernetes 1.22: Server Side Apply moves to GA

- Kubernetes 1.22: Reaching New Peaks

- Roorkee robots, releases and racing: the Kubernetes 1.21 release interview

- Updating NGINX-Ingress to use the stable Ingress API

- Kubernetes Release Cadence Change: Here’s What You Need To Know

- Spotlight on SIG Usability

- Kubernetes API and Feature Removals In 1.22: Here’s What You Need To Know

- Announcing Kubernetes Community Group Annual Reports

- Writing a Controller for Pod Labels

- Using Finalizers to Control Deletion

- Kubernetes 1.21: Metrics Stability hits GA

- Evolving Kubernetes networking with the Gateway API

- Graceful Node Shutdown Goes Beta

- Annotating Kubernetes Services for Humans

- Defining Network Policy Conformance for Container Network Interface (CNI) providers

- Introducing Indexed Jobs

- Volume Health Monitoring Alpha Update

- Three Tenancy Models For Kubernetes

- Local Storage: Storage Capacity Tracking, Distributed Provisioning and Generic Ephemeral Volumes hit Beta

- kube-state-metrics goes v2.0

- Introducing Suspended Jobs

- Kubernetes 1.21: CronJob Reaches GA

- Kubernetes 1.21: Power to the Community

- PodSecurityPolicy Deprecation: Past, Present, and Future

- The Evolution of Kubernetes Dashboard

- A Custom Kubernetes Scheduler to Orchestrate Highly Available Applications

- Kubernetes 1.20: Pod Impersonation and Short-lived Volumes in CSI Drivers

- Third Party Device Metrics Reaches GA

- Kubernetes 1.20: Granular Control of Volume Permission Changes

- Kubernetes 1.20: Kubernetes Volume Snapshot Moves to GA

- Kubernetes 1.20: The Raddest Release

- GSoD 2020: Improving the API Reference Experience

- Dockershim Deprecation FAQ

- Don't Panic: Kubernetes and Docker

- Cloud native security for your clusters

- Remembering Dan Kohn

- Announcing the 2020 Steering Committee Election Results

- Contributing to the Development Guide

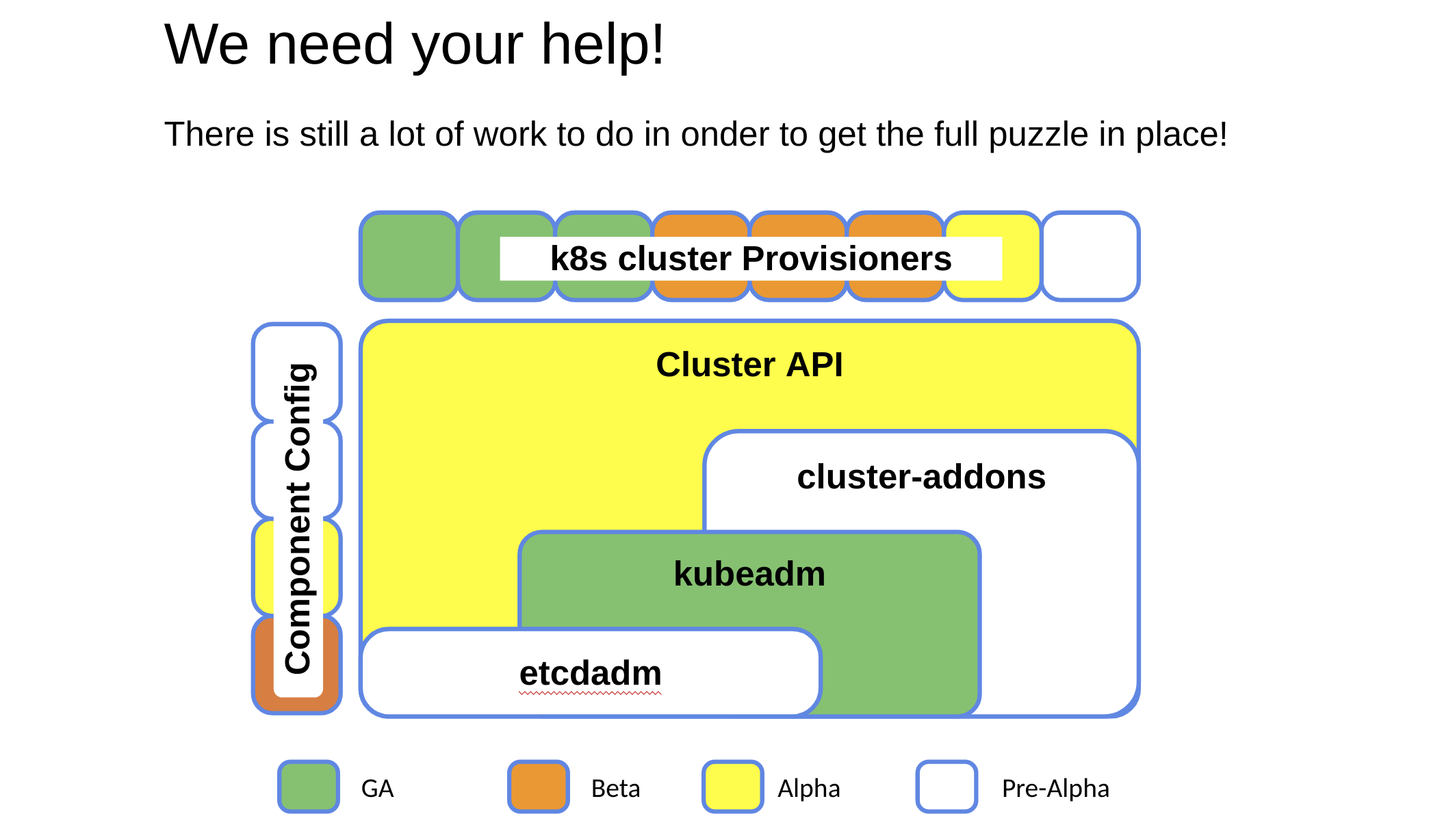

- GSoC 2020 - Building operators for cluster addons

- Introducing Structured Logs

- Warning: Helpful Warnings Ahead

- Scaling Kubernetes Networking With EndpointSlices

- Ephemeral volumes with storage capacity tracking: EmptyDir on steroids

- Increasing the Kubernetes Support Window to One Year

- Kubernetes 1.19: Accentuate the Paw-sitive

- Moving Forward From Beta

- Introducing Hierarchical Namespaces

- Physics, politics and Pull Requests: the Kubernetes 1.18 release interview

- Music and math: the Kubernetes 1.17 release interview

- SIG-Windows Spotlight

- Working with Terraform and Kubernetes

- A Better Docs UX With Docsy

- Supporting the Evolving Ingress Specification in Kubernetes 1.18

- K8s KPIs with Kuberhealthy

- My exciting journey into Kubernetes’ history

- An Introduction to the K8s-Infrastructure Working Group

- WSL+Docker: Kubernetes on the Windows Desktop

- How Docs Handle Third Party and Dual Sourced Content

- Introducing PodTopologySpread

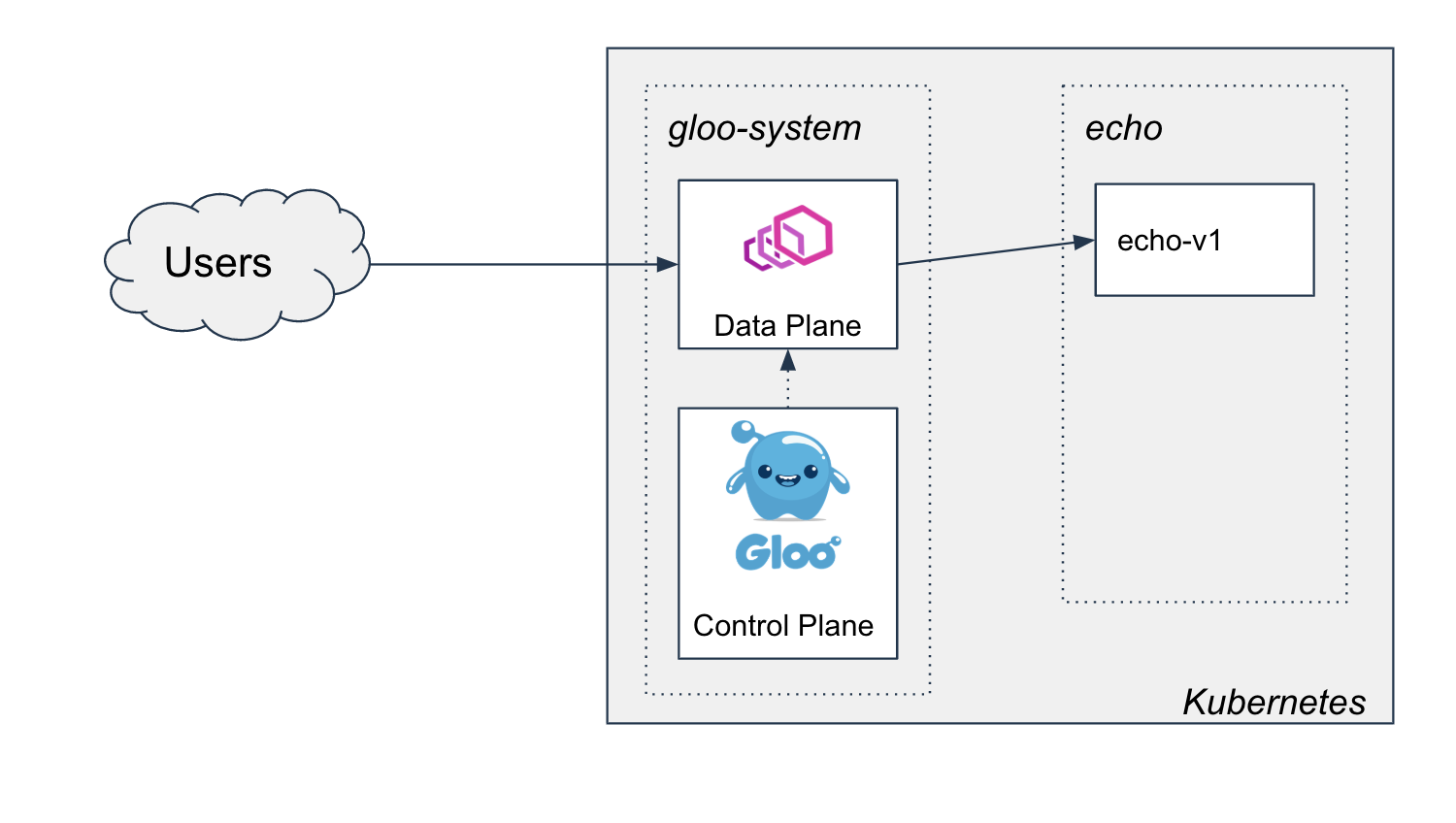

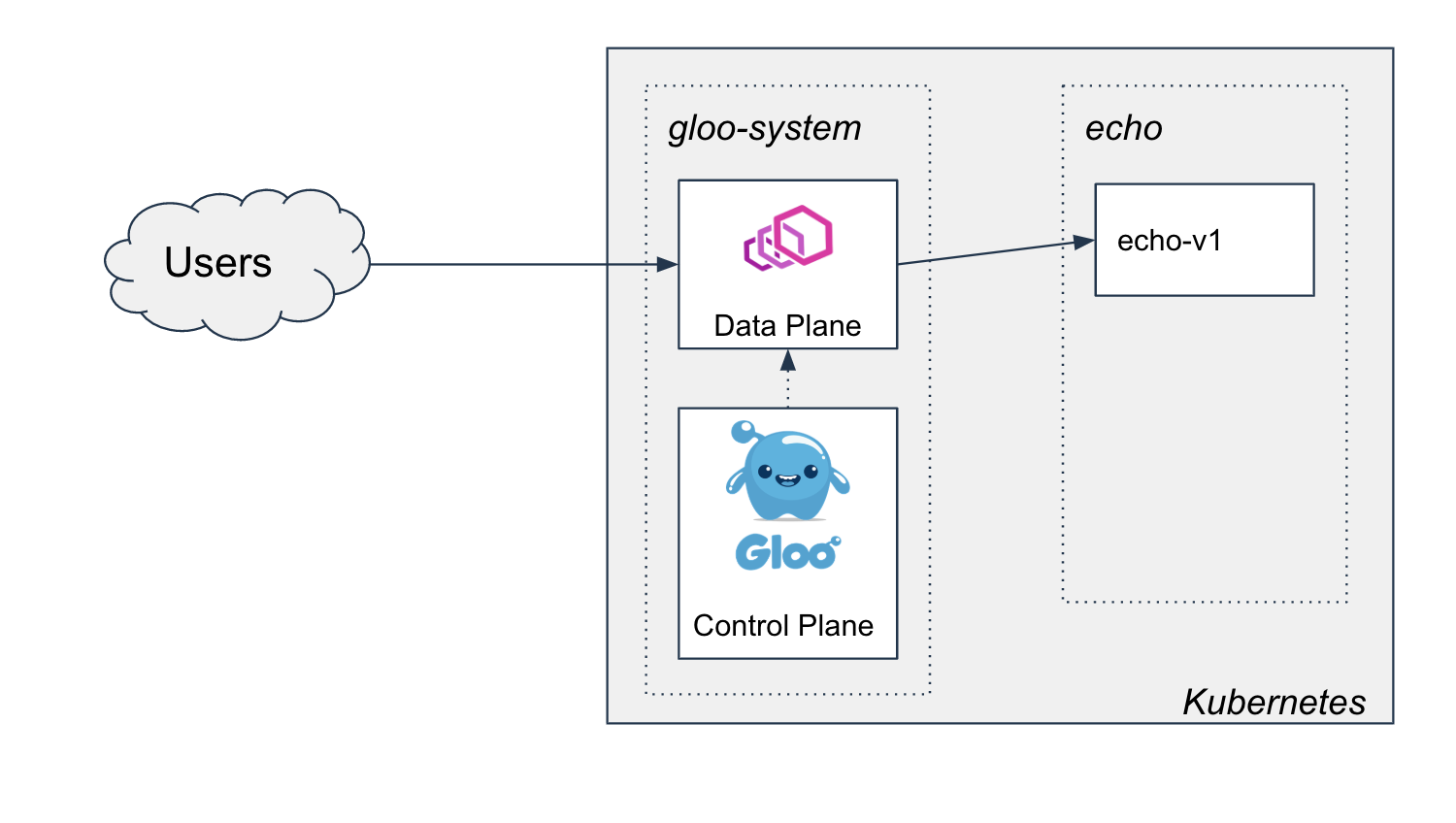

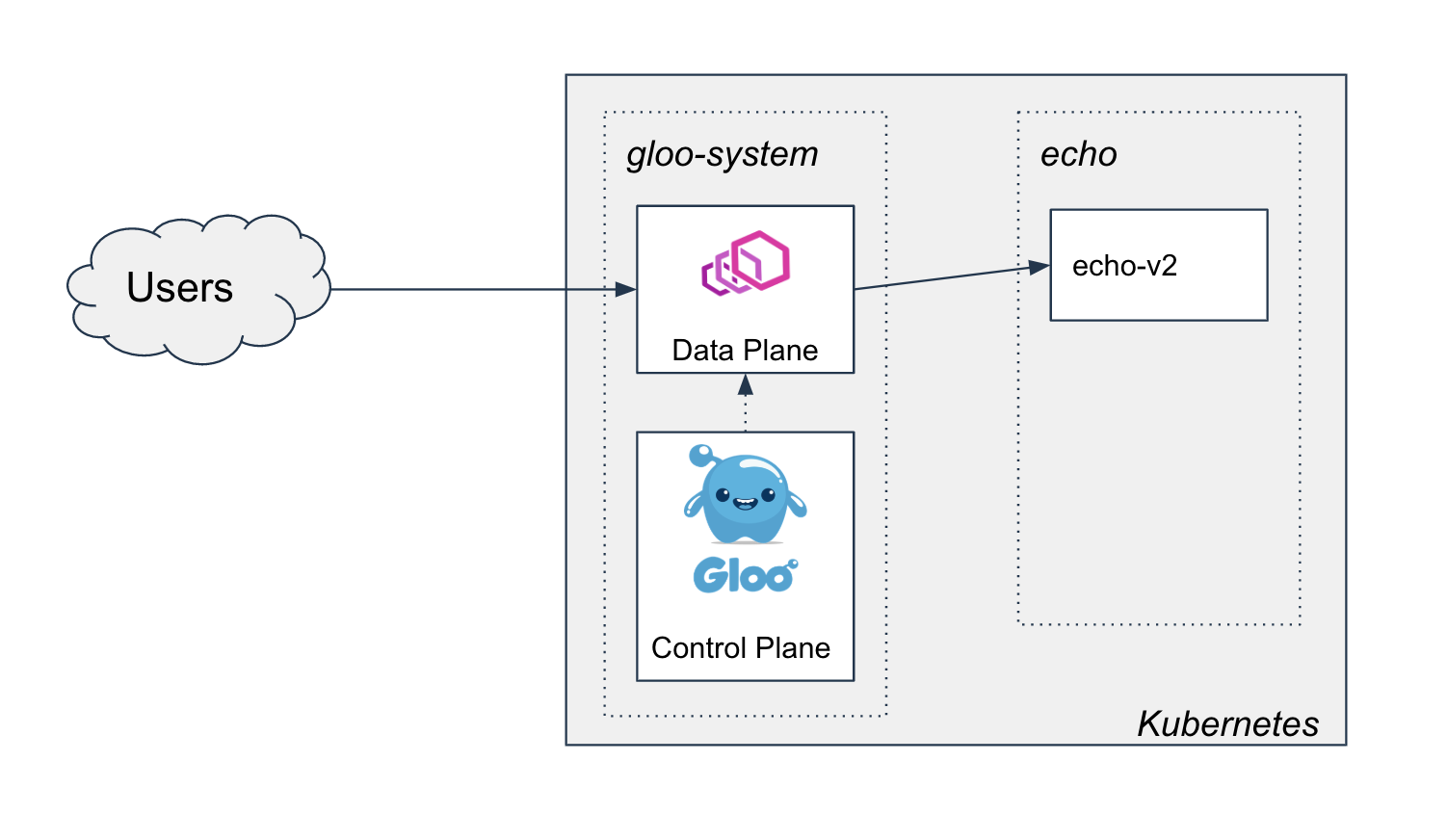

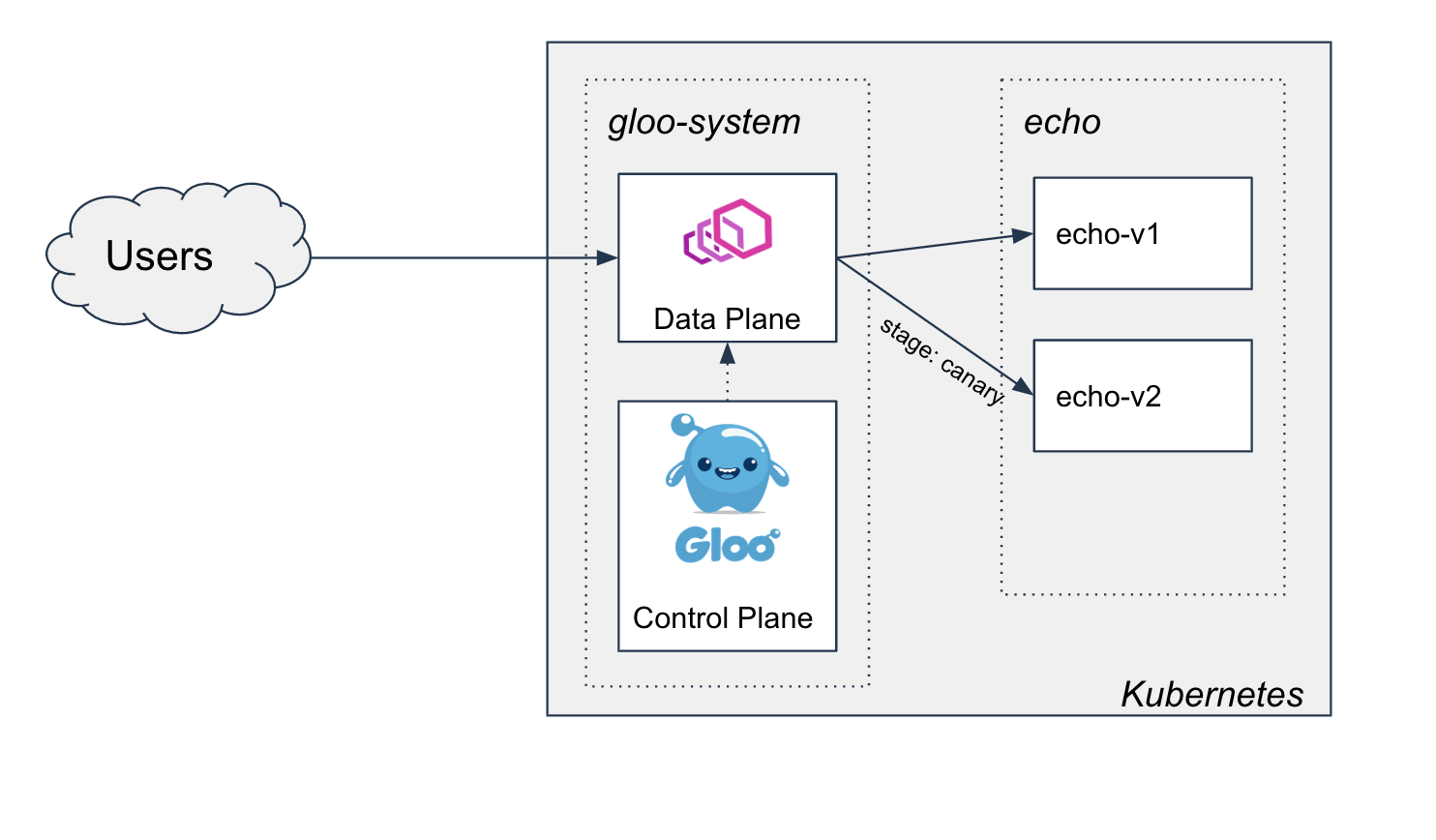

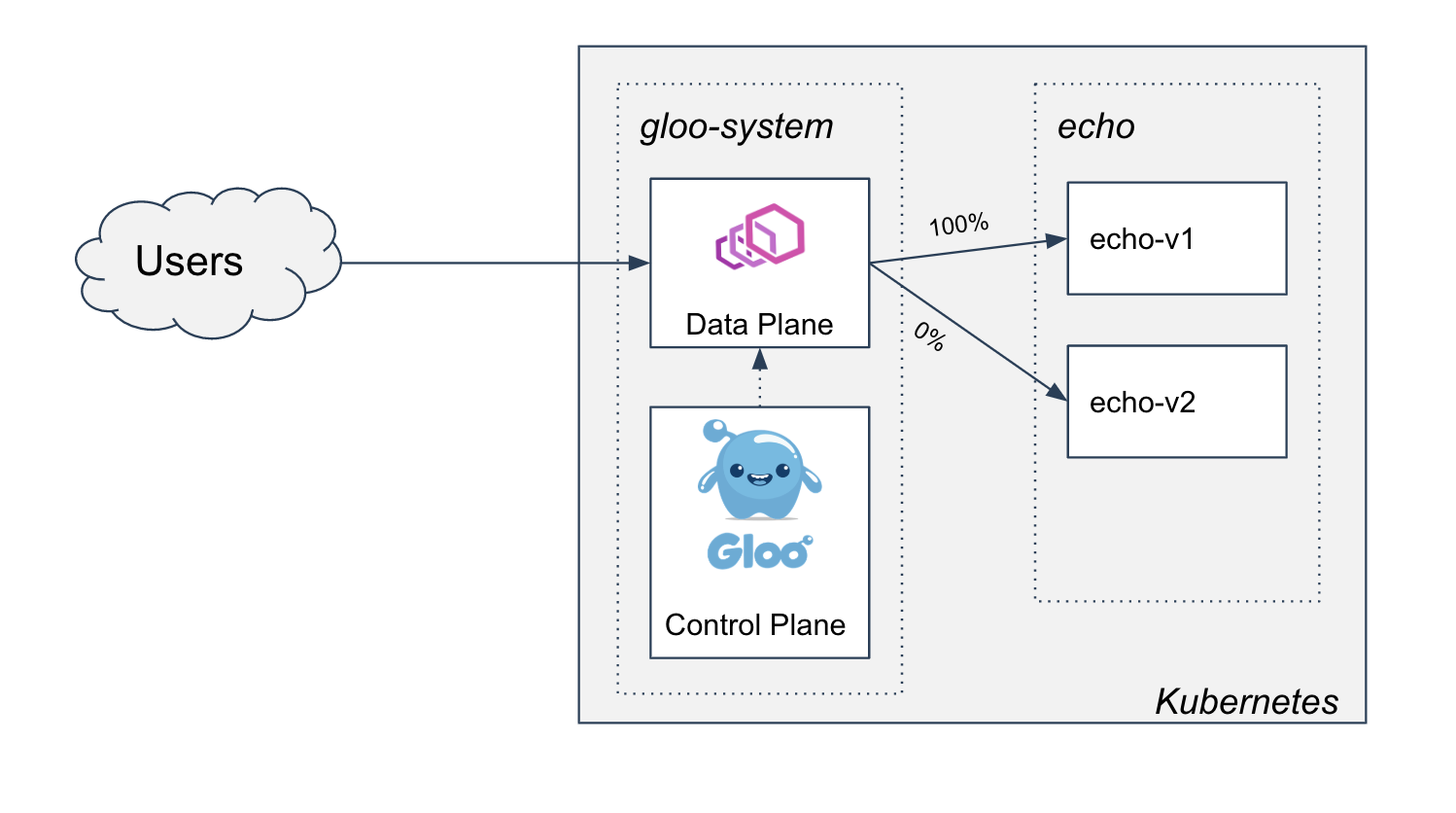

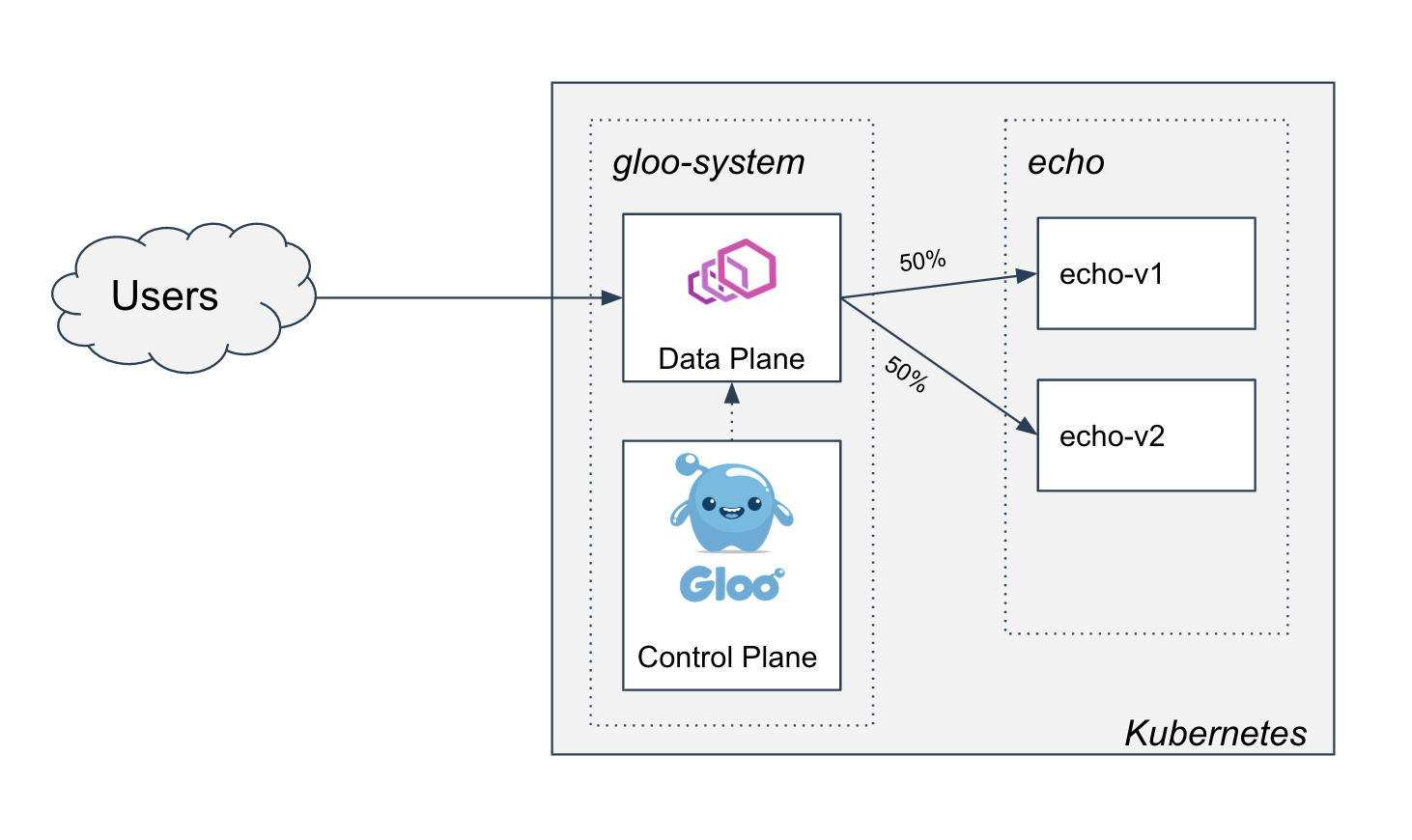

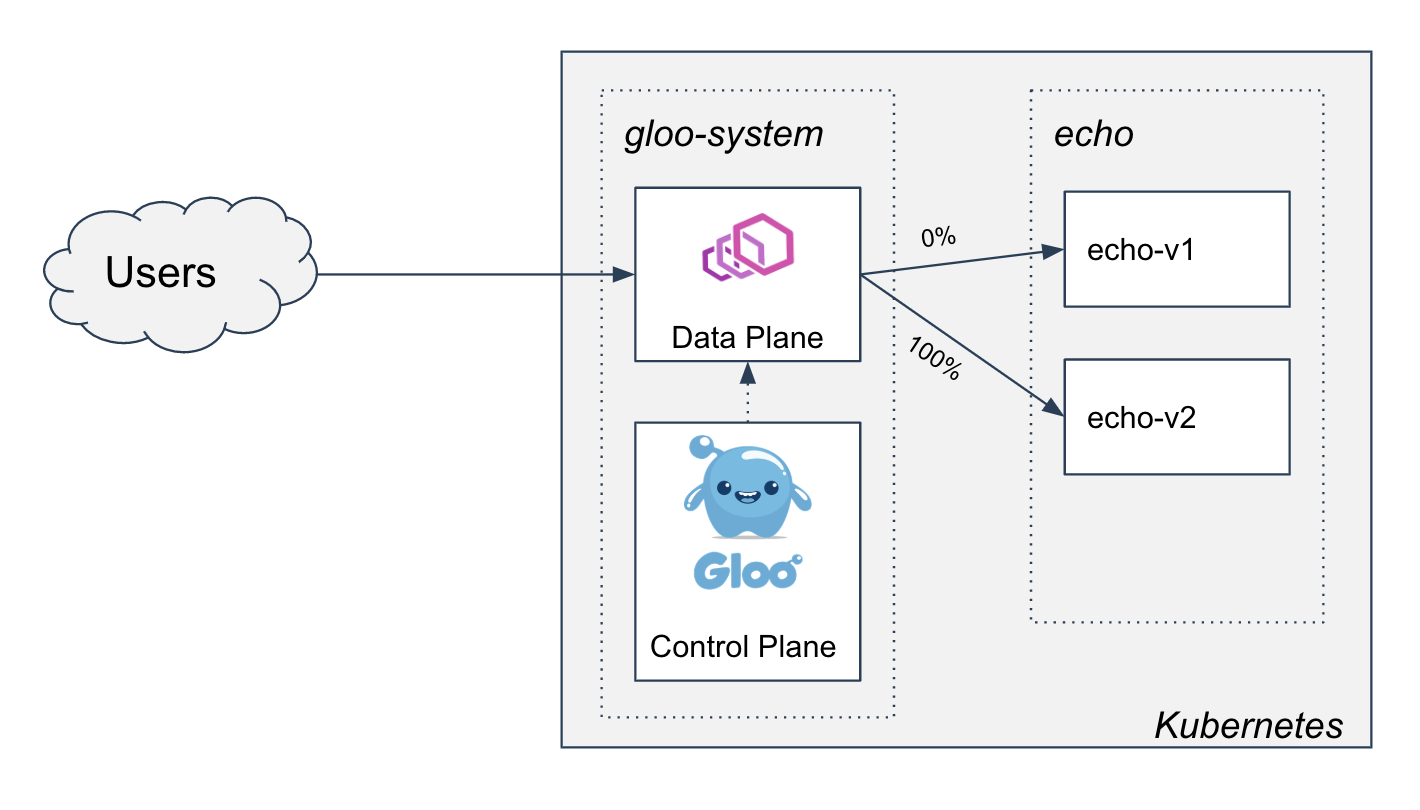

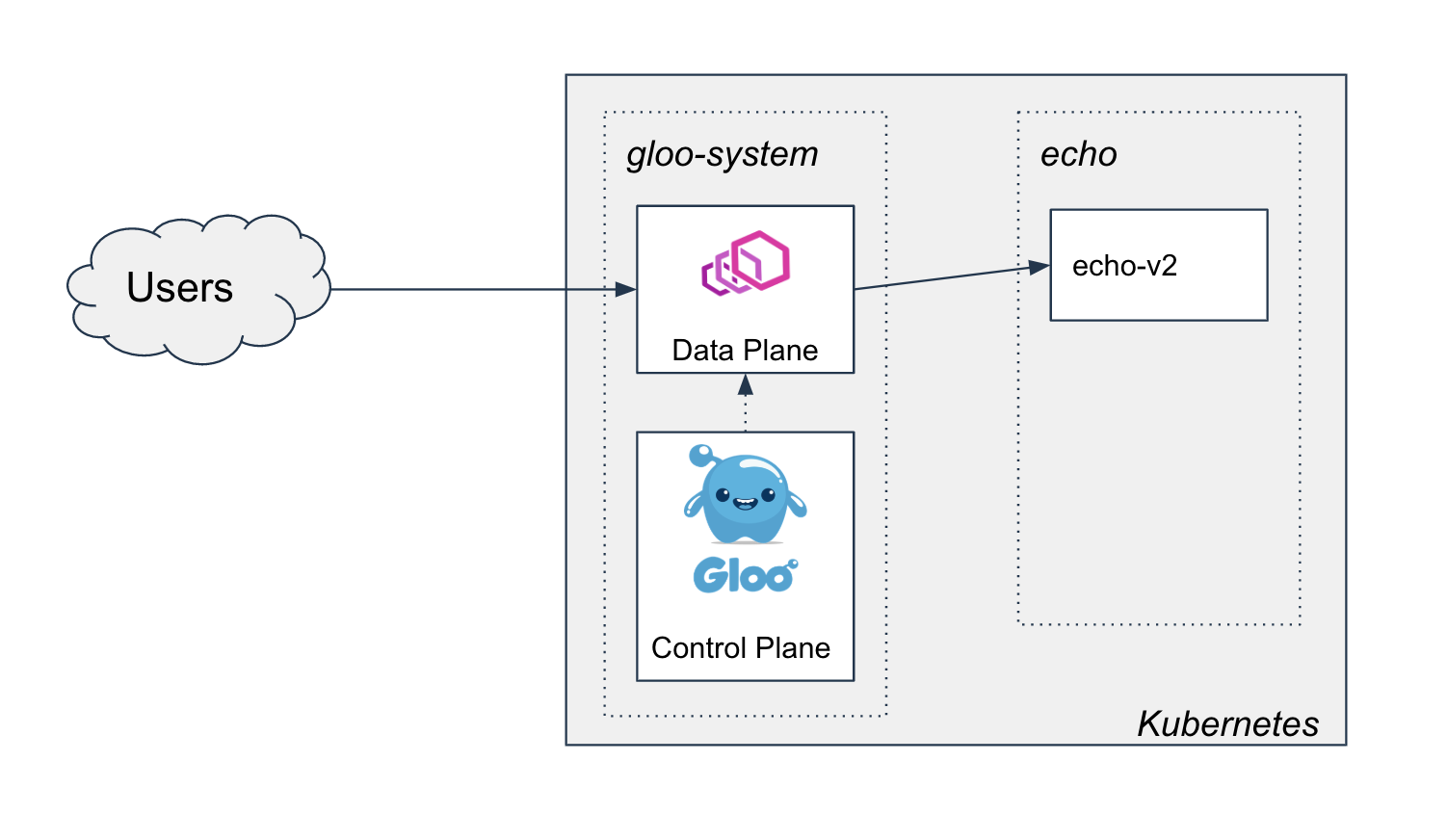

- Two-phased Canary Rollout with Open Source Gloo

- Cluster API v1alpha3 Delivers New Features and an Improved User Experience

- How Kubernetes contributors are building a better communication process

- API Priority and Fairness Alpha

- Introducing Windows CSI support alpha for Kubernetes

- Improvements to the Ingress API in Kubernetes 1.18

- Kubernetes 1.18 Feature Server-side Apply Beta 2

- Kubernetes Topology Manager Moves to Beta - Align Up!

- Kubernetes 1.18: Fit & Finish

- Join SIG Scalability and Learn Kubernetes the Hard Way

- Kong Ingress Controller and Service Mesh: Setting up Ingress to Istio on Kubernetes

- Contributor Summit Amsterdam Postponed

- Bring your ideas to the world with kubectl plugins

- Contributor Summit Amsterdam Schedule Announced

- Deploying External OpenStack Cloud Provider with Kubeadm

- KubeInvaders - Gamified Chaos Engineering Tool for Kubernetes

- CSI Ephemeral Inline Volumes

- Reviewing 2019 in Docs

- Kubernetes on MIPS

- Announcing the Kubernetes bug bounty program

- Remembering Brad Childs

- Testing of CSI drivers

- Kubernetes 1.17: Stability

- Kubernetes 1.17 Feature: Kubernetes Volume Snapshot Moves to Beta

- Kubernetes 1.17 Feature: Kubernetes In-Tree to CSI Volume Migration Moves to Beta

- When you're in the release team, you're family: the Kubernetes 1.16 release interview

- Gardener Project Update

- Develop a Kubernetes controller in Java

- Running Kubernetes locally on Linux with Microk8s

- Grokkin' the Docs

- Kubernetes Documentation Survey

- Contributor Summit San Diego Schedule Announced!

- 2019 Steering Committee Election Results

- Contributor Summit San Diego Registration Open!

- Kubernetes 1.16: Custom Resources, Overhauled Metrics, and Volume Extensions

- Announcing etcd 3.4

- OPA Gatekeeper: Policy and Governance for Kubernetes

- Get started with Kubernetes (using Python)

- Deprecated APIs Removed In 1.16: Here’s What You Need To Know

- Recap of Kubernetes Contributor Summit Barcelona 2019

- Automated High Availability in kubeadm v1.15: Batteries Included But Swappable

- Introducing Volume Cloning Alpha for Kubernetes

- Future of CRDs: Structural Schemas

- Kubernetes 1.15: Extensibility and Continuous Improvement

- Join us at the Contributor Summit in Shanghai

- Kyma - extend and build on Kubernetes with ease

- Kubernetes, Cloud Native, and the Future of Software

- Expanding our Contributor Workshops

- Cat shirts and Groundhog Day: the Kubernetes 1.14 release interview

- Join us for the 2019 KubeCon Diversity Lunch & Hack

- How You Can Help Localize Kubernetes Docs

- Hardware Accelerated SSL/TLS Termination in Ingress Controllers using Kubernetes Device Plugins and RuntimeClass

- Introducing kube-iptables-tailer: Better Networking Visibility in Kubernetes Clusters

- The Future of Cloud Providers in Kubernetes

- Pod Priority and Preemption in Kubernetes

- Process ID Limiting for Stability Improvements in Kubernetes 1.14

- Kubernetes 1.14: Local Persistent Volumes GA

- Kubernetes v1.14 delivers production-level support for Windows nodes and Windows containers

- kube-proxy Subtleties: Debugging an Intermittent Connection Reset

- Running Kubernetes locally on Linux with Minikube - now with Kubernetes 1.14 support

- Kubernetes 1.14: Production-level support for Windows Nodes, Kubectl Updates, Persistent Local Volumes GA

- Kubernetes End-to-end Testing for Everyone

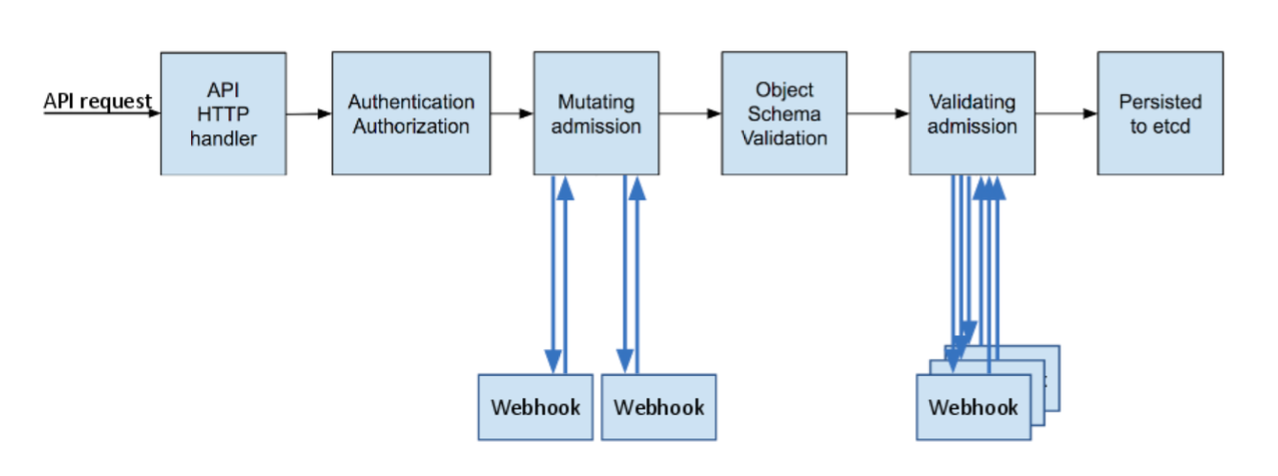

- A Guide to Kubernetes Admission Controllers

- A Look Back and What's in Store for Kubernetes Contributor Summits

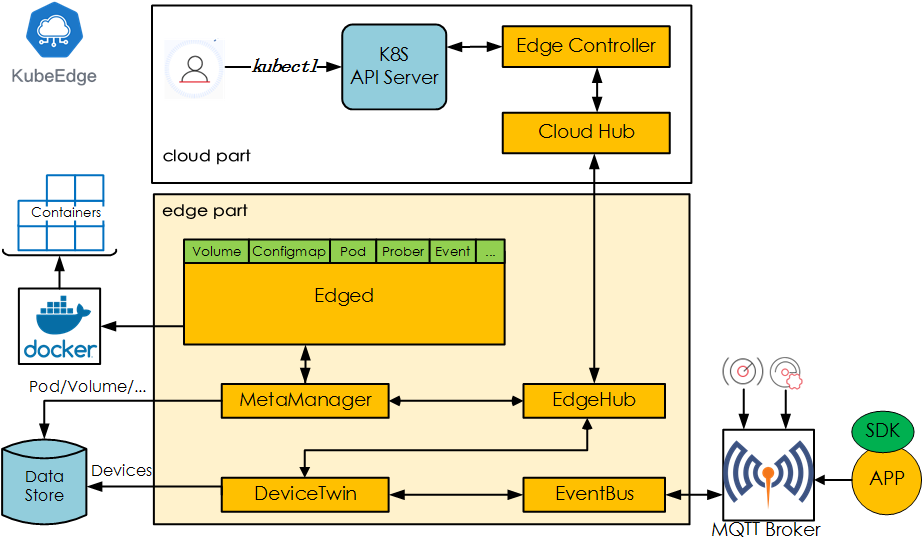

- KubeEdge, a Kubernetes Native Edge Computing Framework

- Kubernetes Setup Using Ansible and Vagrant

- Raw Block Volume support to Beta

- Automate Operations on your Cluster with OperatorHub.io

- Building a Kubernetes Edge (Ingress) Control Plane for Envoy v2

- Runc and CVE-2019-5736

- Poseidon-Firmament Scheduler – Flow Network Graph Based Scheduler

- Update on Volume Snapshot Alpha for Kubernetes

- Container Storage Interface (CSI) for Kubernetes GA

- APIServer dry-run and kubectl diff

- Kubernetes Federation Evolution

- etcd: Current status and future roadmap

- New Contributor Workshop Shanghai

- Production-Ready Kubernetes Cluster Creation with kubeadm

- Kubernetes 1.13: Simplified Cluster Management with Kubeadm, Container Storage Interface (CSI), and CoreDNS as Default DNS are Now Generally Available

- Kubernetes Docs Updates, International Edition

- gRPC Load Balancing on Kubernetes without Tears

- Tips for Your First Kubecon Presentation - Part 2

- Tips for Your First Kubecon Presentation - Part 1

- Kubernetes 2018 North American Contributor Summit

- 2018 Steering Committee Election Results

- Topology-Aware Volume Provisioning in Kubernetes

- Kubernetes v1.12: Introducing RuntimeClass

- Introducing Volume Snapshot Alpha for Kubernetes

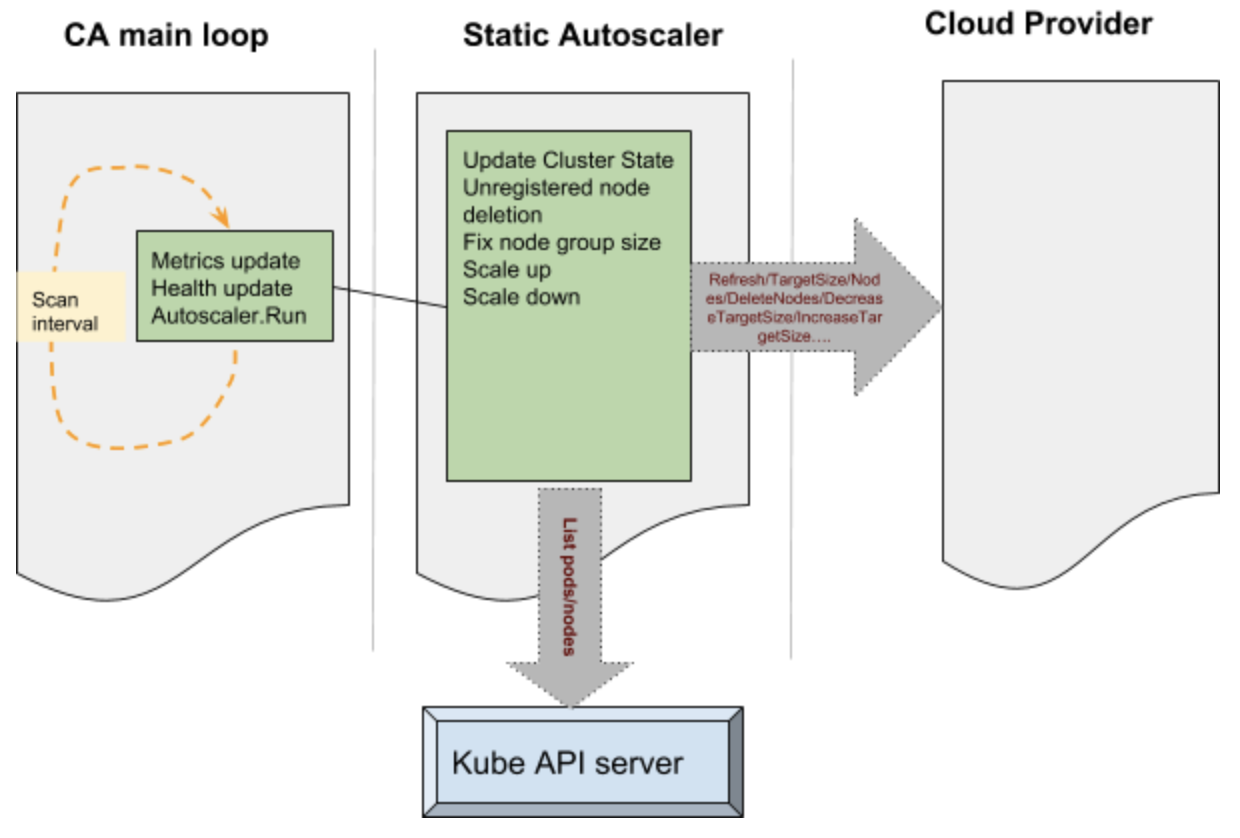

- Support for Azure VMSS, Cluster-Autoscaler and User Assigned Identity

- Introducing the Non-Code Contributor’s Guide

- KubeDirector: The easy way to run complex stateful applications on Kubernetes

- Building a Network Bootable Server Farm for Kubernetes with LTSP

- Health checking gRPC servers on Kubernetes

- Kubernetes 1.12: Kubelet TLS Bootstrap and Azure Virtual Machine Scale Sets (VMSS) Move to General Availability

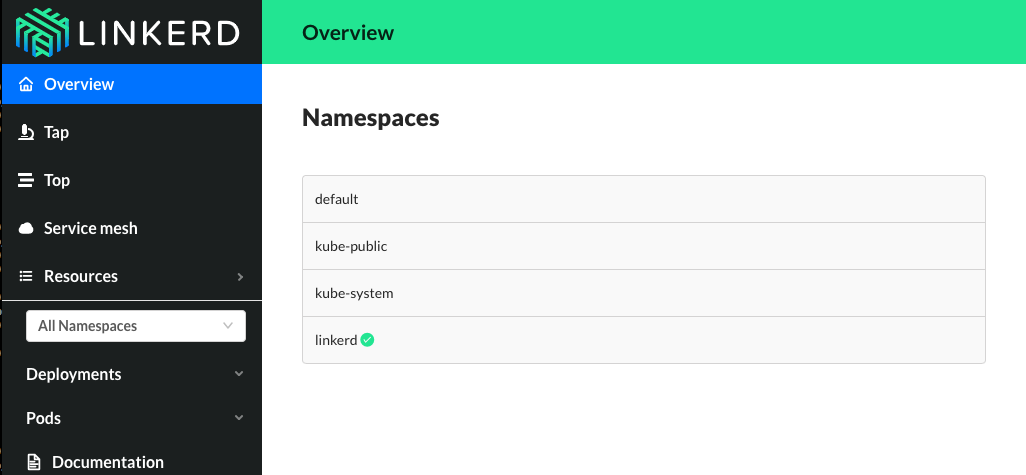

- Hands On With Linkerd 2.0

- 2018 Steering Committee Election Cycle Kicks Off

- The Machines Can Do the Work, a Story of Kubernetes Testing, CI, and Automating the Contributor Experience

- Introducing Kubebuilder: an SDK for building Kubernetes APIs using CRDs

- Out of the Clouds onto the Ground: How to Make Kubernetes Production Grade Anywhere

- Dynamically Expand Volume with CSI and Kubernetes

- KubeVirt: Extending Kubernetes with CRDs for Virtualized Workloads

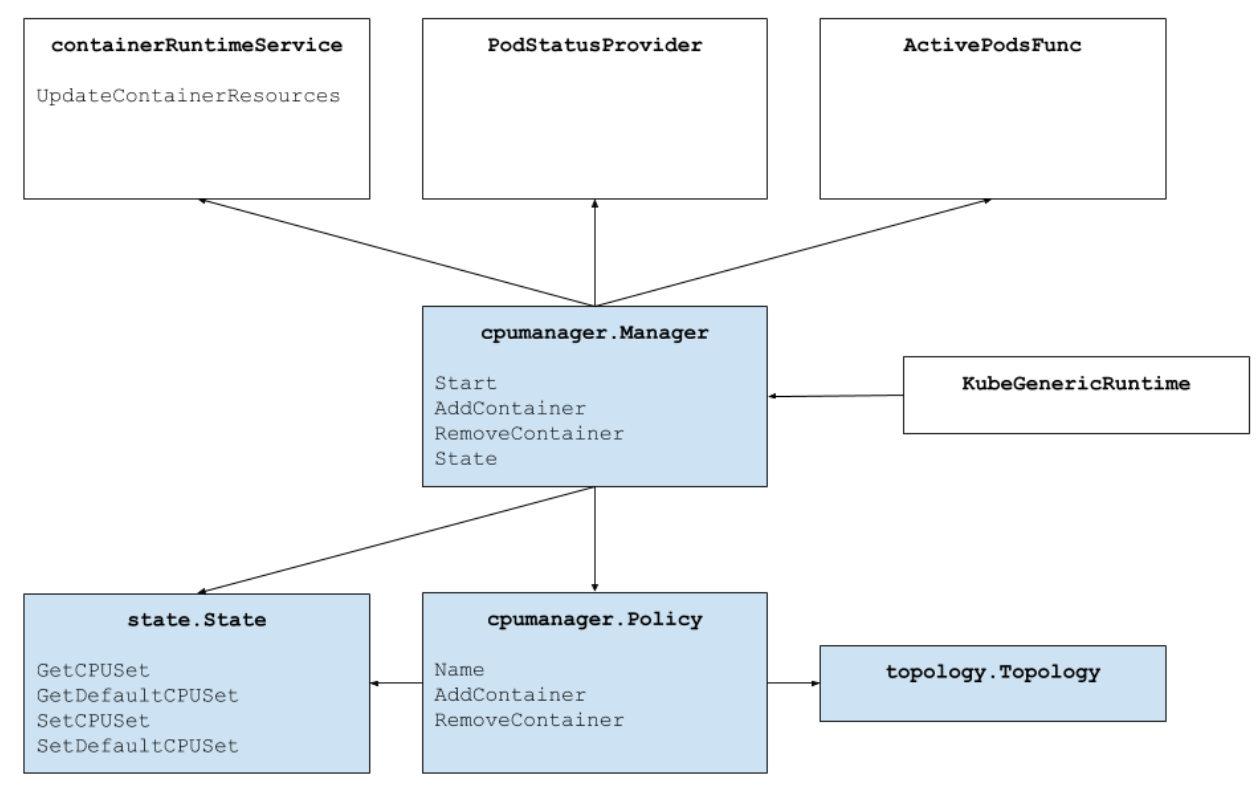

- Feature Highlight: CPU Manager

- The History of Kubernetes & the Community Behind It

- Kubernetes Wins the 2018 OSCON Most Impact Award

- 11 Ways (Not) to Get Hacked

- How the sausage is made: the Kubernetes 1.11 release interview, from the Kubernetes Podcast

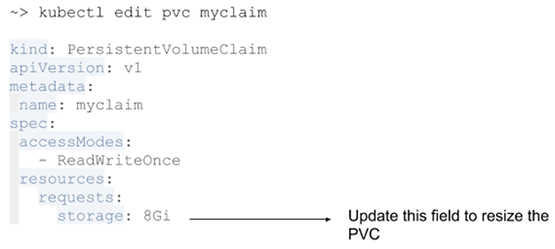

- Resizing Persistent Volumes using Kubernetes

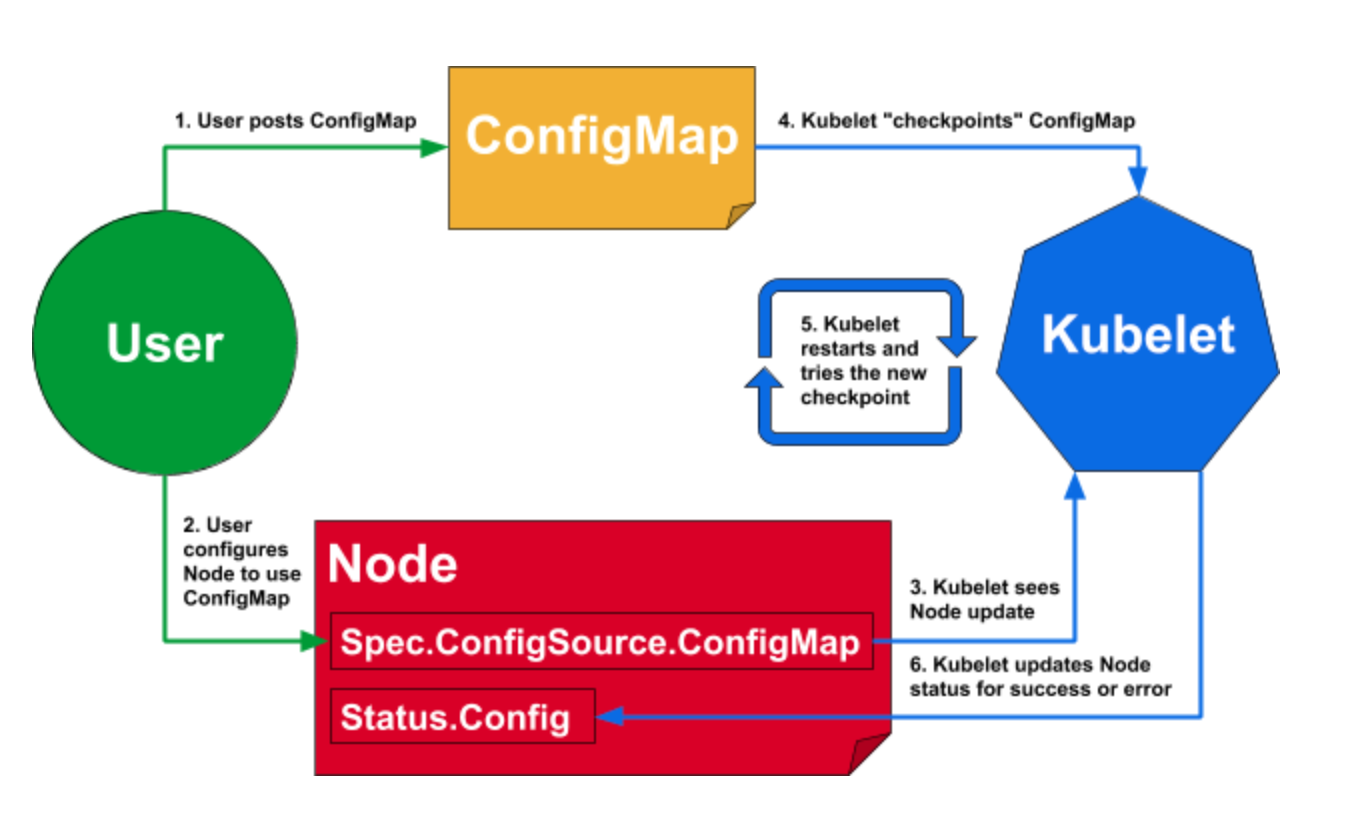

- Dynamic Kubelet Configuration

- CoreDNS GA for Kubernetes Cluster DNS

- Meet Our Contributors - Monthly Streaming YouTube Mentoring Series

- IPVS-Based In-Cluster Load Balancing Deep Dive

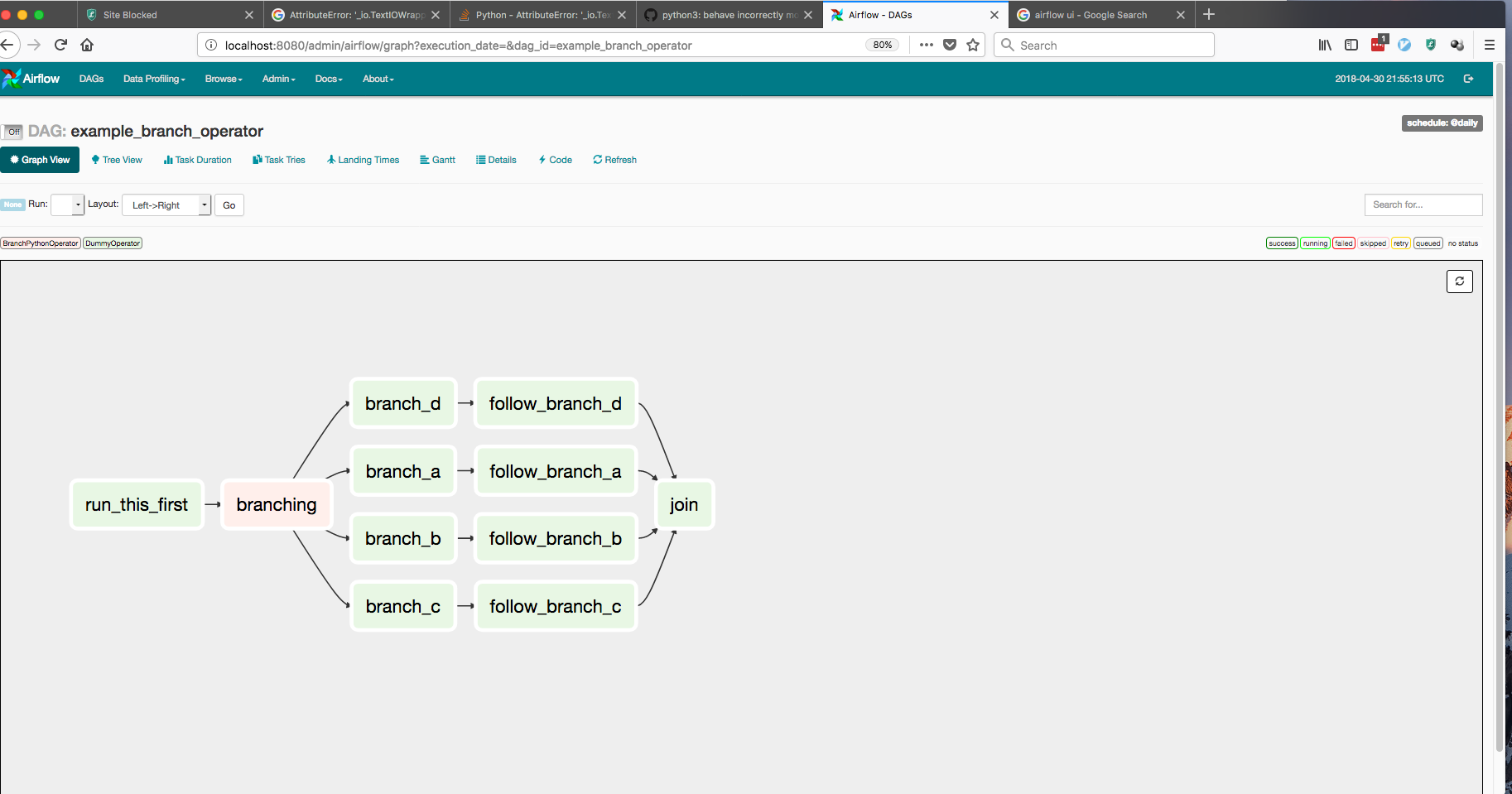

- Airflow on Kubernetes (Part 1): A Different Kind of Operator

- Kubernetes 1.11: In-Cluster Load Balancing and CoreDNS Plugin Graduate to General Availability

- Dynamic Ingress in Kubernetes

- 4 Years of K8s

- Say Hello to Discuss Kubernetes

- Introducing kustomize; Template-free Configuration Customization for Kubernetes

- Kubernetes Containerd Integration Goes GA

- Getting to Know Kubevirt

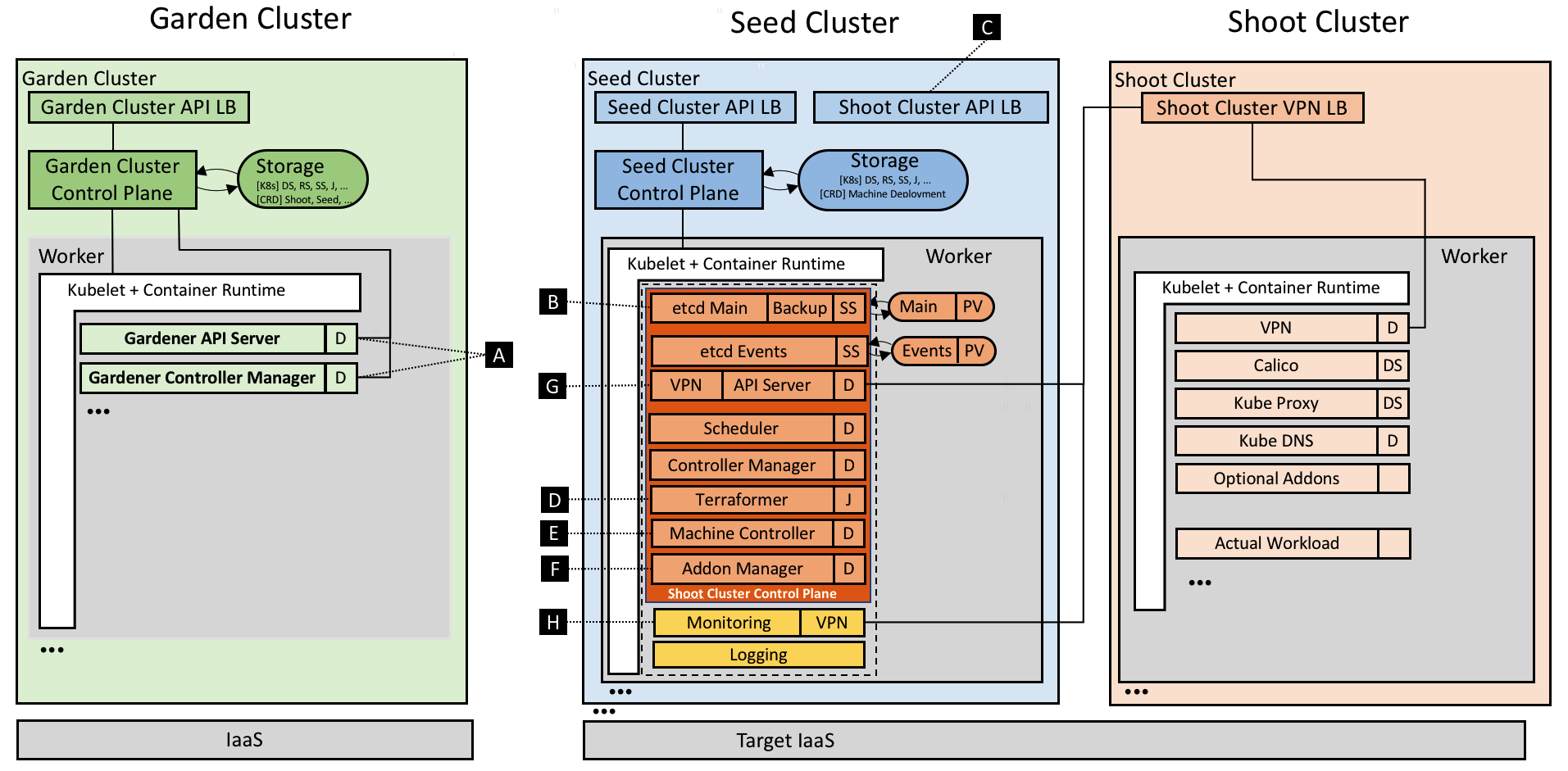

- Gardener - The Kubernetes Botanist

- Docs are Migrating from Jekyll to Hugo

- Announcing Kubeflow 0.1

- Current State of Policy in Kubernetes

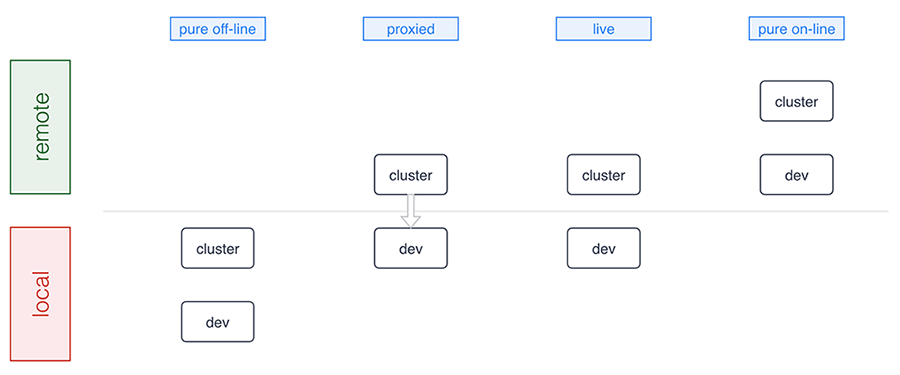

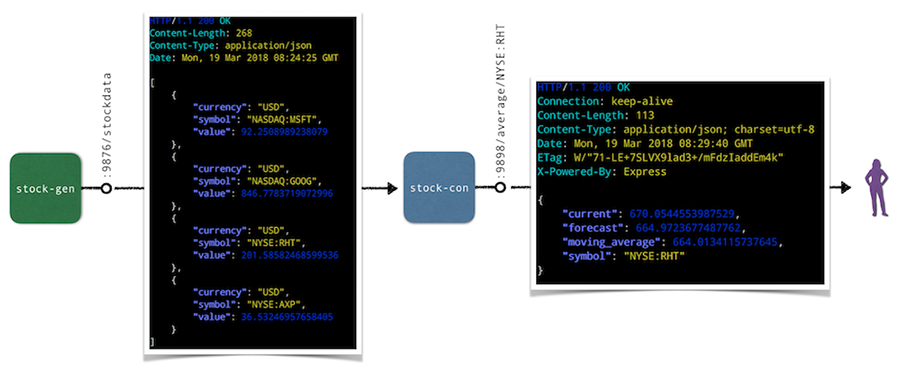

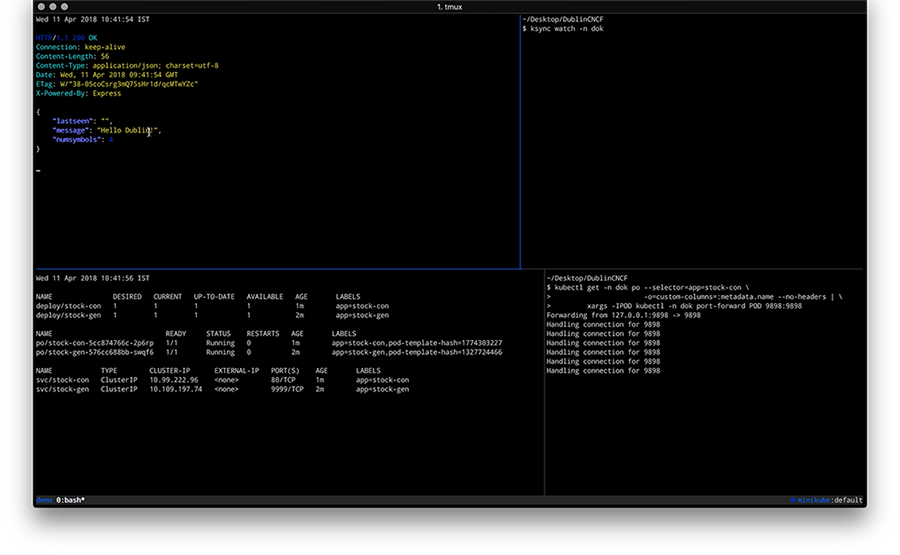

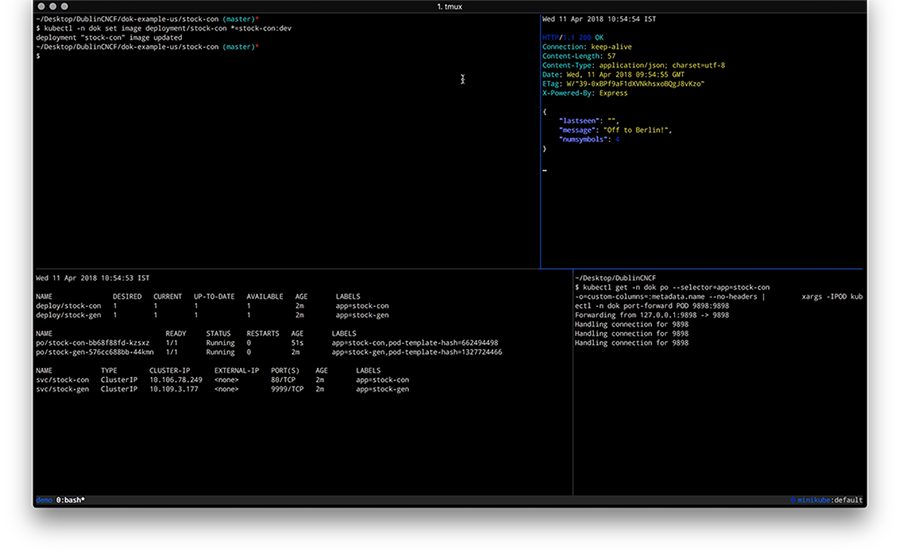

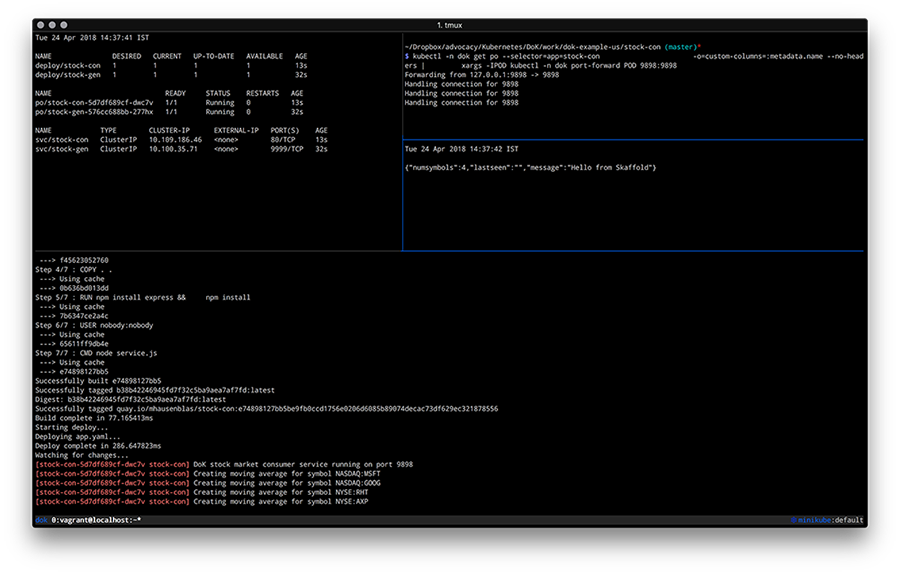

- Developing on Kubernetes

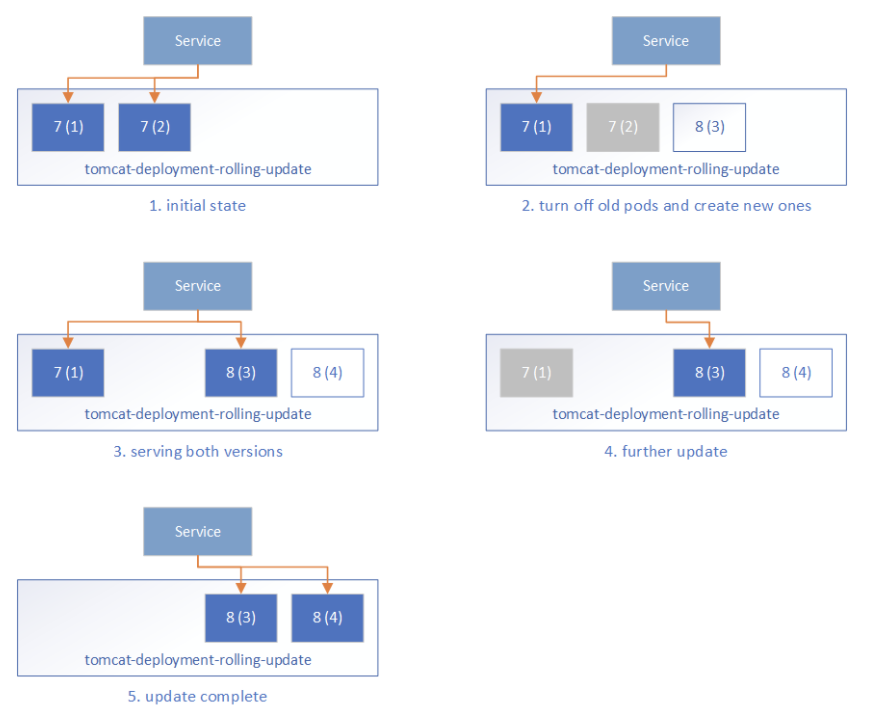

- Zero-downtime Deployment in Kubernetes with Jenkins

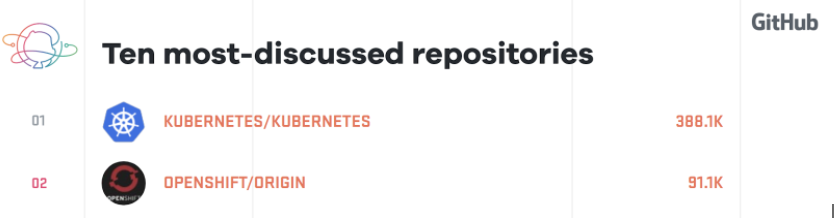

- Kubernetes Community - Top of the Open Source Charts in 2017

- Kubernetes Application Survey 2018 Results

- Local Persistent Volumes for Kubernetes Goes Beta

- Migrating the Kubernetes Blog

- Container Storage Interface (CSI) for Kubernetes Goes Beta

- Fixing the Subpath Volume Vulnerability in Kubernetes

- Kubernetes 1.10: Stabilizing Storage, Security, and Networking

- Principles of Container-based Application Design

- Expanding User Support with Office Hours

- How to Integrate RollingUpdate Strategy for TPR in Kubernetes

- Apache Spark 2.3 with Native Kubernetes Support

- Kubernetes: First Beta Version of Kubernetes 1.10 is Here

- Reporting Errors from Control Plane to Applications Using Kubernetes Events

- Core Workloads API GA

- Introducing client-go version 6

- Extensible Admission is Beta

- Introducing Container Storage Interface (CSI) Alpha for Kubernetes

- Kubernetes v1.9 releases beta support for Windows Server Containers

- Five Days of Kubernetes 1.9

- Introducing Kubeflow - A Composable, Portable, Scalable ML Stack Built for Kubernetes

- Kubernetes 1.9: Apps Workloads GA and Expanded Ecosystem

- Using eBPF in Kubernetes

- PaddlePaddle Fluid: Elastic Deep Learning on Kubernetes

- Autoscaling in Kubernetes

- Certified Kubernetes Conformance Program: Launch Celebration Round Up

- Kubernetes is Still Hard (for Developers)

- Securing Software Supply Chain with Grafeas

- Containerd Brings More Container Runtime Options for Kubernetes

- Kubernetes the Easy Way

- Enforcing Network Policies in Kubernetes

- Using RBAC, Generally Available in Kubernetes v1.8

- It Takes a Village to Raise a Kubernetes

- kubeadm v1.8 Released: Introducing Easy Upgrades for Kubernetes Clusters

- Five Days of Kubernetes 1.8

- Introducing Software Certification for Kubernetes

- Request Routing and Policy Management with the Istio Service Mesh

- Kubernetes Community Steering Committee Election Results

- Kubernetes 1.8: Security, Workloads and Feature Depth

- Kubernetes StatefulSets & DaemonSets Updates

- Introducing the Resource Management Working Group

- Windows Networking at Parity with Linux for Kubernetes

- Kubernetes Meets High-Performance Computing

- High Performance Networking with EC2 Virtual Private Clouds

- Kompose Helps Developers Move Docker Compose Files to Kubernetes

- Happy Second Birthday: A Kubernetes Retrospective

- How Watson Health Cloud Deploys Applications with Kubernetes

- Kubernetes 1.7: Security Hardening, Stateful Application Updates and Extensibility

- Managing microservices with the Istio service mesh

- Draft: Kubernetes container development made easy

- Kubernetes: a monitoring guide

- Kubespray Ansible Playbooks foster Collaborative Kubernetes Ops

- Dancing at the Lip of a Volcano: The Kubernetes Security Process - Explained

- How Bitmovin is Doing Multi-Stage Canary Deployments with Kubernetes in the Cloud and On-Prem

- RBAC Support in Kubernetes

- Configuring Private DNS Zones and Upstream Nameservers in Kubernetes

- Advanced Scheduling in Kubernetes

- Scalability updates in Kubernetes 1.6: 5,000 node and 150,000 pod clusters

- Dynamic Provisioning and Storage Classes in Kubernetes

- Five Days of Kubernetes 1.6

- Kubernetes 1.6: Multi-user, Multi-workloads at Scale

- The K8sPort: Engaging Kubernetes Community One Activity at a Time

- Deploying PostgreSQL Clusters using StatefulSets

- Containers as a Service, the foundation for next generation PaaS

- Inside JD.com's Shift to Kubernetes from OpenStack

- Run Deep Learning with PaddlePaddle on Kubernetes

- Highly Available Kubernetes Clusters

- Fission: Serverless Functions as a Service for Kubernetes

- Running MongoDB on Kubernetes with StatefulSets

- How we run Kubernetes in Kubernetes aka Kubeception

- Scaling Kubernetes deployments with Policy-Based Networking

- A Stronger Foundation for Creating and Managing Kubernetes Clusters

- Kubernetes UX Survey Infographic

- Kubernetes supports OpenAPI

- Cluster Federation in Kubernetes 1.5

- Windows Server Support Comes to Kubernetes

- StatefulSet: Run and Scale Stateful Applications Easily in Kubernetes

- Five Days of Kubernetes 1.5

- Introducing Container Runtime Interface (CRI) in Kubernetes

- Kubernetes 1.5: Supporting Production Workloads

- From Network Policies to Security Policies

- Kompose: a tool to go from Docker-compose to Kubernetes

- Kubernetes Containers Logging and Monitoring with Sematext

- Visualize Kubelet Performance with Node Dashboard

- CNCF Partners With The Linux Foundation To Launch New Kubernetes Certification, Training and Managed Service Provider Program

- Bringing Kubernetes Support to Azure Container Service

- Modernizing the Skytap Cloud Micro-Service Architecture with Kubernetes

- Introducing Kubernetes Service Partners program and a redesigned Partners page

- Tail Kubernetes with Stern

- How We Architected and Run Kubernetes on OpenStack at Scale at Yahoo! JAPAN

- Building Globally Distributed Services using Kubernetes Cluster Federation

- Helm Charts: making it simple to package and deploy common applications on Kubernetes

- Dynamic Provisioning and Storage Classes in Kubernetes

- How we improved Kubernetes Dashboard UI in 1.4 for your production needs

- How we made Kubernetes insanely easy to install

- How Qbox Saved 50% per Month on AWS Bills Using Kubernetes and Supergiant

- Kubernetes 1.4: Making it easy to run on Kubernetes anywhere

- High performance network policies in Kubernetes clusters

- Creating a PostgreSQL Cluster using Helm

- Deploying to Multiple Kubernetes Clusters with kit

- Cloud Native Application Interfaces

- Security Best Practices for Kubernetes Deployment

- Scaling Stateful Applications using Kubernetes Pet Sets and FlexVolumes with Datera Elastic Data Fabric

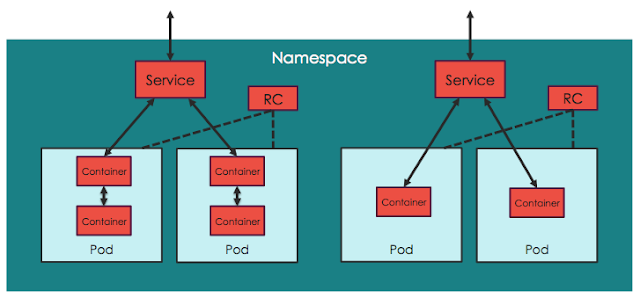

- Kubernetes Namespaces: use cases and insights

- SIG Apps: build apps for and operate them in Kubernetes

- Create a Couchbase cluster using Kubernetes

- Challenges of a Remotely Managed, On-Premises, Bare-Metal Kubernetes Cluster

- Why OpenStack's embrace of Kubernetes is great for both communities

- A Very Happy Birthday Kubernetes

- Happy Birthday Kubernetes. Oh, the places you’ll go!

- The Bet on Kubernetes, a Red Hat Perspective

- Bringing End-to-End Kubernetes Testing to Azure (Part 2)

- Dashboard - Full Featured Web Interface for Kubernetes

- Steering an Automation Platform at Wercker with Kubernetes

- Citrix + Kubernetes = A Home Run

- Cross Cluster Services - Achieving Higher Availability for your Kubernetes Applications

- Stateful Applications in Containers!? Kubernetes 1.3 Says “Yes!”

- Thousand Instances of Cassandra using Kubernetes Pet Set

- Autoscaling in Kubernetes

- Kubernetes in Rancher: the further evolution

- Five Days of Kubernetes 1.3

- Minikube: easily run Kubernetes locally

- rktnetes brings rkt container engine to Kubernetes

- Updates to Performance and Scalability in Kubernetes 1.3 -- 2,000 node 60,000 pod clusters

- Kubernetes 1.3: Bridging Cloud Native and Enterprise Workloads

- Container Design Patterns

- The Illustrated Children's Guide to Kubernetes

- Bringing End-to-End Kubernetes Testing to Azure (Part 1)

- Hypernetes: Bringing Security and Multi-tenancy to Kubernetes

- CoreOS Fest 2016: CoreOS and Kubernetes Community meet in Berlin (& San Francisco)

- Introducing the Kubernetes OpenStack Special Interest Group

- SIG-UI: the place for building awesome user interfaces for Kubernetes

- SIG-ClusterOps: Promote operability and interoperability of Kubernetes clusters

- SIG-Networking: Kubernetes Network Policy APIs Coming in 1.3

- How to deploy secure, auditable, and reproducible Kubernetes clusters on AWS

- Adding Support for Kubernetes in Rancher

- Container survey results - March 2016

- Configuration management with Containers

- Using Deployment objects with Kubernetes 1.2

- Kubernetes 1.2 and simplifying advanced networking with Ingress

- Using Spark and Zeppelin to process big data on Kubernetes 1.2

- AppFormix: Helping Enterprises Operationalize Kubernetes

- Building highly available applications using Kubernetes new multi-zone clusters (a.k.a. 'Ubernetes Lite')

- 1000 nodes and beyond: updates to Kubernetes performance and scalability in 1.2

- Five Days of Kubernetes 1.2

- How container metadata changes your point of view

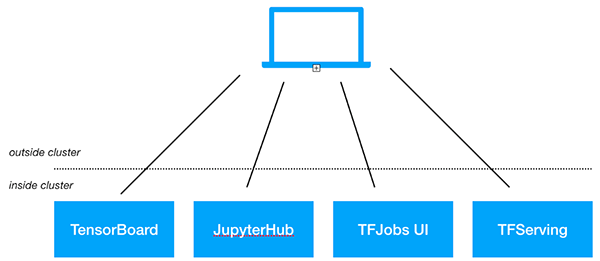

- Scaling neural network image classification using Kubernetes with TensorFlow Serving

- Kubernetes 1.2: Even more performance upgrades, plus easier application deployment and management

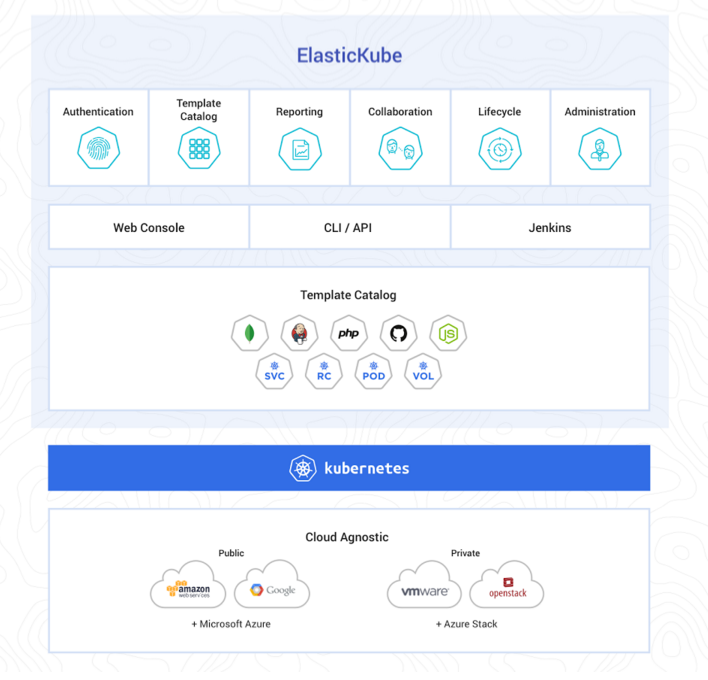

- ElasticBox introduces ElasticKube to help manage Kubernetes within the enterprise

- Kubernetes in the Enterprise with Fujitsu’s Cloud Load Control

- Kubernetes Community Meeting Notes - 20160225

- State of the Container World, February 2016

- KubeCon EU 2016: Kubernetes Community in London

- Kubernetes Community Meeting Notes - 20160218

- Kubernetes Community Meeting Notes - 20160211

- ShareThis: Kubernetes In Production

- Kubernetes Community Meeting Notes - 20160204

- Kubernetes Community Meeting Notes - 20160128

- State of the Container World, January 2016

- Kubernetes Community Meeting Notes - 20160114

- Kubernetes Community Meeting Notes - 20160121

- Why Kubernetes doesn’t use libnetwork

- Simple leader election with Kubernetes and Docker

- Creating a Raspberry Pi cluster running Kubernetes, the installation (Part 2)

- Managing Kubernetes Pods, Services and Replication Controllers with Puppet

- How Weave built a multi-deployment solution for Scope using Kubernetes

- Creating a Raspberry Pi cluster running Kubernetes, the shopping list (Part 1)

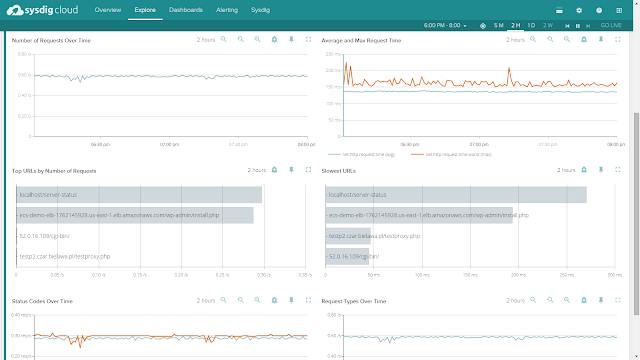

- Monitoring Kubernetes with Sysdig

- One million requests per second: Dependable and dynamic distributed systems at scale

- Kubernetes 1.1 Performance upgrades, improved tooling and a growing community

- Kubernetes as Foundation for Cloud Native PaaS

- Some things you didn’t know about kubectl

- Kubernetes Performance Measurements and Roadmap

- Using Kubernetes Namespaces to Manage Environments

- Weekly Kubernetes Community Hangout Notes - July 31 2015

- The Growing Kubernetes Ecosystem

- Weekly Kubernetes Community Hangout Notes - July 17 2015

- Strong, Simple SSL for Kubernetes Services

- Weekly Kubernetes Community Hangout Notes - July 10 2015

- Announcing the First Kubernetes Enterprise Training Course

- How did the Quake demo from DockerCon Work?

- Kubernetes 1.0 Launch Event at OSCON

- The Distributed System ToolKit: Patterns for Composite Containers

- Slides: Cluster Management with Kubernetes, talk given at the University of Edinburgh

- Cluster Level Logging with Kubernetes

- Weekly Kubernetes Community Hangout Notes - May 22 2015

- Kubernetes on OpenStack

- Docker and Kubernetes and AppC

- Weekly Kubernetes Community Hangout Notes - May 15 2015

- Kubernetes Release: 0.17.0

- Resource Usage Monitoring in Kubernetes

- Weekly Kubernetes Community Hangout Notes - May 1 2015

- Kubernetes Release: 0.16.0

- AppC Support for Kubernetes through RKT

- Weekly Kubernetes Community Hangout Notes - April 24 2015

- Borg: The Predecessor to Kubernetes

- Kubernetes and the Mesosphere DCOS

- Weekly Kubernetes Community Hangout Notes - April 17 2015

- Introducing Kubernetes API Version v1beta3

- Kubernetes Release: 0.15.0

- Weekly Kubernetes Community Hangout Notes - April 10 2015

- Faster than a speeding Latte

- Weekly Kubernetes Community Hangout Notes - April 3 2015

- Participate in a Kubernetes User Experience Study

- Weekly Kubernetes Community Hangout Notes - March 27 2015

- Kubernetes Gathering Videos

- Welcome to the Kubernetes Blog!

Announcing Ingress2Gateway 1.0: Your Path to Gateway API

With the Ingress-NGINX retirement scheduled for March 2026, the Kubernetes networking landscape is at a turning point. For most organizations, the question isn't whether to migrate to Gateway API, but how to do so safely.

Migrating from Ingress to Gateway API is a fundamental shift in API design. Gateway API provides a modular, extensible API with strong support for Kubernetes-native RBAC. Conversely, the Ingress API is simple, and implementations such as Ingress-NGINX extend the API through esoteric annotations, ConfigMaps, and CRDs. Migrating away from Ingress controllers such as Ingress-NGINX presents the daunting task of capturing all the nuances of the Ingress controller, and mapping that behavior to Gateway API.

Ingress2Gateway is an assistant that helps teams confidently move from Ingress to Gateway API. It translates Ingress resources/manifests along with implementation-specific annotations to Gateway API while warning you about untranslatable configuration and offering suggestions.

Today, SIG Network is proud to announce the 1.0 release of Ingress2Gateway. This milestone represents a stable, tested migration assistant for teams ready to modernize their networking stack.

Ingress2Gateway 1.0

Ingress-NGINX annotation support

The main improvement for the 1.0 release is more comprehensive Ingress-NGINX support. Before the 1.0 release, Ingress2Gateway only supported three Ingress-NGINX annotations. For the 1.0 release, Ingress2Gateway supports over 30 common annotations (CORS, backend TLS, regex matching, path rewrite, etc.).

Comprehensive integration testing

Each supported Ingress-NGINX annotation, and representative combinations of common annotations, is backed by controller-level integration tests that verify the behavioral equivalence of the Ingress-NGINX configuration and the generated Gateway API. These tests exercise real controllers in live clusters and compare runtime behavior (routing, redirects, rewrites, etc.), not just YAML structure.

The tests:

- spin up an Ingress-NGINX controller

- spin up multiple Gateway API controllers

- apply Ingress resources that have implementation-specific configuration

- translate Ingress resources to Gateway API with ingress2gateway and apply generated manifests

- verify that the Gateway API controllers and the Ingress controller exhibit equivalent behavior

A comprehensive test suite not only catches bugs in development, but also ensures the correctness of the translation, especially given surprising edge cases and unexpected defaults, so that you don't find out about them in production.

Notification & error handling

Migration is not a "one-click" affair. Surfacing subtleties and untranslatable behavior is as important as translating supported configuration. The 1.0 release cleans up the formatting and content of notifications, so it is clear what is missing and how you can fix it.

Using Ingress2Gateway

Ingress2Gateway is a migration assistant, not a one-shot replacement. Its goal is to

- migrate supported Ingress configuration and behavior

- identify unsupported configuration and suggest alternatives

- reevaluate and potentially discard undesirable configuration

The rest of the section shows you how to safely migrate the following Ingress-NGINX configuration:

```yaml
apiVersion: networking.k8s.io/v1
kind: Ingress
metadata:
  annotations:
    nginx.ingress.kubernetes.io/proxy-body-size: "1G"
    nginx.ingress.kubernetes.io/use-regex: "true"
    nginx.ingress.kubernetes.io/proxy-send-timeout: "1"
    nginx.ingress.kubernetes.io/proxy-read-timeout: "1"
    nginx.ingress.kubernetes.io/enable-cors: "true"
    nginx.ingress.kubernetes.io/configuration-snippet: |
      more_set_headers "Request-Id: $req_id";
  name: my-ingress
  namespace: my-ns
spec:
  ingressClassName: nginx
  rules:
  - host: my-host.example.com
    http:
      paths:
      - backend:
          service:
            name: website-service
            port:
              number: 80
        path: /users/(\d+)
        pathType: ImplementationSpecific
  tls:
  - hosts:
    - my-host.example.com
    secretName: my-secret
```

1. Install Ingress2Gateway

If you have a Go environment set up, you can install Ingress2Gateway with

```shell
go install github.com/kubernetes-sigs/ingress2gateway@v1.0.0
```

Otherwise,

```shell
brew install ingress2gateway
```

You can also download the binary from GitHub or build from source.

2. Run Ingress2Gateway

You can pass Ingress2Gateway Ingress manifests, or have the tool read directly from your cluster.

```shell
# Pass it files
ingress2gateway print --input-file my-manifest.yaml,my-other-manifest.yaml --providers=ingress-nginx > gwapi.yaml

# Use a namespace in your cluster
ingress2gateway print --namespace my-api --providers=ingress-nginx > gwapi.yaml

# Or your whole cluster
ingress2gateway print --providers=ingress-nginx --all-namespaces > gwapi.yaml
```

Note:

You can also pass --emitter <agentgateway|envoy-gateway|kgateway> to output implementation-specific extensions.

3. Review the output

This is the most critical step.

The commands from the previous section output a Gateway API manifest to gwapi.yaml, and they also emit warnings that explain what did not translate exactly and what to review manually.

```yaml
apiVersion: gateway.networking.k8s.io/v1
kind: Gateway
metadata:
  annotations:
    gateway.networking.k8s.io/generator: ingress2gateway-dev
  name: nginx
  namespace: my-ns
spec:
  gatewayClassName: nginx
  listeners:
  - hostname: my-host.example.com
    name: my-host-example-com-http
    port: 80
    protocol: HTTP
  - hostname: my-host.example.com
    name: my-host-example-com-https
    port: 443
    protocol: HTTPS
    tls:
      certificateRefs:
      - group: ""
        kind: Secret
        name: my-secret
---
apiVersion: gateway.networking.k8s.io/v1
kind: HTTPRoute
metadata:
  annotations:
    gateway.networking.k8s.io/generator: ingress2gateway-dev
  name: my-ingress-my-host-example-com
  namespace: my-ns
spec:
  hostnames:
  - my-host.example.com
  parentRefs:
  - name: nginx
    port: 443
  rules:
  - backendRefs:
    - name: website-service
      port: 80
    filters:
    - cors:
        allowCredentials: true
        allowHeaders:
        - DNT
        - Keep-Alive
        - User-Agent
        - X-Requested-With
        - If-Modified-Since
        - Cache-Control
        - Content-Type
        - Range
        - Authorization
        allowMethods:
        - GET
        - PUT
        - POST
        - DELETE
        - PATCH
        - OPTIONS
        allowOrigins:
        - '*'
        maxAge: 1728000
      type: CORS
    matches:
    - path:
        type: RegularExpression
        value: (?i)/users/(\d+).*
    name: rule-0
    timeouts:
      request: 10s
---
apiVersion: gateway.networking.k8s.io/v1
kind: HTTPRoute
metadata:
  annotations:
    gateway.networking.k8s.io/generator: ingress2gateway-dev
  name: my-ingress-my-host-example-com-ssl-redirect
  namespace: my-ns
spec:
  hostnames:
  - my-host.example.com
  parentRefs:
  - name: nginx
    port: 80
  rules:
  - filters:
    - requestRedirect:
        scheme: https
        statusCode: 308
      type: RequestRedirect
```

Ingress2Gateway successfully translated some annotations into their Gateway API equivalents.

For example, the nginx.ingress.kubernetes.io/enable-cors annotation was translated into a CORS filter.

But upon closer inspection, the nginx.ingress.kubernetes.io/proxy-{read,send}-timeout and nginx.ingress.kubernetes.io/proxy-body-size annotations do not map perfectly.

The logs show the reason for these omissions as well as reasoning behind the translation.

```text
┌─ WARN ────────────────────────────────────────
│ Unsupported annotation nginx.ingress.kubernetes.io/configuration-snippet
│ source: INGRESS-NGINX
│ object: Ingress: my-ns/my-ingress
└─
┌─ INFO ────────────────────────────────────────
│ Using case-insensitive regex path matches. You may want to change this.
│ source: INGRESS-NGINX
│ object: HTTPRoute: my-ns/my-ingress-my-host-example-com
└─
┌─ WARN ────────────────────────────────────────
│ ingress-nginx only supports TCP-level timeouts; i2gw has made a best-effort translation to Gateway API timeouts.request. Please verify that this meets your needs. See documentation: https://gateway-api.sigs.k8s.io/guides/http-timeouts/
│ source: INGRESS-NGINX
│ object: HTTPRoute: my-ns/my-ingress-my-host-example-com
└─
┌─ WARN ────────────────────────────────────────
│ Failed to apply my-ns.my-ingress.metadata.annotations."nginx.ingress.kubernetes.io/proxy-body-size" from my-ns/my-ingress: Most Gateway API implementations have reasonable body size and buffering defaults
│ source: STANDARD_EMITTER
│ object: HTTPRoute: my-ns/my-ingress-my-host-example-com
└─
┌─ WARN ────────────────────────────────────────
│ Gateway API does not support configuring URL normalization (RFC 3986, Section 6). Please check if this matters for your use case and consult implementation-specific details.
│ source: STANDARD_EMITTER
└─
```

There is a warning that Ingress2Gateway does not support the nginx.ingress.kubernetes.io/configuration-snippet annotation.

You will have to check your Gateway API implementation documentation to see if there is a way to achieve equivalent behavior.

The tool also notified us that Ingress-NGINX regex matches are case-insensitive prefix matches, which is why there is a match pattern of (?i)/users/(\d+).*.

Most organizations will want to change this behavior to be an exact case-sensitive match by removing the leading (?i) and the trailing .* from the path pattern.
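To see what removing the leading (?i) and the trailing .* changes in practice, here is a minimal sketch using Python's re module. It assumes the regular expression is matched against the whole request path, which is an assumption for illustration only: the regex dialect and anchoring of RegularExpression path matches depend on your Gateway API implementation. The sample paths and the helper name path_matches are made up.

```python
import re

# Pattern generated by Ingress2Gateway (mirrors Ingress-NGINX behavior).
GENERATED = r"(?i)/users/(\d+).*"
# Pattern after removing the leading (?i) and the trailing .*
TIGHTENED = r"/users/(\d+)"


def path_matches(pattern: str, path: str) -> bool:
    """Return True if the whole path matches the pattern.

    Assumption: the implementation anchors the regex to the full path.
    """
    return re.fullmatch(pattern, path) is not None


# Hypothetical request paths, for illustration only.
SAMPLES = ["/users/42", "/USERS/42", "/users/42/settings", "/users/abc"]

for sample in SAMPLES:
    print(
        f"{sample:20} generated={path_matches(GENERATED, sample)} "
        f"tightened={path_matches(TIGHTENED, sample)}"
    )
```

Under that assumption, the generated pattern accepts /USERS/42 and /users/42/settings, while the tightened pattern accepts only /users/42. Run the same kind of probe against your own implementation before relying on either form.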

Ingress2Gateway made a best-effort translation from the nginx.ingress.kubernetes.io/proxy-{send,read}-timeout annotations to a 10 second request timeout in our HTTP route.

If requests for this service should be much shorter, say 3 seconds, you can make the corresponding changes to your Gateway API manifests.

Also, nginx.ingress.kubernetes.io/proxy-body-size does not have a Gateway API equivalent, and was thus not translated.

However, most Gateway API implementations have reasonable defaults for maximum body size and buffering, so this might not be a problem in practice.

Further, some emitters might offer support for this annotation through implementation-specific extensions.

For example, adding the --emitter agentgateway, --emitter envoy-gateway, or --emitter kgateway flag to the previous ingress2gateway print command would have resulted in additional implementation-specific configuration in the generated Gateway API manifests that attempted to capture the body size configuration.

We also see a warning about URL normalization. Gateway API implementations such as Agentgateway, Envoy Gateway, Kgateway, and Istio have some level of URL normalization, but the behavior varies across implementations and is not configurable through standard Gateway API. You should check and test the URL normalization behavior of your Gateway API implementation to ensure it is compatible with your use case.

To match Ingress-NGINX default behavior, Ingress2Gateway also added a listener on port 80 and an HTTP request redirect filter to redirect HTTP traffic to HTTPS. If you do not want to serve HTTP traffic at all, you can remove the listener on port 80 and the corresponding HTTPRoute.

Caution:

Always thoroughly review the generated output and logs.

After manually applying these changes, the Gateway API manifests might look as follows.

```yaml
---
apiVersion: gateway.networking.k8s.io/v1
kind: Gateway
metadata:
  annotations:
    gateway.networking.k8s.io/generator: ingress2gateway-dev
  name: nginx
  namespace: my-ns
spec:
  gatewayClassName: nginx
  listeners:
  - hostname: my-host.example.com
    name: my-host-example-com-https
    port: 443
    protocol: HTTPS
    tls:
      certificateRefs:
      - group: ""
        kind: Secret
        name: my-secret
---
apiVersion: gateway.networking.k8s.io/v1
kind: HTTPRoute
metadata:
  annotations:
    gateway.networking.k8s.io/generator: ingress2gateway-dev
  name: my-ingress-my-host-example-com
  namespace: my-ns
spec:
  hostnames:
  - my-host.example.com
  parentRefs:
  - name: nginx
    port: 443
  rules:
  - backendRefs:
    - name: website-service
      port: 80
    filters:
    - cors:
        allowCredentials: true
        allowHeaders:
        - DNT
        ...
        allowMethods:
        - GET
        ...
        allowOrigins:
        - '*'
        maxAge: 1728000
      type: CORS
    matches:
    - path:
        type: RegularExpression
        value: /users/(\d+)
    name: rule-0
    timeouts:
      request: 3s
```

4. Verify

Now that you have Gateway API manifests, you should thoroughly test them in a development cluster. In this case, you should at least double-check that your Gateway API implementation's maximum body size defaults are appropriate for you and verify that a three-second timeout is enough.

After validating behavior in a development cluster, deploy your Gateway API configuration alongside your existing Ingress. We strongly suggest that you then gradually shift traffic using weighted DNS, your cloud load balancer, or traffic-splitting features of your platform. This way, you can quickly recover from any misconfiguration that made it through your tests.
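The gradual shift can be pictured as a sequence of weights applied to incoming requests. The sketch below is a toy simulation, not a DNS or load balancer configuration: the function names, the four-step schedule, and the request counts are all hypothetical, and real traffic splitting is done by your DNS provider, cloud load balancer, or platform.

```python
import random
from collections import Counter


def shift_schedule(steps: int) -> list[int]:
    """Percent of traffic sent to the Gateway API path at each step, ending at 100."""
    return [round(100 * i / steps) for i in range(1, steps + 1)]


def pick_backend(gateway_percent: int, rng: random.Random) -> str:
    """Choose a data path for one request according to the current weight."""
    return "gateway" if rng.random() * 100 < gateway_percent else "ingress"


def simulate(gateway_percent: int, requests: int = 10_000, seed: int = 0) -> Counter:
    """Count how many simulated requests land on each data path."""
    rng = random.Random(seed)
    return Counter(pick_backend(gateway_percent, rng) for _ in range(requests))


for percent in shift_schedule(4):  # 25, 50, 75, 100
    print(percent, dict(simulate(percent)))
```

Rolling back is the same operation in reverse: setting the Gateway API weight back to 0 returns all traffic to the existing Ingress path, which is why it helps to keep both running until the shift is complete.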

Finally, when you have shifted all your traffic to your Gateway API controller, delete your Ingress resources and uninstall your Ingress controller.

Conclusion

The Ingress2Gateway 1.0 release is just the beginning, and we hope that you use Ingress2Gateway to safely migrate to Gateway API. As we approach the March 2026 Ingress-NGINX retirement, we invite the community to help us increase our configuration coverage, expand testing, and improve UX.

Resources about Gateway API

The scope of Gateway API can be daunting. Here are some resources to help you work with Gateway API:

- Listener sets allow application developers to manage gateway listeners.

- gwctl gives you a comprehensive view of your Gateway resources, such as attachments and linter errors.

- Gateway API Slack: #sig-network-gateway-api on Kubernetes Slack

- Ingress2Gateway Slack: #sig-network-ingress2gateway on Kubernetes Slack

- GitHub: kubernetes-sigs/ingress2gateway

Running Agents on Kubernetes with Agent Sandbox

The landscape of artificial intelligence is undergoing a massive architectural shift. In the early days of generative AI, interacting with a model was often treated as a transient, stateless function call: a request that spun up, executed for perhaps 50 milliseconds, and terminated.

Today, the world is witnessing AI v2 eating AI v1. The ecosystem is moving from short-lived, isolated tasks to deploying multiple, coordinated AI agents that run constantly. These autonomous agents need to maintain context, use external tools, write and execute code, and communicate with one another over extended periods.

As platform engineering teams look for the right infrastructure to host these new AI workloads, one platform stands out as the natural choice: Kubernetes. However, mapping these unique agentic workloads to traditional Kubernetes primitives requires a new abstraction.

This is where the new Agent Sandbox project (currently in development under SIG Apps) comes into play.

The Kubernetes advantage (and the abstraction gap)

Kubernetes is the de facto standard for orchestrating cloud-native applications precisely because it solves the challenges of extensibility, robust networking, and ecosystem maturity. However, as AI evolves from short-lived inference requests to long-running, autonomous agents, we are seeing the emergence of a new operational pattern.

AI agents are typically isolated, stateful, singleton workloads. They act as a digital workspace or execution environment for an LLM. An agent needs a persistent identity and a secure scratchpad for writing and executing (often untrusted) code. Crucially, because these long-lived agents are expected to be mostly idle except for brief bursts of activity, they require a lifecycle that supports mechanisms like suspension and rapid resumption.

While you could theoretically approximate this by stringing together a StatefulSet of size 1, a headless Service, and a PersistentVolumeClaim for every single agent, managing this at scale becomes an operational nightmare.

Because of these unique properties, traditional Kubernetes primitives don't perfectly align.

Introducing Kubernetes Agent Sandbox

To bridge this gap, SIG Apps is developing agent-sandbox. The project introduces a declarative, standardized API specifically tailored for singleton, stateful workloads like AI agent runtimes.

At its core, the project introduces the Sandbox CRD. It acts as a lightweight, single-container environment built entirely on Kubernetes primitives, offering:

- Strong isolation for untrusted code: When an AI agent generates and executes code autonomously, security is paramount. The Sandbox custom resource natively supports different runtimes, like gVisor or Kata Containers. This provides the necessary kernel and network isolation required for multi-tenant, untrusted execution.

- Lifecycle management: Unlike traditional web servers optimized for steady, stateless traffic, an AI agent operates as a stateful workspace that may be idle for hours between tasks. Agent Sandbox supports scaling these idle environments to zero to save resources, while ensuring they can resume exactly where they left off.

- Stable identity: Coordinated multi-agent systems require stable networking. Every Sandbox is given a stable hostname and network identity, allowing distinct agents to discover and communicate with each other seamlessly.

Scaling agents with extensions

Because the AI space is moving incredibly quickly, we built an Extensions API layer that enables even faster iteration and development.

Starting a new pod adds about a second of overhead. That's perfectly fine when deploying a new version of a microservice, but when an agent is invoked after being idle, a one-second cold start breaks the continuity of the interaction. It forces the user or the orchestrating service to wait for the environment to provision before the model can even begin to think or act. SandboxWarmPool solves this by maintaining a pool of pre-provisioned Sandbox pods, effectively eliminating cold starts. Users or orchestration services can simply issue a SandboxClaim against a SandboxTemplate, and the controller immediately hands over a pre-warmed, fully isolated environment to the agent.
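The warm pool idea can be illustrated with a small in-memory model. This is a toy sketch and not the Agent Sandbox controller or its API: the WarmPool class, its methods, and the sandbox names are hypothetical, and provisioning is reduced to generating a name.

```python
from collections import deque
from dataclasses import dataclass, field
from itertools import count


@dataclass
class WarmPool:
    """Toy model of a pool of pre-provisioned sandboxes (not the real controller)."""

    size: int
    cold_starts: int = 0
    _ids: count = field(default_factory=lambda: count(1), repr=False)
    _ready: deque = field(default_factory=deque, repr=False)

    def __post_init__(self) -> None:
        self.refill()

    def _provision(self) -> str:
        # Stands in for starting a pod, which adds about a second of overhead.
        return f"sandbox-{next(self._ids)}"

    def refill(self) -> None:
        """Top the pool back up to its target size."""
        while len(self._ready) < self.size:
            self._ready.append(self._provision())

    def claim(self) -> str:
        """Hand over a pre-warmed sandbox if one is ready; otherwise cold start."""
        if self._ready:
            return self._ready.popleft()
        self.cold_starts += 1
        return self._provision()


pool = WarmPool(size=2)
claims = [pool.claim() for _ in range(3)]  # the third claim finds the pool empty
print(claims, "cold starts:", pool.cold_starts)
pool.refill()  # a controller would do this continuously in the background
```

The point of the model is the trade-off: a larger pool absorbs bigger bursts of claims without cold starts, at the cost of keeping more idle environments provisioned.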

Quick start

Ready to try it yourself? You can install the Agent Sandbox core components and extensions directly into your learning or sandbox cluster, using your chosen release.

We recommend you use the latest release as the project is moving fast.

```shell
# Replace "vX.Y.Z" with a specific version tag (e.g., "v0.1.0") from
# https://github.com/kubernetes-sigs/agent-sandbox/releases
export VERSION="vX.Y.Z"

# Install the core components:
kubectl apply -f https://github.com/kubernetes-sigs/agent-sandbox/releases/download/${VERSION}/manifest.yaml

# Install the extensions components (optional):
kubectl apply -f https://github.com/kubernetes-sigs/agent-sandbox/releases/download/${VERSION}/extensions.yaml

# Install the Python SDK (optional):
# Create a virtual Python environment
python3 -m venv .venv
source .venv/bin/activate

# Install from PyPI
pip install k8s-agent-sandbox
```

Once installed, you can try out the Python SDK for AI agents or deploy one of the ready-to-use examples to see how easy it is to spin up an isolated agent environment.

The future of agents is cloud native

Whether it’s a 50-millisecond stateless task, or a multi-week, mostly-idle collaborative process, extending Kubernetes with primitives designed specifically for isolated stateful singletons allows us to leverage all the robust benefits of the cloud-native ecosystem.

The Agent Sandbox project is open source and community-driven. If you are building AI platforms, developing agentic frameworks, or are interested in Kubernetes extensibility, we invite you to get involved:

- Check out the project on GitHub: kubernetes-sigs/agent-sandbox

- Join the discussion in the #sig-apps and #agent-sandbox channels on the Kubernetes Slack.

Securing Production Debugging in Kubernetes

During production debugging, the fastest route is often broad access such as cluster-admin (a ClusterRole that grants administrator-level access), shared bastions/jump boxes, or long-lived SSH keys. It works in the moment, but it comes with two common problems: auditing becomes difficult, and temporary exceptions have a way of becoming routine.

This post offers my recommendations for good practices applicable to existing Kubernetes environments with minimal tooling changes:

- Least privilege with RBAC

- Short-lived, identity-bound credentials

- An SSH-style handshake model for cloud native debugging

A good architecture for securing production debugging workflows is to use a just-in-time secure shell gateway (often deployed as an on-demand pod in the cluster). It acts as an SSH-style “front door” that makes temporary access actually temporary. Engineers authenticate with short-lived, identity-bound credentials and establish a session to the gateway, and the gateway uses the Kubernetes API and RBAC to control what they can do, such as access to pods/log, pods/exec, and pods/portforward. Sessions expire automatically, and both the gateway logs and Kubernetes audit logs capture who accessed what and when, without shared bastion accounts or long-lived keys.

1) Using an access broker on top of Kubernetes RBAC

RBAC controls who can do what in Kubernetes. Many Kubernetes environments rely primarily on RBAC for authorization, although Kubernetes also supports other authorization modes such as Webhook authorization. You can enforce access directly with Kubernetes RBAC, or put an access broker in front of the cluster that still relies on Kubernetes permissions under the hood. In either model, Kubernetes RBAC remains the source of truth for what the Kubernetes API allows and at what scope.

An access broker adds controls that RBAC does not cover well. For example, it can decide whether a request is auto-approved or requires manual approval, whether a user can run a command, and which commands are allowed in a session. It can also manage group membership so that you grant permissions to groups instead of individual users. Kubernetes RBAC can allow actions such as pods/exec, but it cannot restrict which commands run inside an exec session.

With that model, Kubernetes RBAC defines the allowed actions for a user or group (for example, an on-call team in a single namespace). I recommend you only define access rules that grant rights to groups or to ServiceAccounts, never to individual users. The broker or identity provider then adds or removes users from that group as needed.

The broker can also enforce extra policy on top, like which commands are permitted in an interactive session and which requests can be auto-approved versus require manual approval. That policy can live in a JSON or XML file and be maintained through code review, so updates go through a formal pull request and are reviewed like any other production change.

Example: a namespaced on-call debug Role

apiVersion: rbac.authorization.k8s.io/v1
kind: Role
metadata:
  name: oncall-debug
  namespace: <namespace>
rules:
  # Discover what’s running
  - apiGroups: [""]
    resources: ["pods", "events"]
    verbs: ["get", "list", "watch"]
  # Read logs
  - apiGroups: [""]
    resources: ["pods/log"]
    verbs: ["get"]
  # Interactive debugging actions
  - apiGroups: [""]
    resources: ["pods/exec", "pods/portforward"]
    verbs: ["create"]
  # Understand rollout/controller state
  - apiGroups: ["apps"]
    resources: ["deployments", "replicasets"]
    verbs: ["get", "list", "watch"]
  # Optional: allow kubectl debug ephemeral containers
  # (recent kubectl versions send a PATCH to this subresource, so "patch" is included)
  - apiGroups: [""]
    resources: ["pods/ephemeralcontainers"]
    verbs: ["update", "patch"]

Bind the Role to a group (rather than individual users) so membership can be managed through your identity provider:

apiVersion: rbac.authorization.k8s.io/v1
kind: RoleBinding
metadata:
  name: oncall-debug
  namespace: <namespace>
subjects:
  - kind: Group
    name: oncall-<team-name>
    apiGroup: rbac.authorization.k8s.io
roleRef:
  kind: Role
  name: oncall-debug
  apiGroup: rbac.authorization.k8s.io

2) Short-lived, identity-bound credentials

The goal is to use short-lived, identity-bound credentials that clearly tie a session to a real person and expire quickly. These credentials can include the user’s identity and the scope of what they’re allowed to do. They’re typically signed using a private key that stays with the engineer, such as a hardware-backed key (for example, a YubiKey), so they cannot be forged without access to that key.

You can implement this with Kubernetes-native authentication (for example, client certificates or an OIDC-based flow), or have the access broker from the previous section issue short-lived credentials on the user’s behalf. In many setups, Kubernetes still uses RBAC to enforce permissions based on the authenticated identity and groups/claims. If you use an access broker, it can also encode additional scope constraints in the credential and enforce them during the session, such as which cluster or namespace the session applies to and which actions (or approved commands) are allowed against pods or nodes. In either case, the credentials should be signed by a certificate authority (CA), and that CA should be rotated on a regular schedule (for example, quarterly) to limit long-term risk.

Option A: short-lived OIDC tokens

Many managed Kubernetes clusters already give you short-lived tokens. The main thing is to make sure your kubeconfig refreshes them automatically, rather than holding a long-lived token that someone copied into the file.

For example:

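users:
  - name: oncall
    user:
      exec:
        apiVersion: client.authentication.k8s.io/v1
        command: cred-helper
        args: ["--cluster=prod", "--ttl=30m"]
        # interactiveMode is required when apiVersion is client.authentication.k8s.io/v1
        interactiveMode: IfAvailable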
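The kubeconfig above handles the client side. If you operate the API server yourself, structured authentication configuration (a file passed with the --authentication-config flag) is one way to reject tokens that live too long. The following is a minimal sketch, not a drop-in configuration. The issuer URL, audience, claim names, and prefixes are placeholders, the rule assumes your identity provider sets the nbf claim, and the apiVersion depends on your Kubernetes release, so check which version your API server serves.

```yaml
apiVersion: apiserver.config.k8s.io/v1beta1
kind: AuthenticationConfiguration
jwt:
  - issuer:
      url: https://idp.example.com      # placeholder issuer
      audiences:
        - kubernetes-prod               # placeholder audience
    claimValidationRules:
      # Reject tokens whose total lifetime exceeds 30 minutes
      # (assumes the token carries both exp and nbf claims)
      - expression: 'claims.exp - claims.nbf <= 1800'
        message: total token lifetime must not exceed 30 minutes
    claimMappings:
      username:
        claim: sub
        prefix: "oidc:"
      groups:
        claim: groups                   # placeholder claim name
        prefix: "oidc:"
```

The mapped group names are what your RoleBindings reference, so the prefix chosen here has to match the group names used in RBAC. Managed control planes often do not expose this file; in that case the token lifetime is set on the identity provider side.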

Option B: short-lived client certificates (X.509)

If your API server (or your access broker from the previous section) is set up to trust a client CA, you can use short-lived client certificates for debugging access. The idea is:

- The private key is created and kept on the engineer’s machine (ideally hardware-backed, like a non-exportable key in a YubiKey/PIV token).

- A short-lived certificate is issued (often via the CertificateSigningRequest API, or your access broker from the previous section, with a TTL).

- RBAC maps the authenticated identity to a minimal Role.

This is straightforward to operationalize with the Kubernetes CertificateSigningRequest API.

Generate a key and CSR locally:

# Generate a private key.
# This could instead be generated within a hardware token;
# OpenSSL and several similar tools include support for that.
openssl genpkey -algorithm Ed25519 -out oncall.key
openssl req -new -key oncall.key -out oncall.csr \
  -subj "/CN=user/O=oncall-payments"

Create a CertificateSigningRequest with a short expiration:

apiVersion: certificates.k8s.io/v1
kind: CertificateSigningRequest
metadata:
  name: oncall-<user>-20260218
spec:
  request: <base64-encoded oncall.csr>
  signerName: kubernetes.io/kube-apiserver-client
  expirationSeconds: 1800 # 30 minutes
  usages:
    - client auth

After the CSR is approved and signed, you extract the issued certificate and use it together with the private key to authenticate, for example via kubectl.
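One way to wire the issued certificate into kubectl is a kubeconfig user entry that points at the certificate and key files. This is a minimal sketch: the user name and file paths are placeholders, and it assumes the key is stored as a file rather than inside a hardware token (a token-backed key would need a different mechanism, such as an exec credential plugin).

```yaml
users:
  - name: oncall
    user:
      # Issued certificate, decoded from the CSR's .status.certificate field (placeholder path)
      client-certificate: /home/<user>/.kube/oncall.crt
      # Private key generated locally in the earlier step (placeholder path)
      client-key: /home/<user>/.kube/oncall.key
```

Because the certificate expires after the requested lifetime, this entry stops working on its own. The next session needs a new CSR and a fresh certificate.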

3) Use a just-in-time access gateway to run debugging commands

Once you have short-lived credentials, you can use them to open a secure shell session to a just-in-time access gateway, often exposed over SSH and created on demand. If the gateway is exposed over SSH, a common pattern is to issue the engineer a short-lived OpenSSH user certificate for the session. The gateway trusts your SSH user CA, authenticates the engineer at connection time, and then applies the approved session policy before making Kubernetes API calls on the user’s behalf. OpenSSH certificates are separate from Kubernetes X.509 client certificates, so these are usually treated as distinct layers.

The resulting session should also be scoped so it cannot be reused outside of what was approved. For example, the gateway or broker can limit it to a specific cluster and namespace, and optionally to a narrower target such as a pod or node. That way, even if someone tries to reuse the access, it will not work outside the intended scope. After the session is established, the gateway executes only the allowed actions and records what happened for auditing.
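On the Kubernetes side, the audit trail for these sessions depends on the API server's audit policy. The fragment below is a sketch of rules you might merge into an existing policy so that interactive debugging requests are always recorded. The chosen levels are assumptions to adapt, and on managed clusters the provider may control the audit policy.

```yaml
apiVersion: audit.k8s.io/v1
kind: Policy
# Skip the RequestReceived stage so each request is not logged twice
omitStages:
  - "RequestReceived"
rules:
  # Record who opened exec, attach, or port-forward sessions, and against which pod.
  # No verbs are listed, so the rule matches every verb on these subresources.
  - level: Metadata
    resources:
      - group: ""
        resources: ["pods/exec", "pods/attach", "pods/portforward"]
  # Record who read logs from which pod
  - level: Metadata
    resources:
      - group: ""
        resources: ["pods/log"]
  # Record the request body when an ephemeral debug container is added
  - level: Request
    resources:
      - group: ""
        resources: ["pods/ephemeralcontainers"]
```

Audit events at these levels identify the user, groups, target pod, and time. They do not capture the keystrokes or output of an exec session, which is why the gateway's own session log is kept alongside them.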

Example: Namespace-scoped role bindings

apiVersion: rbac.authorization.k8s.io/v1
kind: Role
metadata:
  name: jit-debug
  namespace: <namespace>
  annotations:
    kubernetes.io/description: >
      Colleagues performing semi-privileged debugging, with access provided
      just in time and on demand.
rules:
  - apiGroups: [""]
    resources: ["pods", "pods/log"]
    verbs: ["get", "list", "watch"]
  - apiGroups: [""]
    resources: ["pods/exec"]
    verbs: ["create"]
---
apiVersion: rbac.authorization.k8s.io/v1
kind: RoleBinding
metadata:
  name: jit-debug
  namespace: <namespace>
subjects:
  - kind: Group
    name: jit:oncall:<namespace> # mapped from the short-lived credential (cert/OIDC)
    apiGroup: rbac.authorization.k8s.io
roleRef:
  kind: Role
  name: jit-debug
  apiGroup: rbac.authorization.k8s.io

These RBAC objects, and the rules they define, allow debugging only within the specified namespace; attempts to access other namespaces are not allowed.
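If an approved session should reach only one pod, RBAC's resourceNames field is one option for narrowing the Role further. The sketch below assumes a broker that creates and deletes a Role like this per session; the Role name and the pod name are placeholders. Pod names change whenever a pod is replaced, so a static Role of this kind goes stale quickly. It is worth confirming the behavior in your cluster with kubectl auth can-i before relying on it.

```yaml
apiVersion: rbac.authorization.k8s.io/v1
kind: Role
metadata:
  name: jit-debug-<session-id>   # hypothetical per-session name
  namespace: <namespace>
rules:
  # Read only the approved pod and its logs
  - apiGroups: [""]
    resources: ["pods", "pods/log"]
    resourceNames: ["<pod-name>"]
    verbs: ["get"]
  # Exec only into the approved pod
  - apiGroups: [""]
    resources: ["pods/exec"]
    resourceNames: ["<pod-name>"]
    verbs: ["create"]
```

The list and watch verbs are left out on purpose, because a resourceNames restriction only applies to those verbs when the client also filters by name.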

Example: Cluster-scoped role binding

apiVersion: rbac.authorization.k8s.io/v1
kind: ClusterRole
metadata:
  name: jit-cluster-read
rules:
  - apiGroups: [""]
    resources: ["nodes", "namespaces"]
    verbs: ["get", "list", "watch"]
---
apiVersion: rbac.authorization.k8s.io/v1
kind: ClusterRoleBinding
metadata:
  name: jit-cluster-read
subjects:
  - kind: Group
    name: jit:oncall:cluster
    apiGroup: rbac.authorization.k8s.io
roleRef:
  kind: ClusterRole
  name: jit-cluster-read
  apiGroup: rbac.authorization.k8s.io

These RBAC rules grant cluster-wide read access (for example, to nodes and namespaces) and should be used only for workflows that truly require cluster-scoped resources.

Finer-grained restrictions like “only this pod/node” or “only these commands” are typically enforced by the access gateway/broker during the session, but Kubernetes also offers other options, such as ValidatingAdmissionPolicy for restricting writes and webhook authorization for custom authorization across verbs.
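As an illustration of the ValidatingAdmissionPolicy option, the sketch below rejects ephemeral debug containers unless their image comes from an approved registry path. The policy name and the registry prefix are hypothetical, and the CEL expression is a starting point to test in a non-production cluster, not a vetted policy.

```yaml
apiVersion: admissionregistration.k8s.io/v1
kind: ValidatingAdmissionPolicy
metadata:
  name: debug-image-allowlist          # hypothetical name
spec:
  failurePolicy: Fail
  matchConstraints:
    resourceRules:
      - apiGroups: [""]
        apiVersions: ["v1"]
        operations: ["UPDATE"]
        resources: ["pods/ephemeralcontainers"]
  validations:
    # Allow the request only if every ephemeral container uses an approved image
    - expression: >-
        !has(object.spec.ephemeralContainers) ||
        object.spec.ephemeralContainers.all(c,
          has(c.image) && c.image.startsWith("registry.example.com/debug/"))
      message: "Ephemeral debug containers must use an approved debug image."
---
apiVersion: admissionregistration.k8s.io/v1
kind: ValidatingAdmissionPolicyBinding
metadata:
  name: debug-image-allowlist
spec:
  policyName: debug-image-allowlist
  validationActions: ["Deny"]
```

This restricts which tools can be brought into a pod through kubectl debug. It does not restrict which commands run inside an exec session; that remains a job for the gateway or broker.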

In environments with stricter access controls, you can add an extra, short-lived session mediation layer to separate session establishment from privileged actions. Both layers are ephemeral, use identity-bound expiring credentials, and produce independent audit trails. The mediation layer handles session setup/forwarding, while the execution layer performs only RBAC-authorized Kubernetes actions. This separation can reduce exposure by narrowing responsibilities, scoping credentials per step, and enforcing end-to-end session expiry.

References

- Authorization

- Using RBAC Authorization

- Authenticating

- Certificates and Certificate Signing Requests

- Issue a Certificate for a Kubernetes API Client Using a CertificateSigningRequest

- Role Based Access Control Good Practices

Disclaimer: The views expressed in this post are solely those of the author and do not reflect the views of the author’s employer or any other organization.

The Invisible Rewrite: Modernizing the Kubernetes Image Promoter

Every container image you pull from registry.k8s.io got there through kpromo, the Kubernetes image promoter. It copies images from staging registries to production, signs them with cosign, replicates signatures across more than 20 regional mirrors, and generates SLSA provenance attestations. If this tool breaks, no Kubernetes release ships. Over the past few weeks, we rewrote its core from scratch, deleted 20% of the codebase, made it dramatically faster, and nobody noticed. That was the whole point.

A bit of history

The image promoter started in late 2018 as an internal Google project by Linus Arver. The goal was simple: replace the manual, Googler-gated process of copying container images into k8s.gcr.io with a community-owned, GitOps-based workflow. Push to a staging registry, open a PR with a YAML manifest, get it reviewed and merged, and automation handles the rest. KEP-1734 formalized this proposal.

In early 2019, the code moved to kubernetes-sigs/k8s-container-image-promoter and grew quickly. Over the next few years, Stephen Augustus consolidated multiple tools (cip, gh2gcs, krel promote-images, promobot-files) into a single CLI called kpromo. The repository was renamed to promo-tools. Adolfo Garcia Veytia (Puerco) added cosign signing and SBOM support. Tyler Ferrara built vulnerability scanning. Carlos Panato kept the project in a healthy and releasable state. 42 contributors made about 3,500 commits across more than 60 releases.

It worked. But by 2025 the codebase carried the weight of seven years of incremental additions from multiple SIGs and subprojects. The README said it plainly: you will see duplicated code, multiple techniques for accomplishing the same thing, and several TODOs.

The problems we needed to solve

Production promotion jobs for Kubernetes core images regularly took over 30 minutes and frequently failed with rate limit errors. The core promotion logic had grown into a monolith that was hard to extend and difficult to test, making new features like provenance or vulnerability scanning painful to add.